The governance frameworks every deployment must satisfy

For UK public sector organisations, this means aligning every AI deployment to core governance frameworks. These include UK GDPR, WCAG 2.2, ISO 42001 and ISO 27001, GDS Service Standards, NCSC Cyber Essentials, the OECD AI Principles, and the 10 principles of the UK Government AI Playbook.

Together these frameworks cover data protection, AI management systems, ethical standards and operational practice. They do not operate in isolation. A compliant AI deployment satisfies all of these simultaneously.

Find out what the governance foundation looks like in practice

Why AI governance fails in practice

AI governance fails when it is treated as a compliance exercise rather than an operational requirement. The pattern is consistent across public sector organisations: AI tools are selected, pilots begin, and governance assessments are initiated after deployment has already started. At that point, governance becomes an obstacle rather than an enabler.

The consequences are predictable. Deployments stall in approval processes. Data protection officers raise concerns about processing that was never assessed at the design stage. IT and security leads identify risks that cannot be mitigated retrospectively. Projects are paused or abandoned. The organisation loses confidence in AI as a viable path forward.

The risk is not theoretical. Public sector AI deployments are subject to scrutiny from the Information Commissioner's Office, internal audit teams, elected members and the public. An AI decision that cannot be explained, audited or defended is a liability. The organisation, not the platform, carries that liability by default.

Governance infrastructure embedded at the platform level

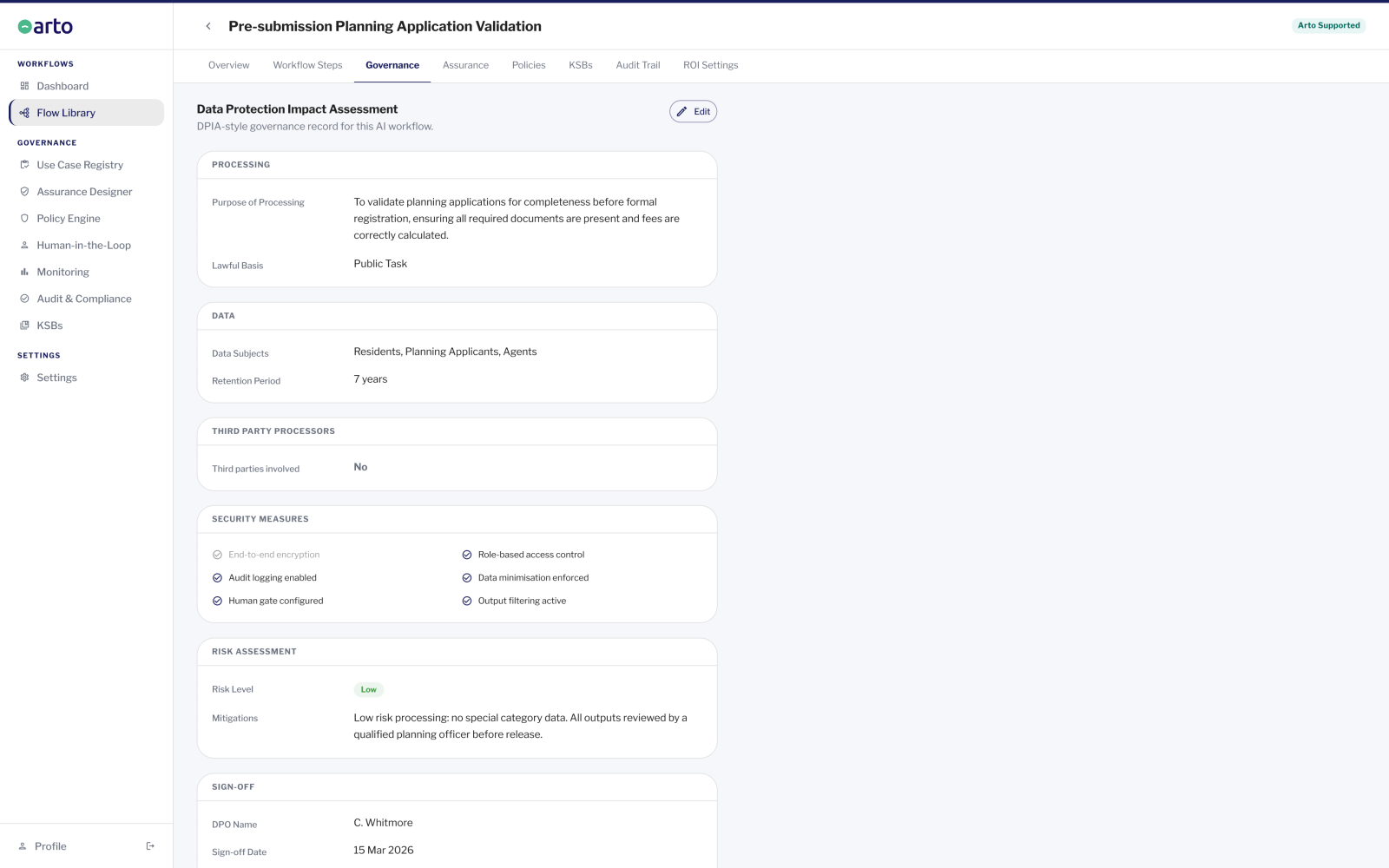

Arto is built governance-first. Every workflow created or deployed on the platform automatically inherits a governance framework built on all four core compliance standards. There is no separate configuration step. There is no governance layer to add afterwards. The compliance infrastructure is the platform.

Arto Supported Flows are designed and built to align with key standards including ISO 27001, ISO 42001 and UK GDPR. An assurance case aligned to the 10 GDS AI Playbook principles is pre-populated and ready to deploy. The audit trail is immutable, timestamped and exportable for ICO review, legal challenge or internal audit. Human oversight is enforced by default: every AI decision that requires approval has a mandatory human-in-the-loop gate before it can proceed.

The result is a controlled, auditable system. Governance teams can assess and approve deployments with confidence. Service teams can operate workflows without requiring technical expertise in compliance. Leadership has a complete, verifiable record of every AI decision the organisation has made.
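One common way to make an audit trail tamper-evident is to chain each entry to the hash of the one before it. The sketch below illustrates that general technique only; it is not a description of Arto's implementation, and every name in it (`append_entry`, `verify_trail`, `export_trail`, the entry fields) is an assumption made for illustration.

```python
import hashlib
import json
from datetime import datetime, timezone

# Hypothetical starting value for the chain; not taken from any Arto documentation.
GENESIS_HASH = "0" * 64


def _digest(body: dict) -> str:
    """Hash a canonical JSON encoding of an entry body."""
    payload = json.dumps(body, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(trail: list, actor: str, action: str, detail: str) -> dict:
    """Append a timestamped, attributed entry linked to the previous entry's hash."""
    body = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "actor": actor,
        "action": action,
        "detail": detail,
        "prev_hash": trail[-1]["hash"] if trail else GENESIS_HASH,
    }
    entry = {**body, "hash": _digest(body)}
    trail.append(entry)
    return entry


def verify_trail(trail: list) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev = GENESIS_HASH
    for entry in trail:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev or entry["hash"] != _digest(body):
            return False
        prev = entry["hash"]
    return True


def export_trail(trail: list) -> str:
    """Serialise the trail as JSON, for example to hand to a reviewer."""
    return json.dumps(trail, indent=2)
```

With this design a reviewer can run `verify_trail` on an exported copy and detect after-the-fact changes without trusting the system that produced it. A production system would also need write-once storage and access controls, which this sketch does not cover.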

Four frameworks every public sector AI deployment must satisfy

UK public sector AI deployments are expected to align with four core governance frameworks. Each covers a distinct dimension of responsible AI. Arto embeds all four at the platform level, so every workflow satisfies every framework automatically.

UK GDPR

Governs how personal data is processed in AI workflows. Covers lawful basis, data minimisation, DPIAs and the rights relating to solely automated decision-making under Article 22.

Full UK GDPR guidance

ISO 42001

AI management systems

The international standard for governing AI responsibly. Covers risk assessment, human oversight, transparency and continual improvement.

Full ISO 42001 guidance

OECD AI Principles

Ethical AI framework

Five internationally agreed principles adopted by the UK. Covers inclusive growth, human-centred values, transparency, robustness and accountability.

Full OECD AI guidance

UK Government AI Playbook

Operational standards

10 principles for responsible AI use in public services. Not legally binding but the expected standard of practice and increasingly referenced in procurement.

Full UK AI Playbook guidance

WCAG 2.2 accessibility standards also apply to all public sector AI deployments. Arto embeds WCAG compliance at the platform level. Accessibility requirements are addressed within the compliance and data security sections of this site.

What a governed AI deployment looks like in practice

A governed AI deployment on Arto operates within a defined policy boundary. The AI agent involved is mapped to a real public sector role, with predefined knowledge, skills and behaviours that determine what it can and cannot do. It cannot act outside those boundaries.

- Every Arto Supported Flow is designed and built to align with key standards including ISO 27001, ISO 42001 and UK GDPR. Human-in-the-loop gates enforce oversight at defined decision points. A policy check verifies that the data being processed is within the permitted scope, that the AI agent is operating within its defined role, and that the output meets the policy requirements for that workflow. If a check fails, the workflow does not proceed.

- Where a decision requires human approval, the workflow pauses at a human-in-the-loop gate. The responsible officer reviews the AI output, confirms the decision and provides a digital sign-off. That sign-off is timestamped, attributed and sealed permanently in the audit trail. It cannot be altered after the fact.

- The audit trail records what was processed, who approved what and what output was produced. It is available immediately for ICO review, legal challenge, procurement assessment or internal audit.
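The flow described above (checks on data scope, agent role and output, then a pause for human approval) can be sketched in a few lines. This is a minimal illustration under stated assumptions, not Arto's API: the class names, the `run_step` function and the output-length rule standing in for "policy requirements" are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AgentRole:
    """Hypothetical role definition: what the agent may do and which data it may see."""
    name: str
    permitted_actions: frozenset
    permitted_data_fields: frozenset


@dataclass
class WorkflowStep:
    action: str
    data_fields: set
    output: str
    requires_approval: bool = False


@dataclass(frozen=True)
class SignOff:
    """Frozen so the record cannot be modified once created."""
    officer: str
    timestamp: str


def policy_failures(role: AgentRole, step: WorkflowStep, max_output_chars: int = 2000) -> list:
    """Return a list of failed checks; an empty list means the step may proceed."""
    failures = []
    out_of_scope = step.data_fields - role.permitted_data_fields
    if out_of_scope:
        failures.append(f"data outside permitted scope: {sorted(out_of_scope)}")
    if step.action not in role.permitted_actions:
        failures.append(f"action '{step.action}' is outside role '{role.name}'")
    # Placeholder output rule (assumption): non-empty and within a length limit.
    if not step.output or len(step.output) > max_output_chars:
        failures.append("output does not meet policy requirements")
    return failures


def run_step(role: AgentRole, step: WorkflowStep, approver: Optional[str] = None) -> dict:
    """Block on any failed check; pause at the human gate until an officer signs off."""
    failures = policy_failures(role, step)
    if failures:
        return {"status": "blocked", "failures": failures}
    if step.requires_approval:
        if approver is None:
            return {"status": "awaiting_approval"}
        sign_off = SignOff(approver, datetime.now(timezone.utc).isoformat())
        return {"status": "completed", "sign_off": sign_off}
    return {"status": "completed", "sign_off": None}
```

For example, a step that touches a field outside the role's permitted data returns `"blocked"`, while a valid step flagged `requires_approval=True` returns `"awaiting_approval"` until `run_step` is called again with an `approver`. In a real system the sign-off would then be written to the audit trail.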

Where to go next

A step-by-step guide to building governance into an AI deployment in your organisation.

How to apply AI governance

How Arto embeds all four compliance frameworks at the platform level, and what that means for your deployments.

The AI Governance Foundation

How every AI decision is recorded, attributed and made available for review at any time.

Audit trail

How resident data is processed, where it is stored and why it never leaves the UK.

Data security

What a Data Protection Officer needs to assess an AI deployment and how Arto makes that process straightforward.

DPO sign-off

How to maintain centralised visibility and control of all AI activity across your organisation.

Governance hub

Assess whether Arto meets your governance requirements

Speak with a member of the Arto governance team. We will walk you through the compliance framework, the audit trail architecture and the data processing approach in detail. We can provide documentation for ICO review, DPO assessment or internal procurement.

- Speak to our team about your governance challenges

- Discover more about AI governance