The four frameworks are: UK GDPR, which governs how personal data is processed in AI workflows; ISO 42001, the international standard for AI management systems; the OECD AI Principles, which set the internationally agreed ethical framework for responsible AI; and the 10 principles of the UK Government AI Playbook, which translate those international principles into operational guidance for UK public services.

Together these frameworks cover data protection law, AI-specific risk management, ethical principles and operational practice. An AI deployment in the UK public sector that satisfies all four simultaneously is demonstrably governed, auditable and defensible.

Why all four frameworks apply to every AI deployment

A common question from organisations beginning their AI governance work is whether they need to comply with all four frameworks or whether satisfying one is sufficient. The answer is that each framework covers ground that the others do not.

UK GDPR is the data protection law.

It governs what data can be processed, on what legal basis and with what safeguards. It is not about AI specifically; it is about personal data. But because most public sector AI workflows process personal data, UK GDPR applies to nearly every deployment.

ISO 42001 is the AI-specific management standard.

It covers how AI systems are governed as systems: risk assessment, human oversight, transparency of AI reasoning and continual improvement. It fills the gap that ISO 27001 (information security) leaves, because ISO 27001 does not address AI governance directly.

The OECD AI Principles are the internationally agreed ethical baseline.

They cover the broader societal obligations of AI: inclusive growth, human-centred values, accountability. They were adopted by the UK government and form the ethical foundation that the DSIT AI Principles and the UK Government AI Playbook build on.

The UK Government AI Playbook translates the international principles into 10 operational standards for UK public services specifically.

It is the closest thing to a direct regulatory framework for public sector AI, and while it is not yet legally binding, it is already referenced in procurement frameworks and is likely to shape future audit requirements.

An organisation that satisfies UK GDPR but not ISO 42001 has a data protection framework but not an AI management framework. One that follows the AI Playbook but not UK GDPR may be operationally sound but legally exposed. Compliance with all four is the only approach that is simultaneously lawful, technically governed, ethically grounded and operationally defensible.

IN SHORT: Each framework covers ground the others do not. Satisfying all four is the only approach that is lawful, technically governed, ethically grounded and operationally defensible.
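
The gap argument above can be pictured as a simple lookup. This is a minimal sketch: the one-to-one mapping between frameworks and dimensions is a simplification of the wording on this page, not an official crosswalk, and `COVERAGE` and `coverage_gaps` are hypothetical names chosen for illustration.

```python
# Illustrative only: the dimension labels paraphrase this page, and the
# one-to-one mapping is an assumption made to keep the example small.
COVERAGE = {
    "UK GDPR": "lawful",
    "ISO 42001": "technically governed",
    "OECD AI Principles": "ethically grounded",
    "UK Government AI Playbook": "operationally defensible",
}


def coverage_gaps(satisfied):
    """Return the dimensions left uncovered by the frameworks satisfied so far."""
    return [dim for framework, dim in COVERAGE.items() if framework not in satisfied]


# An organisation that has only addressed UK GDPR still has three gaps.
print(coverage_gaps({"UK GDPR"}))
# Satisfying all four leaves no gaps.
print(coverage_gaps(set(COVERAGE)))
```

An organisation could use a table like this as a starting point for a gap analysis, replacing each single label with the specific controls its governance team has mapped to each framework.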

The four frameworks UK public sector AI must satisfy

Data protection law - UK GDPR

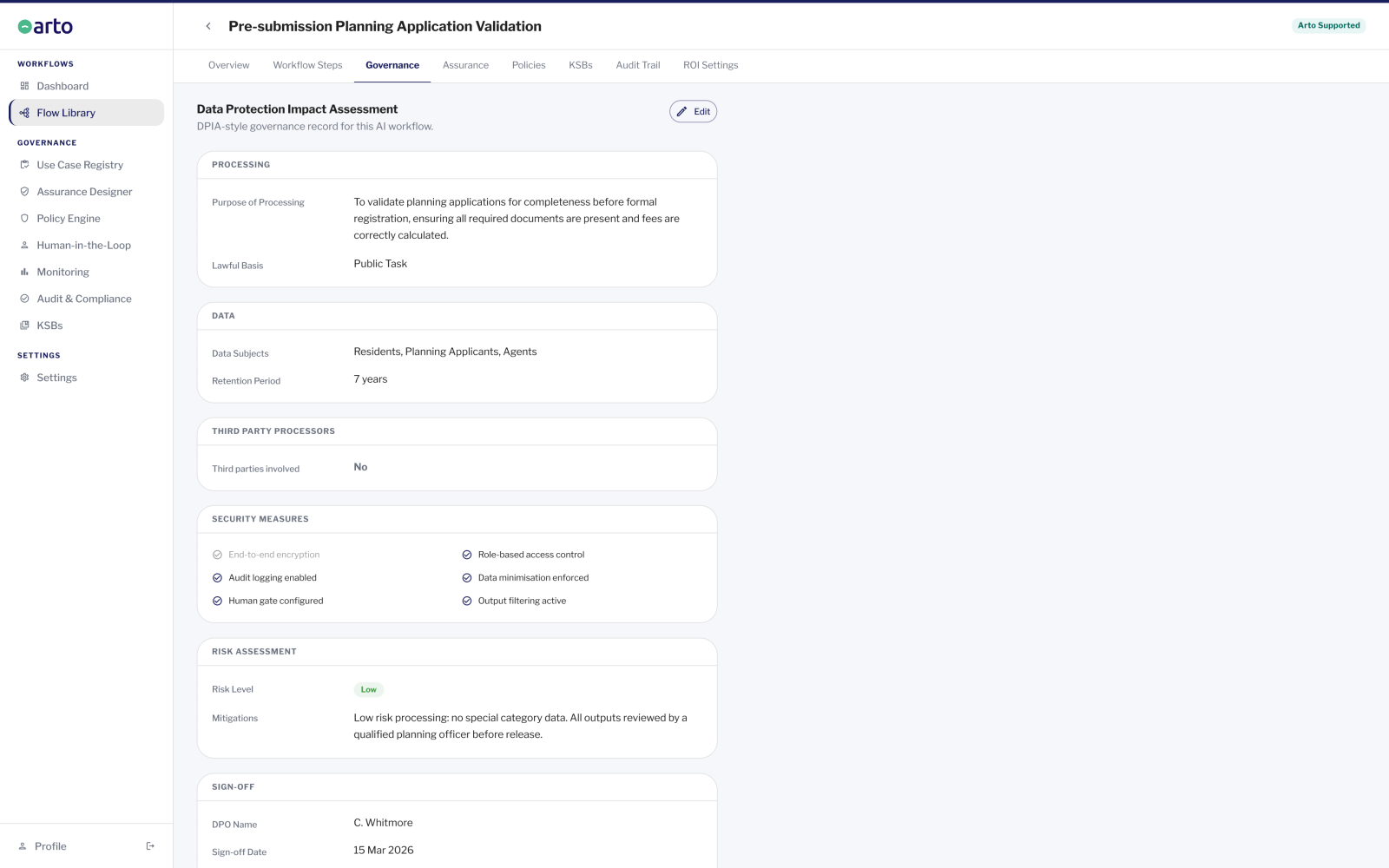

UK GDPR governs how personal data is collected, processed and stored. In the context of AI, it applies wherever a workflow processes personal data about residents, staff or service users, which covers the majority of public sector AI deployments.

Why it matters: UK GDPR is the legal foundation. A deployment that processes personal data without satisfying UK GDPR is unlawful, regardless of how well governed it is in other respects.

Key requirement: Organisations must identify a lawful basis for processing, conduct a data protection impact assessment (DPIA) where AI processing is likely to result in high risk, apply data minimisation principles and uphold individuals' rights, including the safeguards around solely automated decision-making under Article 22.

ARTO: Data processed through Arto never leaves the UK, is never used to train AI models, and is processed under public task lawful basis with data minimisation controls enforced at platform level.

AI management systems - ISO 42001

ISO 42001 is the international standard for AI management systems. It provides a structured framework for organisations to govern AI as a category of technology, covering risk assessment, human oversight, transparency of AI reasoning and a continual improvement cycle.

Why it matters: ISO 42001 fills the gap left by ISO 27001. Information security standards do not address AI-specific risk. For public sector organisations, ISO 42001 is the primary technical standard for demonstrating that AI is being managed safely and accountably, and it is increasingly referenced in procurement and audit requirements.

Key requirement: Organisations must maintain an AI policy, conduct AI-specific risk assessments, enforce human oversight at defined decision points, ensure AI outputs can be explained and traced, and operate a continual improvement process.
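
Two of these requirements, human oversight at a defined decision point and traceable AI outputs, can be sketched in a few lines. This is an illustrative sketch under stated assumptions, not text from ISO 42001 and not Arto's implementation: `AuditLog`, `oversight_gate` and the reviewer identifier are hypothetical names.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AuditLog:
    """Hypothetical append-only record of AI outputs and human decisions."""
    entries: list = field(default_factory=list)

    def record(self, event, **details):
        timestamp = datetime.now(timezone.utc).isoformat()
        self.entries.append({"at": timestamp, "event": event, **details})


def oversight_gate(ai_output, reasoning, reviewer, approve, log):
    """Release an AI output only after a named human reviewer has decided on it."""
    # Capture the output and its reasoning before any decision is made.
    log.record("ai_output", output=ai_output, reasoning=reasoning)
    approved = bool(approve(ai_output, reasoning))
    # Record who decided and what they decided, so the outcome can be traced.
    log.record("human_decision", reviewer=reviewer, approved=approved)
    return ai_output if approved else None


log = AuditLog()
released = oversight_gate(
    "Draft reply to resident",
    "Matched the request to the published housing policy",
    "officer-17",
    lambda output, why: True,  # stand-in for a real officer's review
    log,
)
```

The design choice worth noting is that the output and its reasoning are logged before the human decision, so a rejected output still leaves a trace.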

ARTO: Every Arto Supported Flow automatically inherits an AI management framework covering risk assessment, human-in-the-loop oversight gates, transparency of AI reasoning and a full immutable audit record, satisfying the core requirements of ISO 42001 without additional configuration.

Ethical AI framework - OECD AI Principles

The OECD AI Principles are five internationally agreed principles for responsible AI, adopted by the UK government. They set the ethical baseline for AI deployment: inclusive growth, sustainable development and wellbeing; human-centred values and fairness; transparency and explainability; robustness, security and safety; and accountability.

Why it matters: The OECD principles are the international parent of the UK's own AI governance frameworks. The DSIT AI Principles are a direct UK adaptation of the OECD principles, and the UK Government AI Playbook is their operational expression. Understanding the OECD principles means understanding where all UK AI governance guidance comes from.

Key requirement: Organisations must be able to demonstrate that their AI deployments are transparent in how they operate, explainable in the decisions they inform, accountable to the humans affected by them and robust against manipulation or failure.

ARTO: Arto's governance architecture is designed to align with the OECD principles: transparency through the audit trail, explainability through AI reasoning capture, accountability through human-in-the-loop gates and officer sign-off, and robustness through the Monitoring dashboard and anomaly detection.

Operational standards - UK Government AI Playbook

The UK Government AI Playbook sets out 10 principles for responsible AI use in public services. It translates the OECD principles and DSIT AI framework into practical operational guidance for UK councils, NHS trusts, government departments and other public bodies.

Why it matters: The AI Playbook is the most directly applicable framework for day-to-day AI deployment decisions in the UK public sector. While not yet legally binding, it represents the expected standard of practice and is already referenced in procurement frameworks. It is likely to form the basis of future regulatory requirements.

Key requirement: Organisations should be able to demonstrate compliance with all 10 principles for any AI deployment, covering purpose limitation, human oversight, data governance, transparency, accountability and continual review.

ARTO: Arto builds the AI Playbook principles into its governance framework by default. Each Arto Supported Flow is designed to align with the applicable principles, with the assurance case pre-populated and ready to deploy.

Accessibility: WCAG 2.2

In addition to the four frameworks, WCAG 2.2, the Web Content Accessibility Guidelines, applies to public sector AI deployments. Any AI-generated output, AI-assisted interface or AI-supported service that is accessed by the public or by staff must meet WCAG 2.2 accessibility requirements. This is a legal obligation under the Public Sector Bodies (Websites and Mobile Applications) (No. 2) Accessibility Regulations 2018.

Arto embeds WCAG 2.2 compliance at the platform level. It is not configured per workflow; it is part of the governance foundation that every deployment inherits. WCAG requirements are addressed within the AI Governance Foundation and the compliance sections of this site rather than on a separate standard page.

How Arto implements all four frameworks simultaneously

Every Arto Supported Flow automatically inherits governance controls that satisfy all four frameworks simultaneously. There is no compliance checklist to complete before deployment. Six checks run on every workflow execution, including verification of data scope (UK GDPR), agent role boundaries (ISO 42001), AI reasoning capture (OECD AI Principles) and oversight structure (UK Government AI Playbook).

The pre-populated assurance case, combined with the immutable audit trail, is the primary evidence a governance team needs to demonstrate compliance with the applicable frameworks to any approval body.
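
As a rough picture of how per-execution checks of this kind can be expressed, the sketch below models the four checks this page names. It is not Arto's implementation. The `Execution` fields, the `CHECKS` table and `failed_checks` are hypothetical, and the two remaining checks are left out because this page does not name them.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Execution:
    """Hypothetical summary of one workflow run; all field names are assumptions."""
    data_fields: set
    allowed_fields: set
    agent_actions: set
    permitted_actions: set
    reasoning_captured: bool
    reviewer: Optional[str]


# Each named check maps to a predicate over a single execution.
CHECKS = {
    "data scope (UK GDPR)": lambda e: e.data_fields <= e.allowed_fields,
    "agent role boundaries (ISO 42001)": lambda e: e.agent_actions <= e.permitted_actions,
    "AI reasoning capture (OECD AI Principles)": lambda e: e.reasoning_captured,
    "oversight structure (UK Government AI Playbook)": lambda e: e.reviewer is not None,
}


def failed_checks(execution):
    """Return the names of the checks this execution does not pass."""
    return [name for name, check in CHECKS.items() if not check(execution)]


run = Execution(
    data_fields={"name", "postcode"},
    allowed_fields={"name", "postcode", "case_id"},
    agent_actions={"summarise"},
    permitted_actions={"summarise", "draft"},
    reasoning_captured=True,
    reviewer=None,  # no officer assigned, so the oversight check fails
)
print(failed_checks(run))
```

Returning the names of failed checks, rather than a single pass or fail flag, gives the audit record something specific to show an approval body.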

Full guidance on each standard

Each of the four standards has a dedicated page providing full guidance: what it requires, how it applies specifically to UK public sector AI, whether it is mandatory, and how Arto satisfies it by default.

UK GDPR and AI: Lawful basis, data minimisation, DPIAs, Article 22 and data residency for public sector AI workflows.

ISO 42001: AI management systems, how it differs from ISO 27001, what it requires from a council and how Arto satisfies it.

OECD AI Principles: The five principles, their UK adoption, the OECD to DSIT to AI Playbook lineage, and what each principle means for a council.

UK Government AI Playbook: All 10 principles explained in plain language, whether they are mandatory and how Arto builds them in.

Understand how Arto satisfies all four frameworks

Speak with the Arto governance team to walk through how the platform satisfies UK GDPR, ISO 42001, the OECD AI Principles and the UK Government AI Playbook for your specific service area and deployment. We can provide documentation for DPO review, IT security assessment or procurement.
