This page provides information about UK GDPR obligations, not legal advice. For specific legal questions about your AI deployment, consult your Data Protection Officer or legal counsel.

UK GDPR applies to any operation performed on personal data — collecting it, reading it, analysing it, making decisions based on it. AI workflows in local government almost always involve personal data. A workflow that reads a resident's benefits record, analyses a safeguarding referral or processes a planning application is processing personal data under UK GDPR, regardless of whether any data is stored permanently by the AI system.

This is the most important point to understand: UK GDPR's obligations are triggered by processing, not by permanent storage. An AI system that reads a resident's record and produces an output, then discards that data, has still processed personal data. UK GDPR applies to every step of that process.

For public sector organisations deploying AI, this means five specific requirements apply to every AI workflow that touches personal data. Each is addressed below.

IN SHORT: UK GDPR applies to AI processing of personal data, not just permanent storage. Most public sector AI workflows touch personal data and are therefore subject to UK GDPR from the first operation.

Five UK GDPR requirements that apply to AI in local government

1. Lawful basis for processing

Under UK GDPR, every operation that processes personal data must have a lawful basis. For local government AI workflows, the most applicable basis is public task (Article 6(1)(e)), which covers processing necessary for the performance of a task carried out in the public interest or in the exercise of official authority. This basis applies to most operational AI workflows in councils: processing a benefits claim, triaging a safeguarding referral, validating a planning application and managing a council tax account are all tasks carried out in the exercise of official authority.

What this means in practice: Identify the lawful basis before deployment begins, not after. For most council AI workflows, public task will apply. Document the basis in the DPIA and in the records of processing activities. Do not rely on consent for operational AI workflows: consent is revocable, and it is inappropriate where individuals have no real choice about whether to interact with a public service.

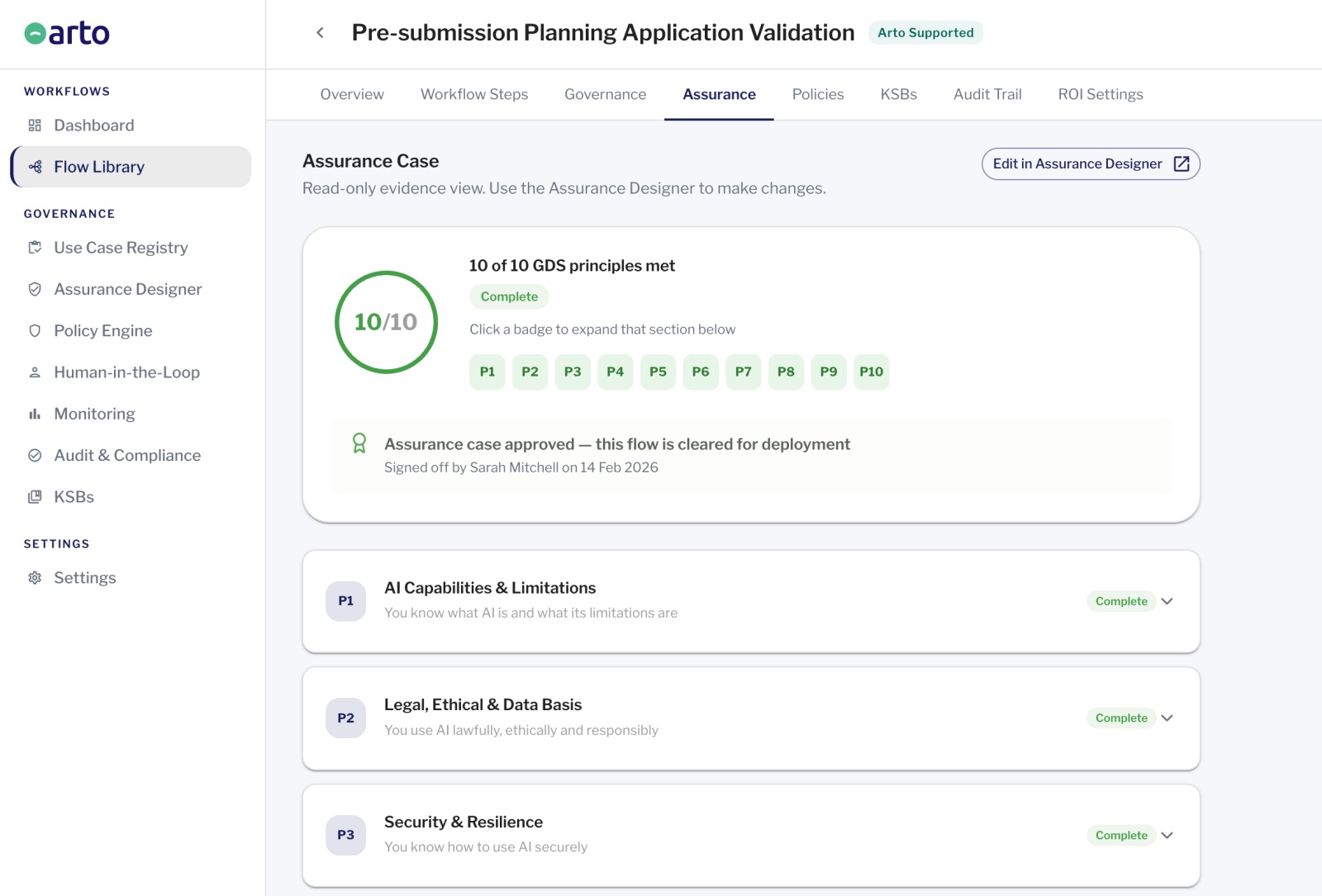

Arto: Arto Supported Flows are designed around public task lawful basis. The Assurance Designer documents the lawful basis for each workflow as part of the pre-deployment governance record. The audit trail records the legal basis applied to each execution.

2. Data minimisation

UK GDPR requires that personal data processed is limited to what is necessary for the specified purpose, no more. For AI workflows, this means the AI agent should only have access to the data it genuinely needs to perform its task. An AI workflow processing change of circumstances notifications does not need access to a resident's full case history. An AI triage tool does not need access to health records unless those records are directly relevant to the triage decision.

What this means in practice: Before deployment, map exactly what data the AI workflow will access. For each data category, confirm it is necessary for the specific purpose. Restrict the AI agent's data access to that defined scope. Document the data minimisation approach in the DPIA. Review the scope if the workflow's purpose changes.

Arto: Data minimisation controls are enforced at platform level in Arto. The AI agent's data access is defined by its KSB profile and cannot be extended without an explicit configuration change. Each workflow accesses only the data defined in its scope. The audit trail records exactly what data was accessed on each run, providing evidence of data minimisation for DPO review.
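As a platform-neutral illustration, the scoping step described in this section can be sketched as an allow-list filter applied before a record reaches the AI agent. This is a minimal sketch, not Arto's implementation: the scope name, field names and helper functions are all hypothetical.

```python
from typing import Any

# Hypothetical documented scope: the only fields this workflow may read.
CHANGE_OF_CIRCUMSTANCES_SCOPE = frozenset(
    {"claim_id", "household_size", "declared_income"}
)


def minimise(record: dict[str, Any], allowed_fields: frozenset[str]) -> dict[str, Any]:
    """Return a copy of the record holding only fields inside the documented scope."""
    return {key: value for key, value in record.items() if key in allowed_fields}


def withheld_fields(record: dict[str, Any], allowed_fields: frozenset[str]) -> list[str]:
    """List the field names kept away from the workflow, e.g. for an audit note."""
    return sorted(set(record) - allowed_fields)


# Illustrative record; the values are made up.
full_record = {
    "claim_id": "C-1001",
    "household_size": 3,
    "declared_income": 18250,
    "case_history": ["..."],
    "health_notes": "...",
}

scoped = minimise(full_record, CHANGE_OF_CIRCUMSTANCES_SCOPE)
withheld = withheld_fields(full_record, CHANGE_OF_CIRCUMSTANCES_SCOPE)
```

Keeping the scope as a named constant means any extension of the workflow's data access shows up as an explicit, reviewable change, which is the property a DPIA would want to document.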

3. Transparency

Individuals have the right to know when AI is being used to process their personal data and what decisions it informs. For public sector organisations, this means updating privacy notices to reflect AI use, informing residents when their data is processed by AI systems, and being able to explain, in plain language, what the AI does and what role it plays in decisions that affect them.

What this means in practice: Review and update your privacy notices to include AI processing activities before deployment. Ensure your records of processing activities are updated. Be prepared to explain AI involvement to residents on request. The level of explanation required will depend on the significance of the AI's role: a workflow that informs a decision requires more transparent communication than one that automates a purely administrative task.

Arto: Arto's audit trail provides the technical record of AI processing that supports transparency obligations. The audit trail for each run confirms what the AI did and what data it processed. This record is the basis for responding to individual transparency requests or subject access requests relating to AI-assisted decisions.

4. Data Protection Impact Assessments (DPIAs)

A DPIA is required under UK GDPR where processing is 'likely to result in a high risk' to individuals. The ICO's guidance indicates that AI systems processing personal data at scale, systems that make automated decisions with significant effects, and systems processing sensitive personal data are likely to require DPIAs. Most public sector AI workflows in areas like social care, safeguarding and benefits will meet this threshold.

What this means in practice: Conduct a DPIA before any AI workflow processes live personal data. The DPIA should cover: the nature and purpose of the processing, the necessity and proportionality of the AI approach, the risks to individuals, and the measures taken to mitigate those risks. The DPIA should be completed and signed off by the DPO before deployment. Do not treat the DPIA as a post-deployment formality.

Arto: Arto's Assurance Designer pre-populates the evidence base for DPIA supplementary documentation for each Arto Supported Flow. The test run audit trail and Assurance Designer record demonstrate that the technical safeguards documented in the DPIA are in place as specified. This significantly reduces the time required to complete the DPIA for a new Arto deployment.

5. Individual rights

UK GDPR grants individuals several rights relating to their personal data, including the right to access the data held about them, the right to rectification if that data is inaccurate, and, in certain circumstances, the right not to be subject to solely automated decision-making. Councils deploying AI must be able to respond to these rights requests in relation to AI-processed data, which means maintaining records of what data was processed and when.

What this means in practice: Ensure your subject access request process covers AI-processed data. Individuals have the right to request the personal data held about them, including data that was processed by an AI system. Ensure your audit trail is searchable and exportable in a format that allows you to respond to these requests. If the AI workflow informs significant decisions about individuals, ensure your process for explaining those decisions is documented.

Arto: Arto's immutable audit trail is exportable and searchable by individual, by workflow and by date range. This directly supports subject access request responses. The audit trail records what data was processed in relation to each individual's cases, which is the primary information a council needs to respond to a subject access request (SAR) relating to AI-assisted decisions.
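To make "searchable by individual, by workflow and by date range" concrete, the following is a minimal sketch of such a lookup over an in-memory log. It is an illustration under stated assumptions, not Arto's audit trail API: the `AuditEntry` fields, the identifiers and the `entries_for_sar` helper are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class AuditEntry:
    # Hypothetical audit record; field names are illustrative only.
    subject_id: str
    workflow: str
    run_date: date
    data_accessed: tuple[str, ...]


def entries_for_sar(
    entries: list[AuditEntry],
    subject_id: str,
    start: date,
    end: date,
    workflow: Optional[str] = None,
) -> list[AuditEntry]:
    """Select entries about one individual within an inclusive date range,
    optionally narrowed to a single workflow."""
    return [
        entry
        for entry in entries
        if entry.subject_id == subject_id
        and start <= entry.run_date <= end
        and (workflow is None or entry.workflow == workflow)
    ]


# Made-up log entries for illustration.
log = [
    AuditEntry("R-17", "benefits-change", date(2024, 3, 4), ("declared_income",)),
    AuditEntry("R-17", "planning-validation", date(2024, 6, 9), ("site_address",)),
    AuditEntry("R-42", "benefits-change", date(2024, 3, 5), ("household_size",)),
]

matches = entries_for_sar(log, "R-17", date(2024, 1, 1), date(2024, 12, 31))
```

Frozen dataclass entries cannot be modified after creation, which loosely mirrors the idea of an immutable record; a production audit trail would enforce immutability at the storage layer rather than in application code.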

Article 22: automated decision-making and what it actually means for AI

Article 22 of UK GDPR gives individuals the right not to be subject to a decision based solely on automated processing if that decision produces a legal effect or similarly significantly affects them. This is the provision that most councils are concerned about when deploying AI.

The key word is 'solely'. Article 22 applies to decisions that are entirely automated — no human involvement in the decision itself. It does not apply to decisions where a human reviews the AI output, applies professional judgement and makes the final determination.

When a duty social worker reviews an AI-generated triage summary and makes the threshold decision, that decision falls outside Article 22 because a human made it. A penalty notice issued by an automated system without any human review would fall within it. The distinction is not whether AI was involved, but whether a human was responsible for the decision.

Article 22 does not prohibit AI involvement in decisions. It prohibits solely automated decisions with significant effects. Well-designed public sector AI workflows, where an officer reviews the AI output and makes the final determination, are not solely automated.

When Article 22 does apply

Article 22 would apply if a council deployed an AI system that automatically issues a legal notice, automatically grants or refuses a benefit, automatically imposes a penalty or automatically takes any other action with significant legal effects — without any human review of that specific decision.

Most well-designed public sector AI workflows do not operate this way. They produce outputs — triage summaries, validation reports, draft decisions — that a qualified officer then reviews and acts upon. The officer's review is not a formality; it is the point at which human accountability is exercised.

If a council is deploying a workflow where the AI output is automatically converted into a decision or action without officer review, Article 22 must be considered carefully and legal advice sought.
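The two-part test described above (solely automated, and a legal or similarly significant effect) can be sketched as a simple screening check for a workflow inventory. This is a hedged illustration only: the `DecisionPath` type and its field names are hypothetical, and a positive result is a prompt to seek legal advice, not a legal determination.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DecisionPath:
    # Hypothetical description of how a workflow's output becomes a decision.
    officer_makes_final_decision: bool  # genuine review, not a rubber stamp
    legal_or_similarly_significant_effect: bool


def needs_article_22_review(path: DecisionPath) -> bool:
    """Flag workflows that are both solely automated and significant in effect."""
    solely_automated = not path.officer_makes_final_decision
    return solely_automated and path.legal_or_similarly_significant_effect


# An officer reviews the triage summary and makes the threshold decision.
triage_summary = DecisionPath(
    officer_makes_final_decision=True,
    legal_or_similarly_significant_effect=True,
)

# A penalty notice is issued with no human review of that specific decision.
auto_penalty_notice = DecisionPath(
    officer_makes_final_decision=False,
    legal_or_similarly_significant_effect=True,
)
```

Here `needs_article_22_review(triage_summary)` is false and `needs_article_22_review(auto_penalty_notice)` is true, matching the two examples given earlier in this section.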

Article 22 and the right to explanation

Even where Article 22 does not fully apply, individuals have a broader right under UK GDPR to obtain meaningful information about the logic involved in automated processing that significantly affects them. For AI-assisted decisions, this means councils should be able to explain, in plain language, what role the AI played in a decision and what the key factors were.

This is not a technical requirement to expose the AI model's internal workings. It is a practical requirement to be able to tell a resident or service user: this is what our AI system did, this is what it identified, and this is why the officer made the decision they did.

Does UK GDPR require resident data to stay in the UK?

UK GDPR does not automatically require all personal data to remain in the UK. However, it does impose restrictions on international transfers — moving personal data to countries outside the UK — that create significant practical compliance obligations.

Data transferred to countries outside the UK must be covered by an 'adequacy regulation' (a UK determination that the destination country provides equivalent data protection), standard contractual clauses (SCCs), or another approved transfer mechanism. For AI platforms that process personal data on servers outside the UK, these mechanisms must be in place and documented. If they are not, the transfer is unlawful.

Keeping personal data within the UK eliminates these transfer obligations entirely. Data processed and stored on UK infrastructure is not an international transfer under UK GDPR. There are no adequacy regulations to rely on, no standard contractual clauses to put in place, and no transfer mechanism documentation required. This significantly simplifies the GDPR compliance position for any AI deployment.

For councils deploying AI to process resident data — benefits records, social care data, safeguarding information — keeping that data within the UK is not just a security preference. It is the clearest route to compliance with UK GDPR's international transfer requirements, and the position most defensible to a DPO or ICO.

IN SHORT: UK GDPR does not prohibit processing outside the UK, but it imposes transfer obligations that are complex to satisfy. UK-only processing eliminates those obligations. For councils, UK-only data processing is the simplest and most defensible GDPR position.
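The transfer logic in this section can be sketched as a three-way classification. This is a simplified illustration, not a compliance tool: the country codes, mechanism labels and `transfer_status` function are hypothetical, and real transfer assessments involve more than a lookup.

```python
from enum import Enum

UK = "GB"

# Hypothetical labels for the mechanisms named in the text above.
APPROVED_MECHANISMS = frozenset(
    {"adequacy_regulation", "standard_contractual_clauses", "other_approved_mechanism"}
)


class TransferStatus(Enum):
    NOT_A_TRANSFER = "processed and stored in the UK"
    COVERED = "international transfer with a documented mechanism"
    UNLAWFUL = "international transfer with no documented mechanism"


def transfer_status(processing_country: str, documented_mechanisms: set[str]) -> TransferStatus:
    """Classify a hosting arrangement using the simplified rules described above."""
    if processing_country == UK:
        # UK-only processing is not an international transfer.
        return TransferStatus.NOT_A_TRANSFER
    if documented_mechanisms & APPROVED_MECHANISMS:
        return TransferStatus.COVERED
    return TransferStatus.UNLAWFUL


uk_hosted = transfer_status("GB", set())
overseas_with_sccs = transfer_status("US", {"standard_contractual_clauses"})
overseas_undocumented = transfer_status("US", set())
```

The UK-hosted case returns early without consulting any mechanism, which reflects the point made above: UK-only processing removes the transfer question rather than answering it.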

Data processed through Arto is hosted on AWS London infrastructure. It never leaves the UK and is never used to train AI models. This means Arto deployments satisfy the UK GDPR data residency position described above by default, and no international transfer mechanisms are required.

How Arto satisfies UK GDPR requirements for AI deployments

Two obligations remain with the deploying organisation regardless of platform: establishing the legal basis for processing and completing the DPIA. Arto provides the documentation framework, and the Assurance Designer pre-populates the technical sections. The DPO's assessment and the senior responsible officer's sign-off remain with the organisation.

Arto satisfies the platform-level UK GDPR obligations for AI workflows: data never leaves the UK and is never used to train AI models, removing international transfer obligations. KSB profile controls restrict each AI agent's data access to what is necessary for the defined purpose. The audit trail records every data access operation with a timestamp, supporting subject access requests. The Assurance Designer pre-populates DPIA evidence for Arto Supported Flows.

Where to go from here

Get DPO sign-off on your deployment

The DPO approval guide sets out exactly what a DPO needs to approve an AI deployment and how to sequence the submission.

Getting DPO sign-off

Understand data hosting and security

How Arto's UK-only hosting and ISO 27001 certification satisfy the technical security requirements alongside UK GDPR.

Data security

See all four governance frameworks

How UK GDPR, ISO 42001, the OECD Principles and the AI Playbook work together as Arto's governance foundation.

AI Governance Standards