What is the AI governance foundation and why does it matter?

AI governance in the public sector is not primarily a compliance exercise. It is a structural question: what is your AI deployment built on?

A compliance approach to AI governance asks: which rules must we not break? It produces periodic audits, post-deployment reviews, and a checklist of prohibited actions. It is backward-looking. It tells you whether something that has already happened was permissible.

A foundation approach to AI governance asks: what principles and frameworks does this deployment stand on? It produces architecture decisions, platform choices, and operational structures that make safe, accountable AI possible before any workflow runs. It is forward-looking. It makes compliance a natural output rather than a separate layer.

The four frameworks that form the AI governance foundation for the UK public sector (UK GDPR, ISO 42001, the OECD AI Principles, and the UK Government AI Playbook) were not designed as a compliance checklist. They were designed as architecture: the structures, processes, and principles that make trustworthy AI possible at organisational scale. Building your AI deployments on these foundations means your organisation can deploy AI with confidence, defend its decisions when challenged, and demonstrate accountability to residents, elected members, and regulators.

IN SHORT: AI governance is an architectural question, not a compliance question. The four frameworks that form the foundation cover data protection, AI management systems, ethical principles, and operational standards. Together they give organisations the structure to deploy AI that is safe, accountable, and sustainable.

The frameworks UK public sector AI must be built on

UK GDPR - Data protection

What it covers: How organisations process, store and protect personal data. Legal bases for processing. Individual rights. Data minimisation. International transfers. Data Protection Impact Assessments.

Why it matters for public sector AI: Almost every AI deployment in the public sector processes personal data about residents. UK GDPR defines the legal conditions under which that processing is lawful, the safeguards that must be in place, and the rights individuals retain over their data. No local government AI deployment that processes personal data can lawfully operate outside UK GDPR.

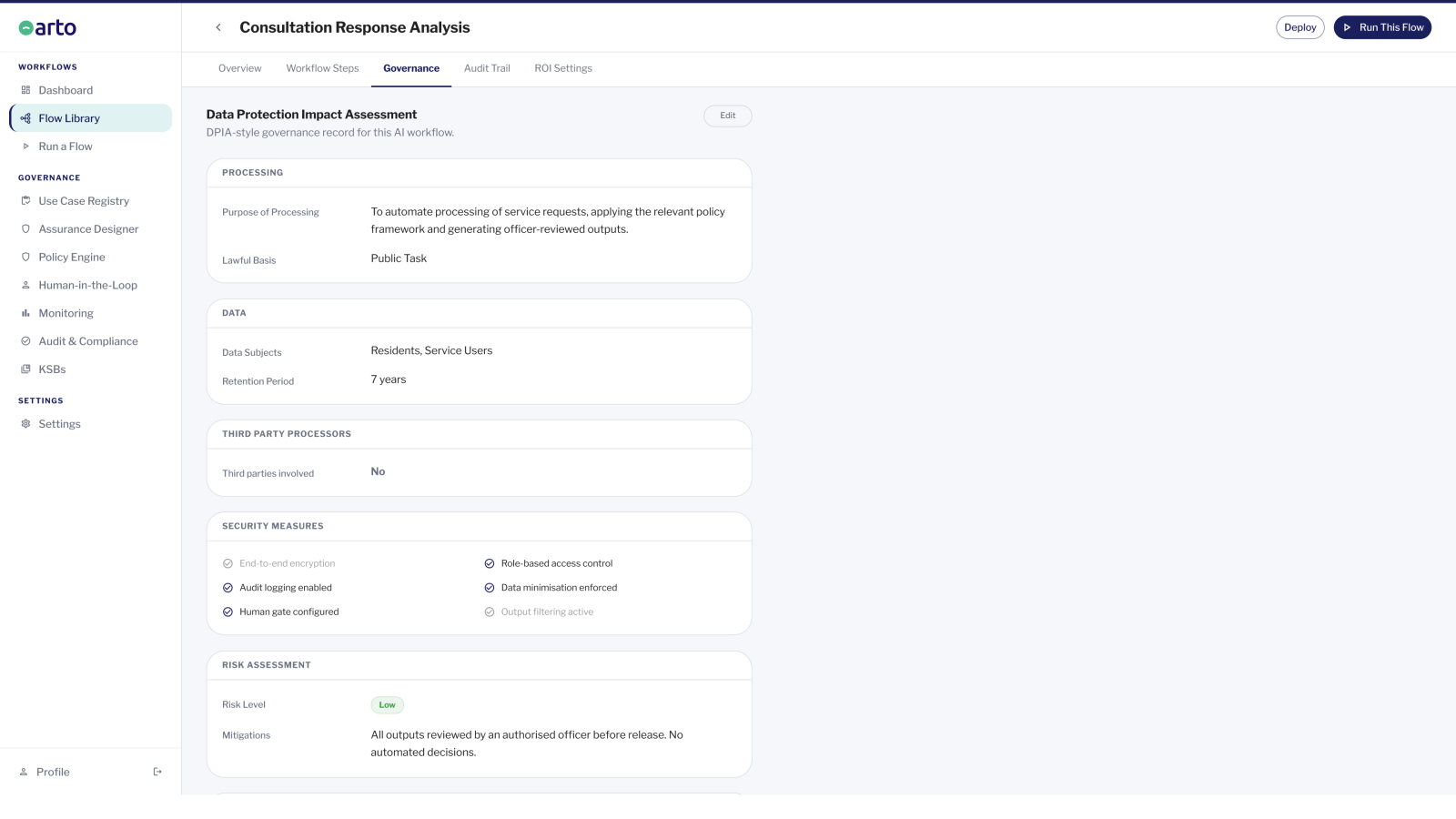

Arto implements this by: AWS London hosting (data never leaves the UK). No-training commitment (resident data not used to train AI models). Assurance Designer pre-populates Data Protection Impact Assessment (DPIA) supplementary evidence for every Arto Supported Flow. Audit trail searchable by individual for subject access requests.

ISO 42001 - AI management systems

What it covers: The international standard for AI management systems. Governance structures, risk assessment, AI-specific policy requirements, human oversight, transparency, accountability, and continual improvement of AI systems.

Why it matters for public sector AI: ISO 42001 is the framework that specifically addresses AI as a management challenge — not data protection, not general IT security, but the governance of AI systems themselves. It defines what an AI management system looks like: the policies, processes, roles, and oversight mechanisms that make AI safe to deploy at organisational scale.

Arto implements this by: Human-in-the-loop gates enforce meaningful human oversight at every decision point. AI reasoning captured in the audit trail for every run. Assurance Designer pre-populates the AI management documentation. Pre-populated assurance case demonstrates alignment with the AI management framework.

OECD AI Principles - Responsible AI at an international standard

What it covers: Five principles for responsible AI: inclusive growth and sustainable development; respect for the rule of law and human rights; transparency and explainability; robustness, security and safety; and accountability. Adopted by more than 40 countries, including the UK and several non-members of the OECD.

Why it matters for public sector AI: The OECD AI Principles provide the ethical and values-based dimension of AI governance that UK GDPR and ISO 42001 do not directly address. They define what AI that respects human rights, democratic values, and public trust looks like in practice. For public sector organisations accountable to residents and elected members, these principles are the ethical architecture of trustworthy AI.

Arto implements this by: KSB-mapped AI agents operate within defined professional role boundaries, respecting human autonomy and the limits of AI's appropriate scope. Transparent audit trail makes AI reasoning visible and explainable. Human-in-the-loop (HITL) gates preserve human control over consequential decisions. The pre-populated Assurance Designer demonstrates accountability through the deployment record.

UK Government AI Playbook - 10 principles for operational AI in government

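What it covers: The UK Government's 10 principles for using AI in government: know what AI is and what its limitations are; use AI lawfully, ethically and responsibly; know how to use AI securely; keep meaningful human control at the right stages; understand how to manage the full AI life cycle; use the right tool for the job; be open and collaborative; work with commercial colleagues from the start; have the skills and expertise needed to implement and use AI; and use the principles alongside your organisation's policies, with the right assurance in place.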

Why it matters for public sector AI: The AI Playbook translates the higher-level frameworks into operational principles specifically designed for UK public sector contexts. It addresses the practical governance questions that arise when deploying AI in public services: what must officers be told about AI assistance, when must they be able to override AI recommendations, how must AI-assisted decisions be communicated to residents.

Arto implements this by: Pre-built workflows are designed around the specific operational contexts the Playbook principles address: human oversight enforced, AI role defined, audit transparency built in. The pre-populated Assurance Designer maps workflow governance to the Playbook principles.

WCAG 2.2 - Accessible AI for all residents

Web Content Accessibility Guidelines (WCAG) 2.2 defines the accessibility standard that public sector digital services must meet. For AI deployments that affect how residents interact with council services, through digital self-service, automated responses, or AI-assisted communications, WCAG compliance ensures those services are accessible to residents with disabilities.

WCAG 2.2 is embedded at Arto's platform level, not added as a per-workflow configuration. Every resident-facing element of an Arto deployment meets WCAG 2.2 standards. Organisations do not need to configure or verify accessibility separately for each workflow; it is a structural property of the platform.

Accessibility in AI deployments has a specific dimension beyond the standard WCAG requirements: AI outputs that are ambiguous, unexplained, or inaccessible to residents with communication needs can create discrimination risks. The explainability and transparency requirements that run through the OECD AI Principles and the UK AI Playbook also serve accessibility: clear, plain-English outputs that residents can understand and challenge are both more accessible and more accountable.

How managing AI risk differs from managing AI compliance

Compliance and risk management sound similar but operate differently and produce different outcomes.

For AI in the public sector, the difference is significant. A compliance-only approach to AI governance means your organisation demonstrates that it met the requirements at the point of assessment. A risk management approach means your organisation can demonstrate, for any specific decision, at any point in time, that governance was applied, that oversight was exercised, and that the process was appropriate. The audit trail is the risk management record. The governance certificate is the risk management confirmation. These are not compliance artefacts. They are operational evidence that the governance foundation is functioning.

- Compliance asks: does this deployment meet the current requirements? It is assessed at a point in time, typically before go-live and at periodic review points. It produces a binary answer: compliant or non-compliant. It is reactive. When requirements change, compliance must be re-assessed. When something goes wrong, compliance work begins.

- Risk management asks: what could go wrong, how likely is it, what would be the impact, and what have we done to reduce the probability and severity of harm? It is continuous. It does not produce a binary answer; it produces a risk register that evolves as the deployment operates. It is proactive. When something goes wrong, the risk register already contains the scenario and the response.
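The evolving risk register described above can be sketched as a small data structure. This is an illustration only: the 1 to 5 likelihood and impact scales, the likelihood-times-impact score, and the names `Risk` and `RiskRegister` are assumptions made for the example, not something prescribed by any of the frameworks named here.

```python
from dataclasses import dataclass, field


@dataclass
class Risk:
    """One entry in a hypothetical risk register."""
    scenario: str     # what could go wrong
    likelihood: int   # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int       # assumed scale: 1 (minor) to 5 (severe)
    mitigation: str   # what has been done to reduce probability or severity
    response: str     # what happens if the scenario occurs

    @property
    def score(self) -> int:
        # Simple likelihood x impact scoring; a real scheme may differ.
        return self.likelihood * self.impact


@dataclass
class RiskRegister:
    risks: list[Risk] = field(default_factory=list)

    def add(self, risk: Risk) -> None:
        self.risks.append(risk)

    def reassess(self, scenario: str, likelihood: int, impact: int) -> None:
        """Update an existing risk as the deployment operates."""
        for risk in self.risks:
            if risk.scenario == scenario:
                risk.likelihood, risk.impact = likelihood, impact
                return
        raise KeyError(scenario)

    def ranked(self) -> list[Risk]:
        """Return risks with the highest score first."""
        return sorted(self.risks, key=lambda r: r.score, reverse=True)


register = RiskRegister()
register.add(Risk("Officer accepts AI output without review", 3, 4,
                  "Human oversight gate before any action",
                  "Pause the workflow and review affected cases"))
register.add(Risk("Workflow reads data outside its scope", 2, 5,
                  "Data scope check on every run",
                  "Notify the DPO"))

# The register changes over time: here one risk is re-scored after mitigation.
register.reassess("Officer accepts AI output without review", 2, 4)
top = register.ranked()[0]
```

Unlike a compliant or non-compliant flag, the register holds a ranked, changing list, and each entry already carries its planned response.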

What happens when governance is not the foundation

AI deployments that do not start with a governance foundation tend to encounter the same set of problems, in a predictable sequence.

The deployment is blocked at approval stage

When the DPO, monitoring officer, or IT security team receives a proposal for AI deployment without a governance foundation, they cannot approve it. They cannot identify the lawful basis for processing. They cannot assess whether Article 22 of UK GDPR, which restricts solely automated decision-making, applies. They cannot confirm that data will not leave the UK. They cannot verify that human oversight is built into the workflow. The project stalls, often indefinitely, while the governance case is assembled retrospectively, which is much harder than building it in from the start.

The deployment proceeds but creates legal exposure

If an AI deployment proceeds without appropriate governance, the organisation carries legal exposure from the first workflow execution. Every decision informed by AI without a recorded human oversight step is potentially vulnerable to challenge on public law grounds. Every resident data processing activity without a lawful basis is a potential UK GDPR breach. Every AI-assisted decision without a record of how it was reached is a potential subject access request that cannot be fulfilled.

A complaint triggers an investigation the organisation cannot defend

When a resident complains, or when a regulatory body investigates, the question is not whether the outcome was correct — it is whether the process was appropriate. An organisation that deployed AI without a governance foundation cannot demonstrate: what information the AI processed; what it recommended; who reviewed the recommendation; what the officer decided and why; or that the process was consistent across similar cases. An organisation with a governance foundation can answer all of these questions from the audit trail.
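The questions an investigation asks map closely onto the fields of an audit record. The sketch below assumes a hypothetical `AuditRecord` shape; the field names are illustrative and are not Arto's actual schema. It covers the per-case questions only; consistency across similar cases would need a comparison across many records.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AuditRecord:
    """Hypothetical audit record; field names are illustrative only."""
    case_id: str
    resident_id: str
    inputs: dict                      # what information the AI processed
    recommendation: str               # what the AI recommended
    reviewer: Optional[str] = None    # who reviewed the recommendation
    decision: Optional[str] = None    # what the officer decided
    rationale: Optional[str] = None   # why the officer decided it


def unanswered_questions(record: AuditRecord) -> list[str]:
    """List the investigation questions this record cannot answer."""
    checks = {
        "what information the AI processed": bool(record.inputs),
        "what the AI recommended": bool(record.recommendation),
        "who reviewed the recommendation": bool(record.reviewer),
        "what the officer decided": bool(record.decision),
        "why the officer decided it": bool(record.rationale),
    }
    return [question for question, answered in checks.items() if not answered]


def records_for_resident(trail: list[AuditRecord], resident_id: str) -> list[AuditRecord]:
    """Find every record about one individual, e.g. for a subject access request."""
    return [record for record in trail if record.resident_id == resident_id]


complete = AuditRecord("C-1", "R-100", {"form": "housing application"},
                       "Refer to officer", "j.smith", "Referred",
                       "Matches policy criteria")
unreviewed = AuditRecord("C-2", "R-200", {"form": "housing application"}, "Approve")
```

Here `unanswered_questions(unreviewed)` reports the three officer-related gaps, which is the position an organisation is in when no oversight step was recorded.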

Ofsted, the ICO, or the Local Government and Social Care Ombudsman identifies a systemic failure

Regulators and inspectorates have become more sophisticated about AI in public services. Ofsted asks about AI use in children's services and specifically about consistency and oversight. The ICO investigates AI deployments that process personal data without appropriate safeguards. The Local Government and Social Care Ombudsman upholds complaints about AI-influenced decisions that residents cannot understand or challenge. An organisation without a governance foundation is exposed across all three regulatory environments.

How Arto embeds governance frameworks automatically

Most AI platforms treat governance as a layer that organisations add after deployment. They provide the AI capability; the organisation provides the governance. This means every deployment requires a separate governance exercise: assess the risks, configure the safeguards, document the process, obtain approval. When governance is added after the fact, it is incomplete, inconsistent, and expensive to maintain.

Arto was built the other way around. The frameworks are not features that organisations configure. They are the architecture of the platform. When an organisation deploys an Arto Supported Flow, the governance foundation is already in place. The DPIA supplementary evidence is pre-populated. The human oversight gates are pre-configured. The audit trail is automatically generated. The organisation reviews and confirms; it does not build from scratch.

How Arto embeds governance in every Arto Supported Flow

Every Arto Supported Flow is designed and built to align with key standards including ISO 27001, ISO 42001 and UK GDPR. An assurance case aligned to the 10 GDS AI Playbook principles is pre-populated and ready to deploy. Minimal configuration is required from the organisation.

The governance checks listed at the end of this section are not periodic audits. They are not optional configurations. They run on every single execution. Human oversight gates are pre-configured at decision points. The audit trail is automatically generated on every run: timestamped, immutable and exportable. The Assurance Designer pre-populates the documentation required for DPO review, DPIA supplementary evidence and IT security assessment.
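One common way to make a timestamped audit trail tamper-evident is to chain entries by hash, so that altering any earlier entry invalidates everything after it. The sketch below illustrates that general technique with Python's standard `hashlib` and `json` modules. It is an assumption made for illustration and does not describe how Arto's audit trail is actually implemented; the function names and entry fields are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so identical content always hashes the same.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(trail: list[dict], event: dict) -> dict:
    """Append a timestamped entry linked to the hash of the previous entry."""
    body = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "event": event,
        "prev_hash": trail[-1]["hash"] if trail else GENESIS,
    }
    entry = {**body, "hash": _digest(body)}
    trail.append(entry)
    return entry


def verify(trail: list[dict]) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev = GENESIS
    for entry in trail:
        body = {key: entry[key] for key in ("timestamp", "event", "prev_hash")}
        if entry["prev_hash"] != prev or entry["hash"] != _digest(body):
            return False
        prev = entry["hash"]
    return True


def export(trail: list[dict]) -> str:
    """Serialise the trail, e.g. to hand to a reviewer or regulator."""
    return json.dumps(trail, indent=2)


trail: list[dict] = []
append_entry(trail, {"stage": "ai_output", "summary": "Recommend referral"})
append_entry(trail, {"stage": "officer_review", "officer": "j.smith", "decision": "Referred"})
```

A hash chain detects tampering rather than preventing it; storage-level controls such as write-once storage would be a separate measure.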

This is what 'governance-first' means in practice. Not that governance is the most important feature of the platform, though it is, but that governance is prior. It is decided before any capability is deployed. It is structural, not configurational. It cannot be bypassed or forgotten. It is as much a part of how the platform works as the AI itself.

Data scope check - UK GDPR

What it verifies - The workflow only processed data within the KSB-defined scope for this workflow. No out-of-scope data was accessed.

Human oversight gate - ISO 42001 / UK AI Playbook

What it verifies - A named officer reviewed the AI output and recorded their decision before any action was taken. The officer's decision is attributed and timestamped.

Audit trail completeness - UK GDPR / ISO 42001

What it verifies - The full audit record for this run is complete: input, AI processing, output, officer review, and decision. Nothing is missing.

Governance framework alignment - ISO 42001 / OECD AI Principles

What it verifies - The workflow execution followed the governance framework configured in the Assurance Designer for this workflow. No deviations from the specified process.

Transparency and explainability - OECD AI Principles / UK AI Playbook

What it verifies - The AI output is documented in a form that can be explained to the resident affected, to a regulatory body, or in legal proceedings. The reasoning chain is recorded.

Accessibility and communication - WCAG 2.2

What it verifies - Any output directed at residents meets WCAG 2.2 accessibility standards. Communications are clear, plain-English, and accessible to residents with disabilities.
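Several of the checks above are mechanical enough to sketch in code. The example below is a hedged illustration of the first three (data scope, human oversight gate, audit trail completeness). The `Run` structure, its field names and the stage names are hypothetical and are not Arto's actual data model.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import Optional

# Assumed stage names, taken from the audit trail completeness description.
REQUIRED_STAGES = ("input", "ai_processing", "output", "officer_review", "decision")


@dataclass
class Run:
    """Hypothetical record of one workflow execution."""
    allowed_fields: set[str]                     # data scope defined for the workflow
    accessed_fields: set[str]                    # data the run actually touched
    reviewer: Optional[str] = None               # named officer, if any
    reviewed_at: Optional[datetime] = None
    action_taken_at: Optional[datetime] = None   # None if no action has been taken
    audit: dict = field(default_factory=dict)    # stage name -> recorded content


def data_scope_ok(run: Run) -> bool:
    # No out-of-scope data: accessed fields must be a subset of the allowed scope.
    return run.accessed_fields <= run.allowed_fields


def oversight_ok(run: Run) -> bool:
    # A named officer must have recorded a review before any action was taken.
    if run.reviewer is None or run.reviewed_at is None:
        return False
    return run.action_taken_at is None or run.reviewed_at <= run.action_taken_at


def audit_complete(run: Run) -> bool:
    # Every required stage must be present in the audit record.
    return all(run.audit.get(stage) is not None for stage in REQUIRED_STAGES)


def run_checks(run: Run) -> dict[str, bool]:
    return {
        "data_scope": data_scope_ok(run),
        "human_oversight": oversight_ok(run),
        "audit_completeness": audit_complete(run),
    }


good = Run({"name", "address"}, {"address"}, "j.smith",
           datetime(2025, 1, 6, 9, 0), datetime(2025, 1, 6, 9, 5),
           {stage: "recorded" for stage in REQUIRED_STAGES})
bad = Run({"name"}, {"name", "health_record"}, audit={"input": "recorded"})
```

Calling `run_checks(good)` passes all three checks, while `run_checks(bad)` fails all three: it read a field outside its scope, has no named reviewer, and is missing four audit stages.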