This is not a theoretical requirement. Every public sector AI deployment is subject to scrutiny from the Information Commissioner's Office, internal audit, procurement assessors, elected members and the public. An organisation that cannot demonstrate how it governed an AI decision is exposed to challenge. An organisation that can demonstrate it has a structured, documented governance process for every deployment is in a significantly stronger position.

The steps below set out how to build that process. They apply to any AI deployment in any public sector context, whether you are introducing a single workflow or deploying AI across multiple service areas.

What applying AI governance actually means in practice

Governance in AI is often described in terms of principles: transparency, accountability, fairness, human oversight. These principles matter. But applying governance is an operational activity, not a philosophical one.

Governance applied in this way is not a compliance burden placed on top of a deployment. It is the operational framework within which the deployment runs. When it is built into the deployment from the start, it produces no additional workload. When it is added afterwards, it produces significant friction.

In practice, applying AI governance means the following:

Before a deployment begins:

establishing what data will be processed, what AI model will be used, what decisions the AI will be involved in, who is responsible for each decision, what oversight mechanisms are in place and what documentation will be maintained.

During a deployment:

maintaining an audit trail of every action taken, applying the pre-populated governance controls for each deployment, enforcing human oversight gates at the points where decisions require officer approval, and generating a governance record for each run.

After a deployment:

reviewing the governance record against the original policy, identifying any deviations, updating the risk assessment and maintaining a complete historical record that is available for review at any time.
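The "during a deployment" activities can be made concrete with a small sketch. The Python below is illustrative only: `GovernanceRecord`, `AuditEntry`, `oversight_gate` and the officer name are hypothetical and do not describe any particular platform. It assumes that each run has one governance record, that every action is appended to it, and that an output cannot pass a gate without a named officer.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AuditEntry:
    """One immutable line in the audit trail."""
    timestamp: str
    actor: str   # a named officer, or "system" for automated actions
    action: str
    detail: str


@dataclass
class GovernanceRecord:
    """Governance record for a single run (hypothetical structure)."""
    run_id: str
    entries: list[AuditEntry] = field(default_factory=list)

    def log(self, actor: str, action: str, detail: str = "") -> None:
        stamp = datetime.now(timezone.utc).isoformat()
        self.entries.append(AuditEntry(stamp, actor, action, detail))


class ApprovalRequired(Exception):
    """Raised when an output reaches a gate without officer approval."""


def oversight_gate(record: GovernanceRecord, output: str,
                   approved_by: Optional[str]) -> str:
    """Release an AI output only if a named officer has approved it."""
    if not approved_by:
        record.log("system", "gate_blocked", output)
        raise ApprovalRequired("officer approval is required before this output is actioned")
    record.log(approved_by, "gate_approved", output)
    return output


# Illustrative run: the officer name is made up.
record = GovernanceRecord(run_id="run-001")
record.log("system", "ai_output_generated", "draft response")
released = oversight_gate(record, "draft response", approved_by="J. Smith")
```

Because blocked attempts are logged as well as approvals, the record produced during the run is the same one that is reviewed against the original policy afterwards.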

How to apply governance to an AI deployment: seven steps

Step 1: Define the scope and purpose of the AI deployment

Before any tool is selected or any data is connected, document what the deployment is for. Specify the service area, the problem being addressed, the type of decision the AI will be involved in and the expected output. This document becomes the baseline against which all subsequent governance assessments are made.
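One way to keep the scope document usable as a baseline is to hold it as structured data rather than free text. The sketch below is an assumption about how that might look, not a prescribed format: the `ScopeDocument` class, the `missing_fields` helper and the example values are all hypothetical. The four fields are the four items named in this step.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class ScopeDocument:
    """The four items a scope document should specify (hypothetical structure)."""
    service_area: str
    problem_addressed: str
    decision_type: str
    expected_output: str


def missing_fields(scope: ScopeDocument) -> list[str]:
    """Return the names of any fields that have been left blank."""
    return [f.name for f in fields(scope) if not getattr(scope, f.name).strip()]


# Illustrative values only.
scope = ScopeDocument(
    service_area="Housing repairs",
    problem_addressed="Backlog in triaging repair requests",
    decision_type="",  # deliberately left blank to show the check
    expected_output="Suggested priority for officer review",
)
print(missing_fields(scope))  # ['decision_type']
```

A check like this can run before the compliance assessment in Step 2, so that an incomplete scope is caught before anything depends on it.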

Step 2: Identify the applicable compliance requirements

Using the scope document from Step 1, identify which compliance requirements apply to this specific deployment. This includes the data protection lawful basis under UK GDPR, the DPIA requirement (mandatory for high-risk AI processing), the relevant WCAG accessibility requirements and the applicable principles from ISO 42001 and the UK Government AI Playbook.

Step 3: Establish the accountability structure

Define who is responsible for each element of the deployment. Accountability in public sector AI does not transfer to the AI system or to the platform provider. Every decision must be attributable to a named officer. Document the accountability structure before deployment begins.
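The rule that every decision must be attributable to a named officer can be checked mechanically before deployment. The sketch below is a minimal illustration under stated assumptions: the accountability structure is held as a simple mapping from responsibility to owner, and the function name, the placeholder list and the officer names are all hypothetical.

```python
# Values that do not count as a named officer (an assumed, non-exhaustive list).
NOT_A_NAMED_OFFICER = {"", "tbc", "ai", "the ai system", "platform provider", "vendor"}


def unassigned_responsibilities(structure: dict[str, str]) -> list[str]:
    """Return the responsibilities that are not attributed to a named officer."""
    return sorted(
        responsibility
        for responsibility, owner in structure.items()
        if owner.strip().lower() in NOT_A_NAMED_OFFICER
    )


# Illustrative structure: the names are made up.
structure = {
    "overall accountability": "A. Patel",
    "DPIA approval": "R. Jones",
    "security sign-off": "TBC",
    "output approval at oversight gate": "platform provider",
}
gaps = unassigned_responsibilities(structure)
print(gaps)  # ['output approval at oversight gate', 'security sign-off']
```

An empty result is a reasonable precondition for moving on to Step 4. A non-empty result shows exactly which responsibilities still need an owner.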

Step 4: Configure and verify the governance controls

Ensure that the governance controls required by the compliance frameworks are in place and functioning correctly before any live data is processed. This includes audit trail capture, human-in-the-loop gates at the appropriate decision points, data minimisation controls and the compliance check configuration.

Step 5: Complete the documentation for approval

Assemble the documentation required for internal approval. This typically includes the DPIA, the equality impact assessment, the IT security assessment, the information governance sign-off and the accountability structure document. Include the Assurance Designer record and audit trail from the test run as supplementary evidence.

Step 6: Obtain approval and record the decision

Submit the completed documentation to the relevant approval body. In most public sector organisations this will include the DPO, the IT security team, the information governance lead and, depending on the nature of the deployment, senior leadership or a scrutiny committee. Record the approval decision, who granted it, on what basis and on what date. This record must be preserved.
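The four facts this step asks for (the decision, who granted it, on what basis and on what date) map naturally onto a small immutable record. The sketch below is one possible shape, not a required one: `ApprovalDecision`, `to_json` and the example values are hypothetical. The dataclass is frozen so that a decision cannot be silently edited once recorded.

```python
import json
from dataclasses import asdict, dataclass
from datetime import date


@dataclass(frozen=True)
class ApprovalDecision:
    """A preserved record of one approval decision (hypothetical structure)."""
    approval_body: str
    decision: str      # e.g. "approved", "approved with conditions", "rejected"
    granted_by: str
    basis: str
    decided_on: date


def to_json(decision: ApprovalDecision) -> str:
    """Serialise the decision for long-term storage alongside the audit trail."""
    payload = asdict(decision)
    payload["decided_on"] = decision.decided_on.isoformat()
    return json.dumps(payload, sort_keys=True)


# Illustrative values only.
decision = ApprovalDecision(
    approval_body="Data Protection Officer",
    decision="approved with conditions",
    granted_by="R. Jones",
    basis="DPIA reviewed; retention schedule to be confirmed before go-live",
    decided_on=date(2025, 1, 15),
)
stored = to_json(decision)
```

Attempting to change a field after creation raises `dataclasses.FrozenInstanceError`, which is a simple way to make "this record must be preserved" hold within the code itself. Preservation in storage still needs its own controls.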

Step 7: Maintain ongoing governance through the deployment lifecycle

Governance is not a one-time activity completed at the point of approval. The governance record must be maintained for the full operational life of the deployment. This means reviewing the audit trail regularly, updating the DPIA if the processing changes, reassessing compliance when the AI model or data sources change, and maintaining the accountability structure as personnel change.
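The lifecycle triggers in this step can be expressed as a lookup from the type of change to the governance action it requires. The sketch below is illustrative: the change labels and the `required_actions` function are hypothetical names, while the actions themselves are the ones listed in this step.

```python
# Change type -> governance action it triggers (labels are assumed names).
REVIEW_ACTIONS = {
    "processing_changed": "update the DPIA",
    "model_changed": "reassess compliance",
    "data_source_changed": "reassess compliance",
    "personnel_changed": "update the accountability structure",
}


def required_actions(changes: set[str]) -> list[str]:
    """Return the governance actions due, given the changes since the last review."""
    actions = {"review the audit trail"}  # the regular review always applies
    for change in changes:
        if change not in REVIEW_ACTIONS:
            raise ValueError(f"unrecognised change type: {change}")
        actions.add(REVIEW_ACTIONS[change])
    return sorted(actions)


print(required_actions({"model_changed", "data_source_changed"}))
# ['reassess compliance', 'review the audit trail']
```

Unrecognised change types raise an error rather than being ignored, on the assumption that an unclassified change should be assessed by a person instead of passing through unreviewed.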

Who is responsible for AI governance in a public sector organisation?

Governance responsibility in public sector AI is distributed across several roles. In smaller organisations some of these roles may be held by the same person. In larger organisations they may be held by different teams with different reporting lines. The important thing is not the organisational structure but that each responsibility is assigned to a named individual before deployment begins.

Understanding who owns each element of the process is essential before a deployment begins.

Senior Responsible Officer

Overall accountability for the deployment. Signs off the accountability structure. Has authority to pause or stop the deployment if governance requirements are not met. Accountable to leadership for the outcomes.

Data Protection Officer

Provides expert advice on UK GDPR requirements. Reviews and approves the DPIA. Advises on lawful basis, data minimisation and Article 22 obligations. Must be consulted before high-risk processing begins.

IT Security Lead

Assesses the technical security of the deployment. Reviews the AI model, data access controls, infrastructure security and incident response procedures. Provides security sign-off as part of the approval process.

Information Governance Lead

Ensures the deployment meets the organisation's information governance policies. Reviews data handling, retention and disposal requirements. Coordinates the information governance approval.

Service Lead

Defines the problem the deployment addresses. Provides operational context for the DPIA and compliance assessments. Champions the deployment through the approval process. Accountable for the service outcomes.

Approving Officer / Human in the Loop

Reviews AI outputs at the designated oversight gates before decisions are taken or actioned. Provides the digital sign-off recorded in the audit trail. Accountable for the decisions they approve.

How to get a governed AI deployment approved internally

The most common reason AI deployments stall in approval is not that the deployment is non-compliant. It is that the documentation provided to the approval body does not answer the questions the approval body has. Governance teams need specific evidence, not general assurances.

Presenting this evidence in a single, structured governance package reduces the number of approval cycles required. Each approval body receives the documentation it needs in the format it expects. Conditions and requirements are recorded and actioned before the next body reviews the submission.

Evidence requirements for each approval body

- For the DPO: a completed DPIA that covers the lawful basis, the data minimisation approach, the DSAR process for AI-processed data, and the accountability structure for AI-assisted decisions. Where the platform generates a governance certificate automatically, include it as supplementary evidence.

- For IT security: documentation of the AI model's data handling, the hosting environment (for Arto: AWS London, ISO 27001 certified), the encryption approach (in transit and at rest), the access control model and the incident response procedure.

- For information governance: the data flow map, the retention and disposal schedule for AI-processed data, and the governance certificate showing that data minimisation controls are enforced at the platform level.

- For senior leadership or scrutiny committees: the accountability structure document, the audit trail architecture, the human-in-the-loop gate configuration and, where available, evidence of measurable outcomes from comparable deployments.
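The evidence requirements above can be turned into a completeness check on the governance package before it is submitted. The sketch below is a simplified illustration: the document labels are shortened versions of the items listed above, optional items (the governance certificate for the DPO, outcome evidence for leadership) are left out, and `REQUIRED_EVIDENCE` and `missing_evidence` are hypothetical names.

```python
# Required evidence per approval body, taken from the list above (labels shortened).
REQUIRED_EVIDENCE = {
    "DPO": ["DPIA"],
    "IT security": [
        "AI model data handling documentation",
        "hosting environment",
        "encryption approach",
        "access control model",
        "incident response procedure",
    ],
    "Information governance": [
        "data flow map",
        "retention and disposal schedule",
        "governance certificate",
    ],
    "Senior leadership or scrutiny committee": [
        "accountability structure document",
        "audit trail architecture",
        "human-in-the-loop gate configuration",
    ],
}


def missing_evidence(package: set[str]) -> dict[str, list[str]]:
    """For each approval body with gaps, list the documents still missing."""
    gaps = {}
    for body, required in REQUIRED_EVIDENCE.items():
        missing = [doc for doc in required if doc not in package]
        if missing:
            gaps[body] = missing
    return gaps


# Illustrative package that is ready for the DPO and information governance only.
package = {
    "DPIA",
    "data flow map",
    "retention and disposal schedule",
    "governance certificate",
    "accountability structure document",
}
gaps = missing_evidence(package)
```

Here `gaps` contains entries for IT security and for senior leadership, and none for the DPO or information governance. Running a check like this before each submission is one way to cut the number of approval cycles.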

What Arto handles automatically

The governance process described above is the correct approach to AI deployment in the UK public sector. When followed, it produces compliant, auditable deployments that can withstand scrutiny from any approval body. The challenge is that it requires significant time, expertise and coordination to execute correctly.

Arto is built to carry the majority of that burden at the platform level.

Identifying compliance requirements (step 2) is addressed by Arto's platform architecture. Every workflow created on Arto inherits alignment to UK GDPR, ISO 42001, WCAG 2.2, the OECD AI Principles and the UK Government AI Playbook automatically. The compliance framework is not configured per deployment. It is the foundation on which every deployment runs.

Configuring and verifying governance controls (step 4) is addressed by Arto's built-in governance controls. Arto Supported Flows are designed and built to align with key standards including ISO 27001, ISO 42001 and UK GDPR. Human-in-the-loop gates are enforced at the designated decision points. Data minimisation controls are applied at the platform level. A test environment allows every workflow to be verified before it touches live data.

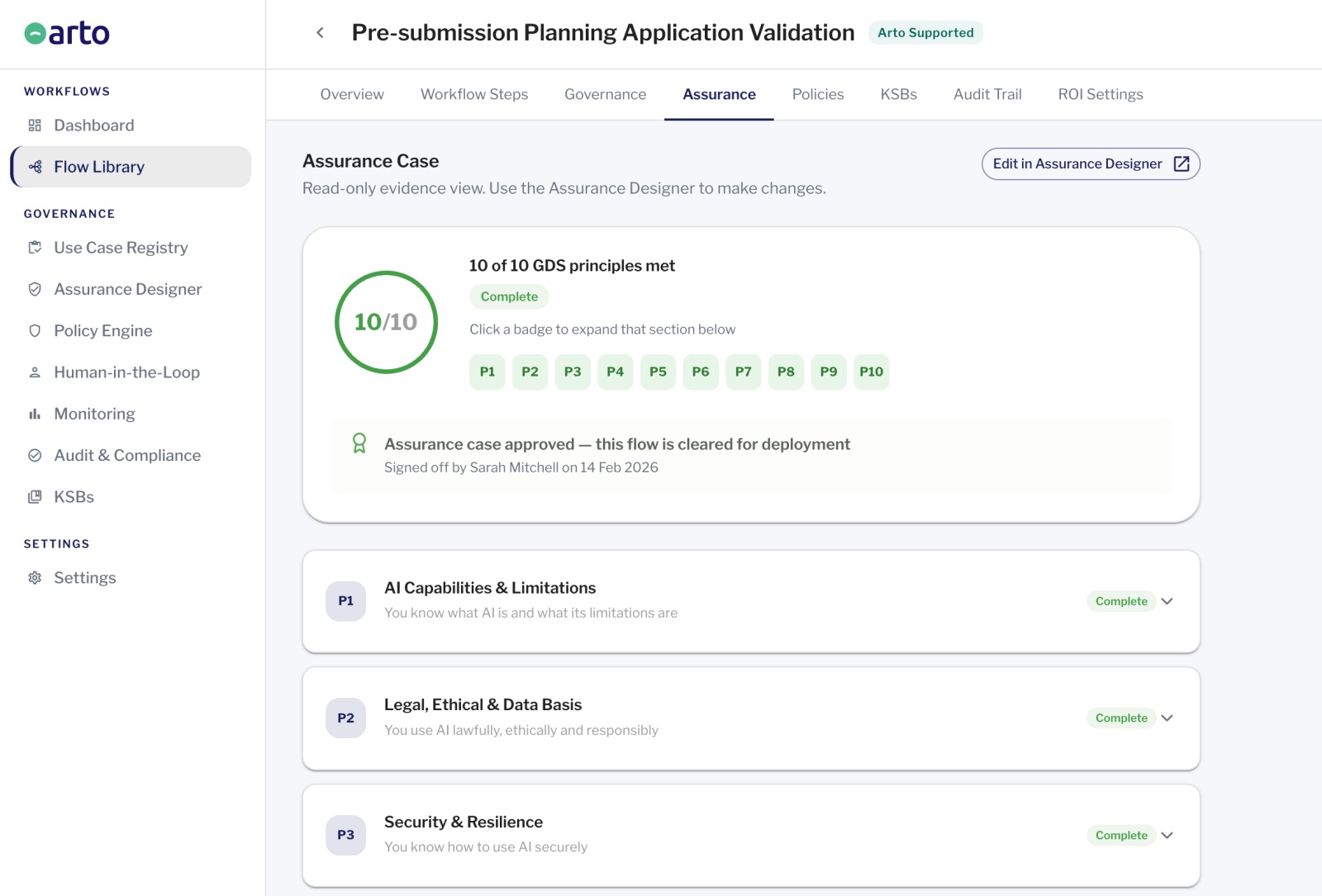

Completing the documentation for approval (step 5) is addressed by Arto's automatic documentation generation. The Assurance Designer pre-populates the documentation required for DPIA supplementary evidence, IT security assessment and information governance sign-off. The audit trail captures every action from trigger to output. Together, these documents constitute the primary evidence for those three reviews.

Obtaining approval and recording the decision (step 6) is supported by Arto's exportable governance record. The governance certificate and audit trail can be exported for DPO review, IT security assessment, information governance approval or scrutiny committee presentation in the format each body requires.

Maintaining governance through the deployment lifecycle (step 7) is addressed by Arto's ongoing governance infrastructure. The audit trail is maintained continuously and automatically. The accountability record is preserved permanently. There is no separate maintenance workload.

Scope definition and accountability structure (steps 1 and 3) remain the responsibility of the organisation. No platform can define the problem you are trying to solve or assign accountability to the officers within your organisation. Arto provides the governance infrastructure. The decisions about what to deploy and who is accountable for it remain with the people responsible for the service.