Getting AI approved internally in a public sector organisation is primarily a documentation and sequencing challenge, not a technology challenge. The approval bodies (the DPO, IT security, information governance and senior leadership) are not opposed to AI. They are responsible for protecting the organisation from risk. Give them the evidence they need, in the format they expect, before they ask for it, and approval becomes straightforward.

Most AI deployments stall not because they are non-compliant but because the evidence of compliance is not ready when the approval conversation begins. A DPO who receives a well-prepared DPIA, a completed data flow map and a clear accountability structure has very little left to assess. A DPO who receives a request to approve an AI tool without that documentation has every reason to pause the project until it is ready.

This page sets out who the approval bodies are, what each one needs, the order in which to engage them, the objections you are likely to face and how to address them, and what a well-prepared approval submission looks like in practice.

Why AI approval stalls in local government

AI approval stalls in local government for three structural reasons, and they almost always combine.

Documentation is assembled in response to questions rather than in advance

The most common pattern: a service lead requests approval, the approval body asks for specific evidence, the service lead returns to assemble that evidence, the approval body raises further questions when the evidence arrives, and the project waits through multiple cycles. Each cycle can take weeks. The total delay is not caused by opposition; it is caused by the sequential nature of reactive documentation assembly.

Approval bodies are engaged in the wrong order

Information governance, IT security and the DPO each have distinct questions. Some of those questions depend on answers from other bodies: for example, the IT security assessment informs part of the DPIA. When these bodies are approached simultaneously or in the wrong sequence, the process generates conflicting feedback and creates rework. The correct sequence is: scope definition, information governance, IT security, DPO, then senior leadership or scrutiny. Deviating from this order typically extends the process.

The deployment was not designed with approval in mind

Approval processes exist to assess risk. If the deployment was designed without governance in mind, then the approval process discovers governance gaps rather than confirming their absence. Each gap requires either remediation (which takes time) or a risk-acceptance decision (which requires additional justification). Deployments designed governance-first, where compliance is embedded before any data is processed, move through approval significantly faster because there are fewer gaps to discover.

Approval does not slow AI down. Discovering governance gaps during approval slows AI down. The fix is not to bypass approval but to design deployments that have no gaps to discover.

Who approves AI in a public sector organisation and what they need

In most public sector organisations, an AI deployment requires approval from four bodies. They have different responsibilities, different questions and different evidence requirements. Understanding what each one needs before you approach them is the single most effective way to accelerate the approval process.

Data Protection Officer (DPO)

What they need: A completed Data Protection Impact Assessment (DPIA) covering the lawful basis for processing, the data minimisation approach, the data flow map, the retention and disposal schedule, the rights of data subjects including Article 22 automated decision-making provisions, and the accountability structure for AI-assisted decisions.

They will ask: Is personal data being processed? What is the lawful basis? Who is the data controller and who is the data processor, the platform provider or the council? Does data leave the UK? Could this be classed as high-risk processing under Article 35? What happens if a resident requests an explanation of an AI-assisted decision?

Arto provides: A pre-populated DPIA evidence base for every Arto Supported Flow. The governance certificate showing data minimisation controls enforced at platform level. Confirmation that data never leaves the UK and is never used to train AI models. AWS London hosting documentation. ISO 27001 certification.

IT Security Lead

What they need: Documentation of the AI platform's hosting environment, data encryption approach (in transit and at rest), access control model, incident response procedure, penetration testing history and third-party security certifications. They need to be satisfied that the platform does not introduce unacceptable security risk to council systems.

They will ask: Where is the data hosted? Is it UK-only? What certifications does the platform hold? How is access to the AI system controlled? What happens in the event of a data breach? Does the platform integrate with our existing systems and if so, what access does it require?

Arto provides: AWS London hosting environment documentation. ISO 27001 certification. Data encryption details. Access control architecture. Confirmation that data never leaves the UK and is not used to train AI models. Integration architecture documentation for any back-office system connections.

Information Governance Lead

What they need: Confirmation that the deployment meets the organisation's information governance policies. This typically means a data flow map showing where data originates, how it is processed, where it is stored and when it is disposed of. They also need to know whether any new data sharing agreements are required with third parties.

They will ask: Does this create any new data flows we need to record? Do we need to update our Records of Processing Activities? Is a data sharing agreement required with the platform provider? How long is data retained and how is it disposed of?

Arto provides: The data flow map for the specific workflow. Confirmation of processing arrangements. The governance certificate showing which data was accessed in each run. Retention and disposal information. Data processing agreement support.

Senior Leadership or Scrutiny Committee

What they need: An accessible summary of what the deployment does, who approved it at an operational level, what governance is in place, what the expected outcome is and — where possible — evidence from comparable deployments. Leadership needs to be able to defend the decision publicly if challenged.

They will ask: What does this AI system actually do? Who is accountable if something goes wrong? How do we know it is compliant? What measurable benefit will this deliver? Have other councils done this? Can we see the results?

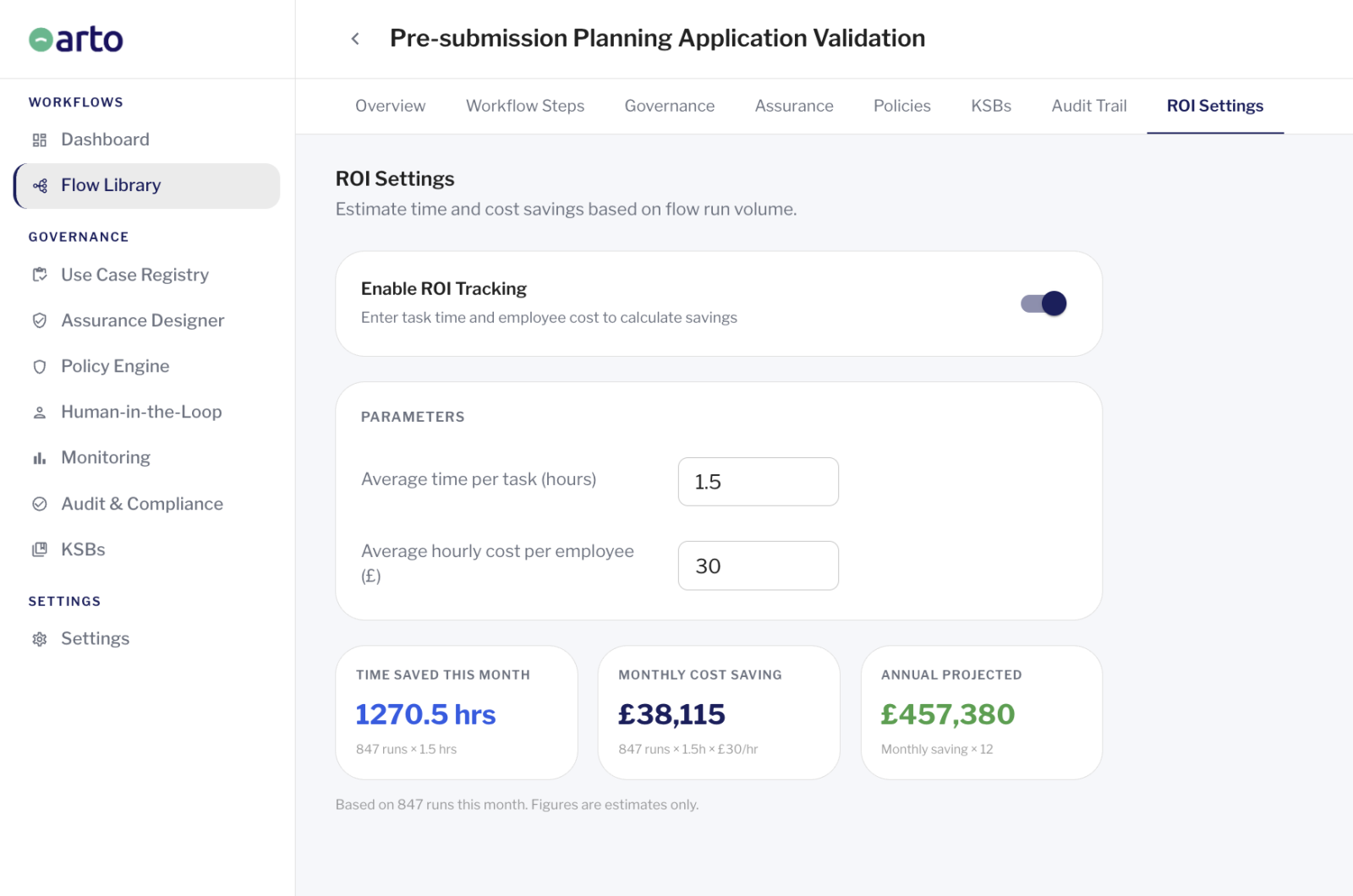

Arto provides: The Assurance Designer summary for the workflow, providing an accessible governance overview. The Redcar and Cleveland Council contact centre case study showing a 28% reduction in contact centre demand within three months. ROI dashboard evidence from the first deployment period.

The correct order for engaging approval bodies

Approaching approval bodies simultaneously or in the wrong order is one of the most common causes of extended approval timelines. Each body's questions depend partly on what other bodies have already confirmed, and presenting an incomplete picture to any one body creates feedback loops that extend the process.

The correct sequence is as follows:

Step 1 - Scope definition (before any external engagement)

Before approaching any approval body, document the deployment precisely: what problem it addresses, what data it will process, what AI model it uses, what decisions it will inform or make, who is accountable for each decision and what the human oversight mechanism is. This document is the foundation for every subsequent approval conversation. Without it, approval bodies cannot assess risk accurately, and each body's questions will generate new documentation requests.

Step 2 - Information governance review

Submit the scope document to the information governance lead first. Their primary question is whether new data flows are created and whether new processing activities need to be recorded. This step is quick when the scope is well-defined and typically produces a confirmed data flow map and an updated Records of Processing Activities entry, both of which are inputs to the DPIA.

Step 3 - IT security assessment

Once the data flow is confirmed, submit to the IT security lead. Their assessment depends on knowing exactly what data is being processed, where it is going and what external systems are involved — all of which the information governance review has now established. The IT security assessment typically produces confirmation of the hosting environment and any conditions on the integration approach, which the DPO needs to complete their assessment.

Step 4 - Data Protection Officer

The DPO assessment comes fourth because it depends on the outputs of both the information governance review (data flow map, ROPA update) and the IT security assessment (hosting confirmation, integration conditions). Presenting a DPIA that already incorporates these outputs reduces the DPO's questions significantly and typically halves the number of assessment cycles required.

Step 5 - Senior leadership or scrutiny

With DPO sign-off confirmed, the submission to senior leadership is straightforward. The governance package is complete. The compliance questions have been answered by qualified experts. The presentation to leadership can focus on the purpose, the expected outcome and the governance assurance, not on defending the compliance approach.

IN SHORT: Engage information governance first, then IT security, then the DPO, then leadership. Each step produces outputs that the next step requires. Running them in sequence is faster than running them in parallel.
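The sequencing above can be read as a dependency graph: each stage needs outputs from earlier stages. The following is a minimal sketch in Python, not part of any Arto tooling; the stage names and the `DEPENDS_ON` mapping are illustrative assumptions drawn from the five steps described above. It uses the standard library's `graphlib.TopologicalSorter` (Python 3.9+) to derive an engagement order from those dependencies.

```python
from graphlib import TopologicalSorter

# Hypothetical stage names. Each stage maps to the stages whose
# outputs it needs before it can start (as described in Steps 1-5).
DEPENDS_ON = {
    "scope_definition": set(),
    "information_governance": {"scope_definition"},
    "it_security": {"information_governance"},
    # The DPO needs the data flow map / ROPA update and the hosting confirmation.
    "dpo": {"information_governance", "it_security"},
    "senior_leadership": {"dpo"},
}


def engagement_order(depends_on):
    """Return the stages in an order that respects every dependency."""
    return list(TopologicalSorter(depends_on).static_order())


order = engagement_order(DEPENDS_ON)
print(" -> ".join(order))
```

Because every stage here depends on the one before it, only one valid order exists. If an organisation's own process has stages that do not depend on each other, the same approach would show which of them could safely run side by side.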

The objections you will face in the approval process

These are the six objections that arise most frequently in public sector AI approval conversations. Each one is addressable. None requires the deployment to be paused or redesigned if governance is built in from the start.

We are not sure this is GDPR-compliant.

The lawful basis for processing is public task under Article 6(1)(e) of UK GDPR. A DPIA has been completed covering the processing scope, data minimisation approach and data subject rights. The platform hosts data on AWS London, processes no data outside the UK and does not use council data to train AI models. The DPO can review the completed DPIA and supporting documentation.

We do not know who is accountable if the AI gets something wrong.

Accountability remains with the organisation and the named officer responsible for each AI-assisted decision. The AI does not make decisions; it produces outputs that an officer reviews and approves. Every approval is recorded in the audit trail with a timestamp and officer attribution. The accountability structure document sets out who is responsible for each element of the deployment.

We cannot explain how the AI makes its decisions.

Every workflow run generates an immutable audit record capturing the trigger, the data accessed, the AI reasoning, the human oversight gate decisions and the output produced. That record is available immediately for review. The AI reasoning is captured in a form that can be explained to a data subject or a scrutiny committee.

We are worried about data leaving the UK.

Data processed through this platform is hosted on AWS London infrastructure. It never leaves the UK and is never used to train AI models. The platform is ISO 27001 certified. These commitments are documented in the data processing agreement and can be confirmed with the platform's data security team.

We need to see this go to scrutiny committee before we can proceed.

This is a reasonable requirement for a first AI deployment. The governance package — including the DPIA, accountability structure, audit trail architecture and DPO sign-off — provides everything a scrutiny committee needs to assess the deployment. If comparable deployment results are available, these should be included. The committee's questions will typically focus on accountability, transparency and outcomes rather than technical detail.

We do not have a corporate AI policy yet. Can we deploy without one?

Many councils are deploying AI before a corporate AI policy is in place. The absence of a corporate policy does not prevent a specific deployment from being approved, provided that deployment has its own governance framework: defined scope, established accountability, compliance with applicable standards and a functioning audit trail. The deployment itself then becomes evidence to inform the eventual corporate policy.

What a complete AI approval submission looks like

A complete AI approval submission presents the evidence that every approval body needs, assembled in a single structured package, before any approval conversation begins. The goal is to eliminate the question-and-answer cycle that extends most approval timelines.

Presenting this package before the first approval conversation, rather than assembling it reactively in response to questions, is the single most effective way to reduce approval timelines. It signals to approval bodies that the deployment has been designed carefully and that their questions have been anticipated. It typically reduces the number of approval cycles from three or four to one.

A complete submission for a standard AI workflow deployment in the public sector should contain the following:

- Deployment scope document: what the workflow does, what problem it addresses, what data it processes, what decisions it informs, who is accountable and what the human oversight mechanism is.

- Data flow map: where data originates, how it is processed in the workflow, where it is stored, when it is disposed of and whether any third-party processors are involved.

- Records of Processing Activities update: the new processing activity recorded in the ROPA before submission to the DPO.

- Data Protection Impact Assessment: covering lawful basis, data minimisation, data subject rights (including Article 22), risk assessment and risk mitigation measures.

- Equality impact assessment: confirming that the workflow does not introduce discriminatory outcomes for protected characteristic groups.

- IT security assessment: confirmation of hosting environment, encryption, access controls and incident response procedure.

- Accountability structure document: named individuals responsible for each element of the deployment, including the senior responsible officer and approving officers for human oversight gates.

- Governance certificate from a test run: evidence that the compliance framework is functioning as specified before live data is processed.

- Comparable deployment evidence: where available, evidence of measurable outcomes from comparable deployments in other councils.
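For teams that track submission readiness in a script or spreadsheet export, the checklist above can be expressed as a simple completeness check. This is a minimal sketch under stated assumptions: the document identifiers are hypothetical labels for the items listed above, and comparable deployment evidence is treated as optional because the checklist asks for it only "where available".

```python
# Hypothetical identifiers for the documents in the checklist above.
REQUIRED_DOCUMENTS = [
    "deployment_scope_document",
    "data_flow_map",
    "ropa_update",
    "dpia",
    "equality_impact_assessment",
    "it_security_assessment",
    "accountability_structure_document",
    "governance_certificate_test_run",
]

# Included "where available", so it does not block submission in this sketch.
OPTIONAL_DOCUMENTS = ["comparable_deployment_evidence"]


def missing_documents(prepared):
    """Return required documents not yet prepared, in checklist order."""
    return [doc for doc in REQUIRED_DOCUMENTS if doc not in prepared]


def ready_to_submit(prepared):
    """A package is ready only when no required document is missing."""
    return not missing_documents(prepared)


# Example: a package with only the scope document and data flow map so far.
prepared_so_far = {"deployment_scope_document", "data_flow_map"}
print(missing_documents(prepared_so_far))
print(ready_to_submit(prepared_so_far))
```

Running the check before the first approval conversation makes gaps visible at the point where they are cheapest to close.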

How Arto reduces the approval burden

The approval process described above requires significant documentation and can take weeks of internal coordination. Arto reduces the documentation burden substantially at the platform level.

- For the DPO: the Assurance Designer pre-populates DPIA supplementary evidence; the governance certificate confirms data minimisation, oversight gates and compliance checks are functioning.

- For IT security: AWS London hosting, ISO 27001 certification and data residency commitments are documented and available for the security assessment submission.

- For information governance: the audit trail provides data flow evidence for ROPA updates; data processing and retention documentation is available from the platform.

- For senior leadership: the ROI dashboard provides measurable outcome evidence; the Assurance Designer summary provides a governance overview suitable for scrutiny presentation.

- For the submission as a whole: the governance certificate and audit trail from a test run can be included as evidence the compliance framework was tested before live data was processed.

Scope definition and the equality impact assessment remain the organisation's responsibility.

Where to go from here

Assembling the documentation

If you are preparing your first approval submission, the step-by-step governance guide covers every document in detail.

How to apply AI governance

Currently stalled in approval

If a deployment is already in the approval process and facing the issues described above, speak with the Arto team. We can help identify the specific gap and provide the documentation needed to move it forward.

Talk to the team

Ready to see Arto in practice

If you want to see how Arto's governance package holds up in your specific approval context, book a governance review.

Book a governance review