What is the UK Government AI Playbook?

The UK Government AI Playbook is published by the Government Digital Service (GDS), part of the Department for Science, Innovation and Technology (DSIT), and sets out guidance for responsible AI use across UK public services. It applies to central government departments, local authorities, NHS trusts and other public bodies. It was developed to give public sector organisations a practical framework for deploying AI safely, transparently and accountably.

The Playbook contains 10 principles. Each principle addresses a different aspect of responsible AI practice, from how you assess whether AI is the right solution to how you maintain oversight of AI in operation. Taken together, they describe what responsible AI deployment looks like for a UK public sector organisation.

The Playbook is not legislation. Compliance is not legally required. However, it represents the expected standard of practice for any public sector organisation deploying AI, and its principles are increasingly referenced in procurement frameworks, internal audit requirements and AI governance assessments. Demonstrating alignment with the Playbook is the clearest way to show that an AI deployment meets the standard of care expected of a public body.

Relationship to other frameworks

The Playbook draws directly from the OECD AI Principles and the DSIT AI framework. It is the operational expression of both, translating the international ethical baseline into practical guidance for UK public services. If you understand the OECD principles, you will recognise the same values running through all 10 Playbook principles. If your AI deployment satisfies the Playbook, it also demonstrates strong alignment with ISO 42001 and the OECD framework.

The 10 principles of the UK Government AI Playbook

Each principle is explained below in plain language, with a note on what it requires from a council deploying AI and how Arto's governance framework satisfies it by default.

PRINCIPLE 1 Understand what AI is and isn't

Before deploying AI, ensure the people responsible for the deployment genuinely understand what the system does, what its limitations are, and where it can and cannot reliably be used. This principle guards against deploying AI in situations where the technology is unsuited to the task or where the responsible team lacks the understanding to oversee it properly.

What this means for your council: Service leads and governance teams must be able to articulate what the AI system does, what data it uses, what its known limitations are, and what happens when it is wrong. This should be documented before deployment, not assumed.

How Arto satisfies this: Arto's KSB profile for each workflow defines precisely what the AI agent does and does not do. The Assurance Designer requires the deploying team to document the system's scope, limitations and intended use before the workflow is activated. This documentation satisfies Principle 1's requirement for informed deployment.

PRINCIPLE 2 Ensure AI is used for appropriate purposes

AI should be deployed to address a specific, well-defined need. Councils should be able to explain why AI is the right solution for this particular problem rather than a non-AI alternative, and should document that reasoning. This principle prevents the deployment of AI for its own sake or in contexts where simpler approaches would be more reliable.

What this means for your council: Before deploying any AI workflow, confirm that the problem being addressed is specific and measurable, that the AI approach is proportionate to the problem, and that the decision to use AI over a non-AI alternative is documented. This documentation should form part of the governance approval submission.

How Arto satisfies this: Arto's workflow design process begins with a specific, defined service problem. The Assurance Designer includes a documented rationale for AI use, which satisfies Principle 2's requirement for an evidenced purpose assessment.

PRINCIPLE 3 Be transparent about the use of AI

Where AI is used to inform or make decisions that affect individuals, those individuals should be informed that AI is involved. Public bodies have a duty to be open about how decisions are made on behalf of the public they serve. Transparency about AI use also helps build public trust in the decisions being made.

What this means for your council: Councils must be able to tell residents, staff and elected members which processes involve AI and what role the AI plays in those processes. Where AI informs decisions about individuals (for example on benefits, housing or planning), there should be a clear, accessible way of communicating that to the people affected. Privacy notices and service information should reflect AI use.

How Arto satisfies this: Arto's audit trail records AI involvement in every decision. The audit trail provides a timestamped record of every AI output and the human decision made in response. This audit infrastructure supports the transparency communications that Principle 3 requires.

PRINCIPLE 4 Take responsibility for AI outcomes

AI cannot be accountable; only the humans and organisations that deploy it can be. This principle requires public bodies to assign clear accountability for every AI deployment and every AI-assisted decision. There must be a named individual responsible for each deployment, and a named officer responsible for each decision the AI informs.

What this means for your council: Every AI deployment in a council must have a named senior responsible officer. Every decision informed by AI must have a named officer who reviewed the AI output and made the decision. Both must be documented. Diffusing accountability ('the AI decided') is not acceptable in the public sector.

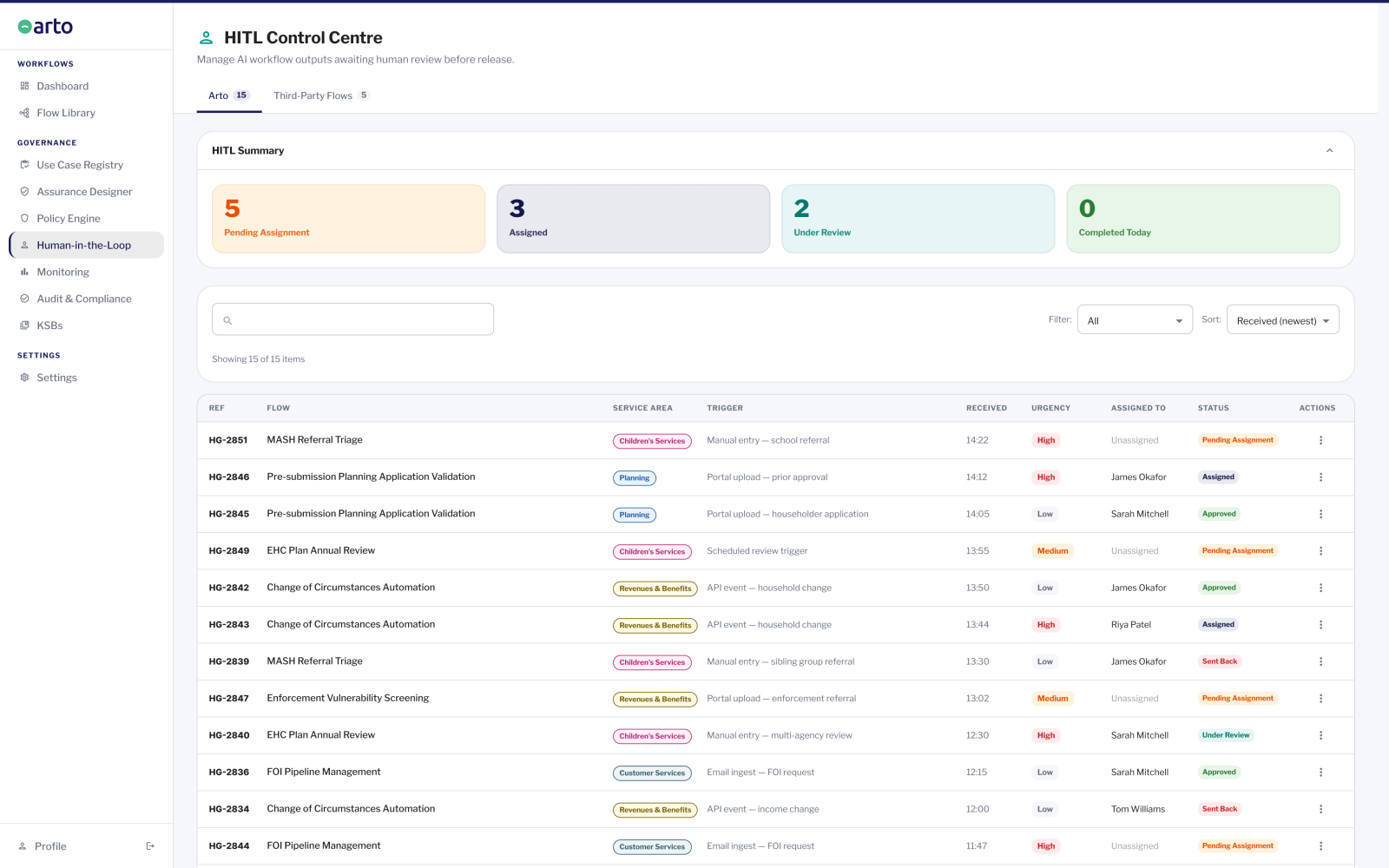

How Arto satisfies this: Arto's HITL Control Centre assigns every AI output to a named officer for review and decision. That officer's sign-off is permanently recorded in the audit trail alongside their name and the timestamp. The accountability structure is documented in the Assurance Designer before deployment begins. Principle 4's accountability requirement is structurally enforced by the platform.

PRINCIPLE 5 Make sure AI is safe

AI systems must be tested, monitored and managed to ensure they operate safely and reliably. This includes testing before deployment, ongoing monitoring during operation, and a clear process for identifying and responding to failures. AI that produces harmful, incorrect or biased outputs must be identified and addressed promptly.

What this means for your council: Councils must test AI systems before live deployment, monitor their outputs in operation, and have a defined process for what happens when the AI produces an incorrect or potentially harmful output. This process should be documented and the responsible team should be briefed on it.

How Arto satisfies this: Arto Supported Flows are tested before live activation. The Monitoring dashboard surfaces run outcomes, anomalies and active alerts in real time. The HITL Control Centre ensures that outputs with potential for harm are reviewed by a qualified officer before being acted upon. The workflow can be paused immediately if safety concerns arise.

PRINCIPLE 6 Ensure AI is secure

AI systems must be protected against unauthorised access, manipulation and data breaches. This includes the security of the AI model itself, the data it processes, and the systems it connects to. Public sector organisations hold sensitive data about residents and must ensure that data cannot be compromised through their AI deployments.

What this means for your council: Councils must ensure that any AI platform they use meets the security standards expected of public sector systems. This includes understanding where data is hosted, how it is encrypted, who can access it and what the incident response process is. Security should be assessed as part of the IT security review before deployment.

How Arto satisfies this: Arto is hosted on AWS London infrastructure, ISO 27001 certified, with data encrypted in transit and at rest. Data processed through Arto never leaves the UK and is never used to train AI models. Access controls restrict system access to authorised users. Security documentation is available for IT security assessment.

PRINCIPLE 7 Define the problem before seeking an AI solution

This principle reinforces Principle 2 from a different direction: the starting point should always be a clearly articulated problem, not a technology. Teams should resist the temptation to adopt AI because it is available or fashionable and should instead work backwards from the specific outcome they need to achieve.

What this means for your council: Councils should begin every AI deployment discussion with a problem statement, not a technology specification. The problem statement should include the current state, the desired state and a measurable definition of success. This statement should be approved by the responsible service lead before any AI product evaluation begins.

How Arto satisfies this: Arto's deployment model is explicitly problem-first. Each Arto Supported Flow is built around a specific, documented public sector service problem with measurable outcomes. The ROI dashboard measures performance against the original problem definition from the first run.

PRINCIPLE 8 Assess risks before deploying AI

A structured risk assessment must be completed before any AI deployment, covering data risks, decision-making risks, operational risks and reputational risks. For public sector organisations, this typically means completing a Data Protection Impact Assessment, an equality impact assessment and an AI-specific risk review. These assessments should be completed before live data is processed.

What this means for your council: Councils must complete documented risk assessments for every AI deployment. The DPIA is the minimum requirement where personal data is processed. Equality impact, operational risk and reputational risk should also be assessed. These assessments should be part of the standard pre-deployment approval process.

How Arto satisfies this: Arto's Assurance Designer pre-populates the evidence base for DPIA supplementary documentation, equality considerations and operational risk. For Arto Supported Flows, the majority of the risk assessment documentation is pre-completed. The test run audit trail confirms that the governance framework is functioning before live data is processed.

PRINCIPLE 9 Maintain human oversight of AI

AI must not be permitted to make consequential decisions about individuals or services without human review and approval. Public sector organisations have a duty of care to the people they serve that cannot be delegated to an automated system. Where AI informs decisions, a qualified human must review the AI output and make the final determination.

What this means for your council: Every AI-assisted decision in a council that affects an individual, whether on housing, benefits, social care, planning or any other service, must be reviewed and approved by a qualified officer before it is acted upon. The officer's decision must be recorded. 'The system decided' is not a valid defence in a challenge or complaint.

How Arto satisfies this: Arto's HITL Control Centre enforces human oversight at defined decision points in every workflow. The workflow cannot proceed past a HITL gate without officer review and sign-off. Every sign-off is permanently attributed to a named officer with a timestamp. Principle 9 is structurally enforced; it is not optional or configurable.
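To make the idea of a human-in-the-loop gate concrete, the sketch below shows one way such a gate could be modelled. It is illustrative only and is not Arto's implementation: the names `GatedOutput`, `SignOff` and `HumanReviewRequired` are hypothetical, and the rule that only an "approved" decision releases the output is an assumption made for the example.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


class HumanReviewRequired(Exception):
    """Raised when an AI output is released without an approving sign-off."""


@dataclass(frozen=True)
class SignOff:
    # Frozen so that a recorded sign-off cannot be edited afterwards.
    officer_name: str
    decision: str  # assumed values: "approved" or "rejected"
    signed_at: datetime


@dataclass
class GatedOutput:
    """An AI output that is held until a named officer has reviewed it."""

    ai_output: str
    sign_off: Optional[SignOff] = None

    def record_sign_off(self, officer_name: str, decision: str) -> None:
        if not officer_name.strip():
            raise ValueError("a named officer is required")
        if self.sign_off is not None:
            raise ValueError("sign-off already recorded and cannot be changed")
        # Timestamp the decision in UTC at the moment it is recorded.
        self.sign_off = SignOff(officer_name, decision, datetime.now(timezone.utc))

    def release(self) -> str:
        # The gate: nothing passes without an officer's approval on record.
        if self.sign_off is None:
            raise HumanReviewRequired("no officer has reviewed this output")
        if self.sign_off.decision != "approved":
            raise HumanReviewRequired("the reviewing officer did not approve this output")
        return self.ai_output
```

In this sketch, calling `release()` before `record_sign_off()` raises an exception rather than returning the AI output, which mirrors the requirement that the officer's decision, not the system's, is what allows a case to proceed.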

PRINCIPLE 10 Review AI applications regularly

AI deployments are not set-and-forget. The AI model, the data it uses, the decisions it informs and the context in which it operates all change over time. Public sector organisations must review their AI deployments regularly to ensure they continue to perform as intended, remain compliant with applicable standards, and continue to serve the purpose for which they were deployed.

What this means for your council: Councils must establish a review schedule for every AI deployment. The review should cover: is the system still performing as intended? Has the data or the context changed? Has the compliance framework changed? Is the accountability structure still valid? These reviews should be documented and the outcomes recorded.

How Arto satisfies this: Arto's Monitoring dashboard provides real-time performance data for ongoing review. The organisation governance score reflects the current completion state of assurance records across all active flows. The Assurance Designer maintains a structured review record. The audit trail provides the performance data needed for periodic reviews.
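A review schedule of the kind Principle 10 describes can be kept very simply. The sketch below is a hypothetical illustration, not part of Arto or the Playbook: the `Deployment` class, the `record_review` helper and the 180-day default interval are all assumptions chosen for the example. It uses the four review questions listed above and refuses to record a review unless each one has been answered.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# The four review questions from the guidance above.
REVIEW_QUESTIONS = (
    "Is the system still performing as intended?",
    "Has the data or the context changed?",
    "Has the compliance framework changed?",
    "Is the accountability structure still valid?",
)


@dataclass
class Deployment:
    name: str
    last_reviewed: date
    review_interval_days: int = 180  # assumed interval; set per local policy

    def next_review_due(self) -> date:
        return self.last_reviewed + timedelta(days=self.review_interval_days)

    def is_overdue(self, today: date) -> bool:
        return today > self.next_review_due()


def record_review(deployment: Deployment, answers: dict[str, str], reviewed_on: date) -> dict:
    """Record a completed review, requiring an answer to every question."""
    missing = [q for q in REVIEW_QUESTIONS if not answers.get(q, "").strip()]
    if missing:
        raise ValueError(f"unanswered review questions: {missing}")
    deployment.last_reviewed = reviewed_on
    return {
        "deployment": deployment.name,
        "reviewed_on": reviewed_on.isoformat(),
        "answers": dict(answers),
    }
```

The returned dictionary stands in for the documented outcome of the review; in practice it would be stored wherever the council keeps its assurance records.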

Is the UK Government AI Playbook mandatory?

The UK Government AI Playbook is guidance, not legislation. Compliance is not legally required in the way that UK GDPR compliance is legally required.

However, the Playbook's status as guidance does not make it optional in practice. The Government Digital Service (GDS) has published it as the expected standard of responsible AI practice for UK public services. It is increasingly referenced in central government procurement frameworks, supplier assessments and internal audit standards. Councils that cannot demonstrate alignment with the Playbook are likely to face increasing difficulty in procurement and audit contexts as AI governance expectations mature.

The more useful question is not 'must we comply?' but 'what happens if we deploy AI without considering these principles?' The answer: deployments that ignore the Playbook's requirements on transparency, accountability, human oversight and safety are at meaningful risk of public challenge, ICO inquiry or reputational damage. The principles are not arbitrary; they exist because real harms have occurred in AI deployments that lacked them.

The UK Government's direction of travel is towards AI governance being more formally regulated, not less. The Playbook's principles are likely to inform future statutory requirements. Organisations that are already aligned will be better positioned when those requirements arrive.

IN SHORT: The Playbook is guidance, not law. But treating it as optional carries real procurement and reputational risk. The principles represent the expected standard of practice for any public body deploying AI.

How the AI Playbook relates to OECD, DSIT and ISO 42001

The UK Government AI Playbook does not exist in isolation. It sits within a layered framework of international and domestic AI governance standards that are related by design.

The OECD AI Principles, published in 2019 and adopted by the UK government, established the international ethical baseline for AI: inclusive growth, human-centred values, transparency, robustness and accountability. These five principles are the foundation.

The DSIT AI Principles are the UK's domestic adaptation of the OECD framework. They translate the international principles into the UK regulatory and public sector context. The Playbook's 10 operational principles are the practical expression of the DSIT framework: they tell practitioners what to do, not just what to value.

ISO 42001, the international standard for AI management systems, addresses many of the same requirements as the Playbook from a technical and process perspective. Where the Playbook focuses on values and principles, ISO 42001 provides the management system framework for implementing them. The two frameworks are complementary: a council that satisfies ISO 42001 will find the Playbook's operational requirements substantially covered.

For a council deploying AI, understanding this lineage matters because it means satisfying the Playbook is not an isolated exercise. It is part of a consistent, internationally aligned approach to responsible AI that the UK public sector is converging on.

How Arto implements the AI Playbook principles by default

Arto's architecture, including KSB role profiles, the HITL Control Centre, the Assurance Designer, the Monitoring dashboard and the audit trail, directly supports the Playbook's requirements across all 10 principles. For Arto Supported Flows, the deploying council inherits this alignment by default.

Three principles require human judgement that no platform can substitute for: understanding what the AI does, ensuring it is used for an appropriate purpose, and defining the problem before seeking a solution. For those, the Assurance Designer provides the structured documentation framework. The judgement and accountability remain with the council.

Where to go from here

See all four governance frameworks

The AI Standards Hub explains how UK GDPR, ISO 42001, the OECD Principles and the AI Playbook work together.

AI Governance Standards

See how governance works in practice

The AI Governance Foundation page explains how Arto embeds all four frameworks and what it produces for your approval bodies.

The AI Governance Foundation

Discuss your governance requirements

Speak with the Arto team to understand how the platform satisfies the Playbook for your specific service area and deployment.

Book a governance review