ISO/IEC 42001 (commonly referred to as ISO 42001) is the international standard for AI management systems, published jointly by the International Organization for Standardization (ISO) and the International Electrotechnical Commission (IEC) in December 2023. It provides organisations with a structured framework for governing AI responsibly, covering AI risk assessment, human oversight, transparency of AI systems and a continual improvement cycle. It is the first international standard dedicated specifically to AI management, as distinct from the broader information security standards that preceded it.

For UK public sector organisations deploying AI, ISO 42001 is the primary technical standard for demonstrating that AI is being managed safely and accountably. It is increasingly referenced in procurement assessments, internal audit requirements and governance frameworks — including the UK Government AI Playbook, which draws on the same principles.

ISO 42001 is not a legal requirement: no legislation mandates compliance. However, it is the established international benchmark for AI management practice, and organisations that can demonstrate alignment with it are better positioned in procurement, in regulatory conversations and in public accountability contexts.

IN SHORT: ISO 42001 is the international AI management standard. It covers what ISO 27001 does not: AI-specific risk assessment, oversight and governance. For public sector AI deployments, alignment with ISO 42001 is the clearest way to demonstrate that AI is being managed safely and accountably.

ISO 27001 and ISO 42001: what is the difference?

The short answer: ISO 27001 covers how you protect information. ISO 42001 covers how you govern AI. Both apply to an organisation deploying AI — they address different dimensions of the same deployment.

ISO 27001 was not designed with AI in mind. It covers information security management — protecting data from unauthorised access, ensuring confidentiality, integrity and availability. It does not address how an AI system is scoped and defined, how risks specific to AI (such as model drift, biased outputs or opaque reasoning) are managed, how human oversight is enforced, or how the governance of AI decisions is documented and maintained.

ISO 42001 was designed specifically for AI. It addresses the governance and management requirements that AI systems introduce and that information security standards do not cover. Organisations that have ISO 27001 certification still need to consider ISO 42001 for their AI deployments. The two standards are complementary, not alternatives.

| Dimension | ISO 27001 | ISO 42001 |
| --- | --- | --- |
| What it covers | Information security management: protecting data from unauthorised access and ensuring confidentiality, integrity and availability | AI management systems: governing AI responsibly, including AI-specific risk, human oversight, transparency and continual improvement |
| What it was designed for | Traditional IT systems and data processing. Predates the widespread deployment of AI as a decision-support or automation tool | AI systems specifically. Designed to fill the governance gap that information security standards do not address |
| AI-specific risk | Not addressed. Covers information security risk but not AI risks such as model drift, biased outputs, opaque reasoning or unintended automation | Core focus. Requires organisations to identify, assess and mitigate AI-specific risks throughout the deployment lifecycle |
| Human oversight | Not specifically addressed | Explicit requirement. Organisations must define where human oversight is required and how it is enforced |
| Transparency of AI reasoning | Not addressed | Addressed. Organisations must be able to explain AI system behaviour and output to affected parties |
| AI policy | Not specifically required | Required. Organisations must establish and maintain an AI policy covering the purpose, scope and governance of AI use |
| Role in an AI deployment | Remains relevant for the data security dimension of AI deployments | Not replaced by ISO 27001. Both apply simultaneously to an AI deployment that processes personal data |

IN SHORT: ISO 27001 covers information security. ISO 42001 covers AI governance. Having ISO 27001 does not mean you have addressed ISO 42001's requirements. For AI deployments, both standards apply — to the security of the data and to the governance of the AI itself.

What ISO 42001 requires: five areas a council must address

1. An AI policy

ISO 42001 requires organisations to establish, document and maintain an AI policy. This policy sets out the organisation's approach to AI: its purpose and scope, the principles it will apply, the oversight it will maintain and the accountability it will establish. The AI policy is the governing document that all AI deployments sit beneath.

What this means for a council: Establish a corporate AI policy before any AI deployment goes live. The policy should cover: what AI will and will not be used for, who is responsible for AI governance, how AI deployments will be approved and reviewed, and how the organisation will ensure compliance with applicable standards. The policy must be approved at a senior level and communicated to relevant staff.

Arto: Arto's Assurance Designer includes a policy linkage section where the organisation's AI policies are registered and linked to individual workflows. This creates the documented connection between the corporate AI policy and each specific deployment that ISO 42001 requires. The Policy Engine in Arto provides visibility of which policies apply to which flows.

2. AI risk assessment

ISO 42001 requires a structured risk assessment for each AI deployment, addressing risks specific to AI rather than just the information security risks covered by ISO 27001. AI-specific risks include model inaccuracy, biased outputs, lack of transparency in AI reasoning, over-reliance on AI outputs, and the risk of AI being used outside its intended scope.

What this means for a council: Before deploying any AI workflow, conduct a documented risk assessment covering AI-specific risks in addition to the standard information security and data protection risk assessment. Identify the specific AI risks for this workflow, assess their likelihood and impact, and document the mitigation measures in place. The risk assessment should be reviewed if the workflow changes or if new risks are identified in operation.
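To make this guidance concrete, the sketch below records a workflow's AI-specific risks as structured data and scores each one on likelihood and impact. It is a minimal illustration, not part of Arto or of ISO 42001: the 1 to 5 scales, the escalation threshold of 12, the class names and the example workflow and risks are all assumptions, and a council would substitute its own corporate risk matrix.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import date

# Assumed scoring scheme: ISO 42001 does not prescribe one.
SCALE = range(1, 6)          # 1 = very low, 5 = very high
ESCALATION_THRESHOLD = 12    # hypothetical local risk appetite


@dataclass
class AIRisk:
    description: str
    likelihood: int
    impact: int
    mitigation: str

    def __post_init__(self) -> None:
        # Reject scores outside the assumed 1 to 5 scale.
        if self.likelihood not in SCALE or self.impact not in SCALE:
            raise ValueError("likelihood and impact must be between 1 and 5")

    @property
    def score(self) -> int:
        # Simple likelihood x impact matrix score (1 to 25).
        return self.likelihood * self.impact


@dataclass
class RiskAssessment:
    workflow: str
    assessed_on: date
    risks: list[AIRisk] = field(default_factory=list)

    def needs_escalation(self) -> list[AIRisk]:
        # Risks at or above the threshold, highest score first.
        high = [r for r in self.risks if r.score >= ESCALATION_THRESHOLD]
        return sorted(high, key=lambda r: r.score, reverse=True)


# Example register for a hypothetical workflow.
assessment = RiskAssessment(
    workflow="Example eligibility triage workflow",
    assessed_on=date(2025, 1, 15),
    risks=[
        AIRisk("Biased outputs for some applicant groups", 3, 5,
               "Officer review of every recommendation; periodic bias check"),
        AIRisk("AI used outside its intended scope", 2, 4,
               "Workflow restricted to triage only"),
        AIRisk("Over-reliance on AI recommendations", 4, 4,
               "Mandatory human sign-off before any decision is issued"),
    ],
)

for risk in assessment.needs_escalation():
    print(risk.score, risk.description)
# 16 Over-reliance on AI recommendations
# 15 Biased outputs for some applicant groups
```

Keeping the register as data rather than free text makes it straightforward to re-score risks when the workflow changes and to show reviewers which risks were escalated and why.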

Arto: Arto's Assurance Designer provides a pre-populated risk assessment framework for each Arto Supported Flow, covering AI-specific risks. The KSB profile for each workflow constrains the AI agent to its defined scope, directly mitigating the risk of AI operating outside its intended boundaries. The monitoring dashboard surfaces runtime anomalies that may indicate emerging risks.

3. Human oversight

ISO 42001 requires organisations to define where human oversight is necessary in their AI deployments and to implement that oversight in a way that is documented and enforceable. The standard recognises that AI systems should not operate without appropriate human accountability for the decisions they inform or make, particularly where those decisions affect individuals.

What this means for a council: For each AI workflow, define the points at which human review and approval are required. Document who is responsible for oversight at each point. Ensure that the oversight is technically enforced, not just expected, so that the AI cannot proceed past a decision point without human sign-off. Record every oversight decision with the name of the responsible officer and the timestamp.
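The difference between oversight that is expected and oversight that is enforced can be shown in a few lines. In this minimal sketch, which is hypothetical and not Arto's implementation, a gate object refuses to let the workflow continue until a named officer has approved, and every decision is appended to an audit log with a UTC timestamp. The class names, method names and officer name are illustrative assumptions.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timezone
from enum import Enum


class Outcome(Enum):
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass(frozen=True)
class SignOff:
    gate: str
    officer: str          # named responsible officer
    outcome: Outcome
    timestamp: datetime   # recorded in UTC


class HumanOversightGate:
    """Blocks a workflow at a decision point until a named officer signs off."""

    def __init__(self, name: str, audit_log: list[SignOff]) -> None:
        self.name = name
        self.audit_log = audit_log          # shared record; the gate only appends
        self._decision: SignOff | None = None

    def record_decision(self, officer: str, outcome: Outcome) -> SignOff:
        if not officer.strip():
            raise ValueError("a named officer is required for sign-off")
        decision = SignOff(self.name, officer, outcome,
                           datetime.now(timezone.utc))
        self.audit_log.append(decision)     # every decision is logged
        self._decision = decision
        return decision

    def require_approval(self) -> SignOff:
        # Called by the workflow before it continues: raises unless approved.
        if self._decision is None:
            raise PermissionError(f"{self.name}: awaiting officer review")
        if self._decision.outcome is not Outcome.APPROVED:
            raise PermissionError(
                f"{self.name}: rejected by {self._decision.officer}")
        return self._decision


audit_log: list[SignOff] = []
gate = HumanOversightGate("Eligibility decision", audit_log)

try:
    gate.require_approval()          # no sign-off yet, so the workflow stops
except PermissionError as err:
    print(err)                       # Eligibility decision: awaiting officer review

gate.record_decision("J. Smith", Outcome.APPROVED)   # hypothetical officer
approved = gate.require_approval()
print(approved.officer, approved.outcome.value)     # J. Smith approved
```

The key design choice is that the check sits in the workflow's execution path: `require_approval` raises an error rather than returning a warning, so the workflow cannot pass the decision point unless a named officer has approved.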

Arto: Arto's HITL (human-in-the-loop) Control Centre enforces human oversight at defined decision points in every workflow. The workflow cannot proceed past a HITL gate without officer review and recorded sign-off. Every decision is attributed to a named officer with a timestamp, providing the documented, enforceable oversight record that ISO 42001 requires.

4. Transparency of AI systems

ISO 42001 requires organisations to maintain transparency about their AI systems, both internally (staff and management understanding what the AI does) and externally (being able to explain AI behaviour to affected individuals and oversight bodies). This connects directly to the transparency rights under UK GDPR, including the right to meaningful information about the logic involved in solely automated decision-making, and to the transparency requirements of the UK Government AI Playbook.

What this means for a council: Document what each AI workflow does, what data it uses, what decisions it informs and how it reaches its outputs. Be prepared to explain AI system behaviour to affected residents, to the ICO, to internal audit and to elected members. This does not require technical explainability of model internals; it requires a plain-language account of what the AI does and what it produces.

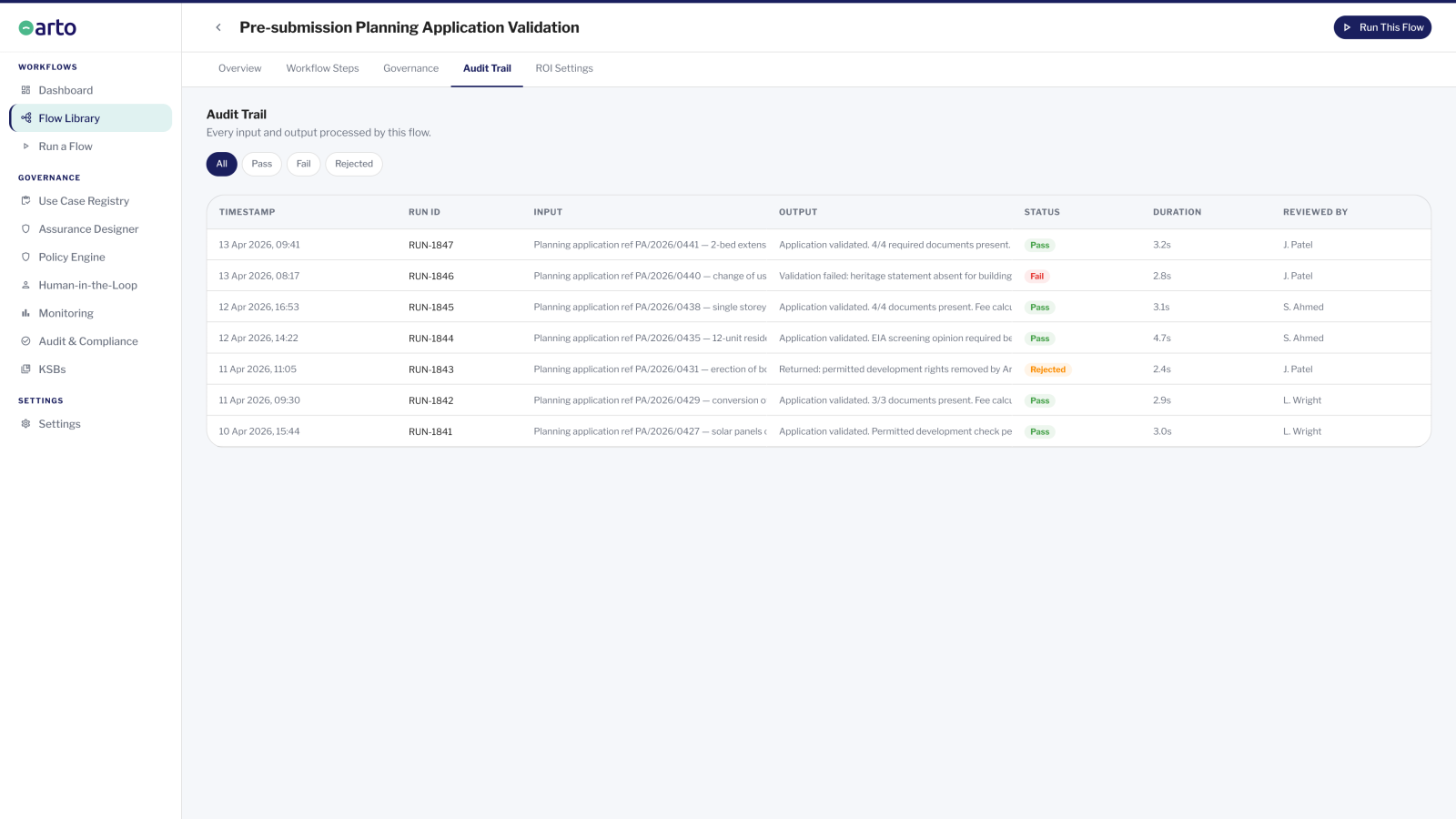

Arto: The audit trail in Arto captures the AI reasoning and output for every workflow execution, creating the technical transparency record that can underpin explanations to affected individuals or oversight bodies. The Assurance Designer documents the workflow's scope and purpose, supporting internal transparency requirements.

5. Continual improvement

ISO 42001 requires organisations to monitor AI deployments over time, review their performance, identify improvements and implement them. AI systems do not remain static: the data they process changes, the context in which they operate changes, and the risks they present may change. A management system standard requires an ongoing improvement cycle, not a one-time compliance exercise.

What this means for a council: Establish a review schedule for each AI deployment. The review should cover performance against the original purpose, changes to the risk profile, compliance with current standards, and any issues identified in operation. Document the reviews and any actions taken. ISO 42001 requires evidence of continual improvement — sporadic review without documentation does not satisfy the requirement.
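A review schedule can be tracked in the same structured way. The sketch below, a hypothetical illustration rather than anything ISO 42001 or Arto prescribes, keeps a dated record of each review with its findings and actions, and flags a deployment whose next review is overdue. The 90-day interval, the class names and the example data are assumptions.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import date, timedelta


@dataclass
class ReviewRecord:
    reviewed_on: date
    reviewer: str
    findings: str
    actions: list[str] = field(default_factory=list)


@dataclass
class Deployment:
    name: str
    go_live: date
    review_interval_days: int = 90      # assumed local review policy
    reviews: list[ReviewRecord] = field(default_factory=list)

    def next_review_due(self) -> date:
        # One interval after the most recent review, or after go-live if none.
        last = max((r.reviewed_on for r in self.reviews), default=self.go_live)
        return last + timedelta(days=self.review_interval_days)

    def is_overdue(self, today: date) -> bool:
        return today > self.next_review_due()


deployment = Deployment("Example triage workflow", go_live=date(2025, 1, 6))
print(deployment.next_review_due())              # 2025-04-06

deployment.reviews.append(ReviewRecord(
    reviewed_on=date(2025, 4, 1),
    reviewer="Service manager",
    findings="Purpose and scope unchanged; no new risks identified",
    actions=["Refresh officer guidance note"],
))
print(deployment.next_review_due())              # 2025-06-30
print(deployment.is_overdue(date(2025, 7, 1)))   # True
```

Because each review is a dated record with its findings and actions, the schedule itself doubles as documented evidence of the review cycle, rather than relying on calendar reminders.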

Arto: Arto's Monitoring dashboard provides the ongoing performance data needed for review cycles: run volumes, outcome distributions, governance scores and active alerts. The organisation governance score reflects the current completion state of assurance records across all active flows, signalling where review or update is needed. The Assurance Designer maintains a structured record that can be updated as deployments evolve.

Is ISO 42001 mandatory for UK public sector organisations?

ISO 42001 is not currently a legal requirement for UK public sector organisations. No legislation mandates ISO 42001 compliance, and the ICO has not made it a regulatory requirement.

However, ISO 42001 is rapidly becoming the expected standard of practice for responsible AI management, and its absence is increasingly noticed. UK central government procurement frameworks are beginning to reference ISO 42001 compliance as a consideration in AI-related contracts and supplier assessments. Local government procurement — particularly for higher-risk AI applications in social care, safeguarding and financial services — is likely to follow. Councils that are procuring AI platforms are also likely to ask their suppliers about ISO 42001 alignment, even if they are not yet ISO 42001 certified themselves.

The more useful framing is not 'must we comply?' but 'what does ISO 42001 alignment demonstrate?' The answer is that it provides evidence, measured against an internationally recognised standard, that AI is being managed systematically: not as a one-off project, but as a managed and improving capability with documented governance, defined oversight and a commitment to continual review. That evidence is increasingly valuable in procurement, audit and public accountability contexts, regardless of whether certification is formally required.

ISO 42001 alignment tells procurement bodies, auditors and the public that AI is being managed as a serious organisational responsibility, not treated as a technology project that happens to use AI.

How Arto supports ISO 42001 compliance for AI deployments

Arto's governance architecture directly supports the key requirements of ISO 42001. The Policy Engine and Assurance Designer support the AI policy and risk assessment requirements. The HITL Control Centre enforces and records human oversight. The audit trail captures AI reasoning and output. The Monitoring dashboard provides the ongoing performance data that continual improvement requires.

For Arto Supported Flows, the technical governance record is generated automatically from the first run: the KSB profile, pre-populated Assurance Designer sections, governance certificate, audit trail and Monitoring dashboard. The council's evidence base for ISO 42001 alignment is therefore substantially built before the Data Protection Officer (DPO) assessment begins.

Where to go from here

See how governance works in practice

A step-by-step walkthrough of how all five ISO 42001 requirements operate in a live Arto workflow.

How It Works

See all four governance standards

How ISO 42001, UK GDPR, the OECD Principles and the AI Playbook work together as Arto's governance foundation.

AI Governance Standards

Get ISO 42001 alignment in your context

Speak with the Arto team to walk through how the platform satisfies ISO 42001 requirements for your specific service area.

Book a governance review