The OECD AI Principles are five internationally agreed standards for responsible AI, adopted in 2019 by member countries of the Organisation for Economic Co-operation and Development, including the United Kingdom. They were the first intergovernmental standard on AI and remain the foundation on which the UK's own AI governance approach is built.

For UK public sector organisations, the OECD principles matter because they are the source from which the UK's domestic frameworks flow. The DSIT AI Principles are a UK domestic adaptation of the OECD framework. The UK Government AI Playbook translates those principles into operational practice. When a council follows the AI Playbook, it is expressing, in practical form, commitments that originate in internationally agreed values established at the OECD.

The five principles cover: inclusive growth, sustainable development and well-being; human-centred values and fairness; transparency and explainability; robustness, security and safety; and accountability. Taken together, they describe what it means for an AI deployment to be responsible: not merely technically compliant with a specific rule, but genuinely aligned with the values that responsible AI practice requires.

IN SHORT: The OECD AI Principles are the international ethical foundation for AI governance. The UK's DSIT principles and AI Playbook both derive from them. Understanding the OECD principles explains why UK AI governance frameworks say what they say.

Where UK AI governance comes from: the OECD-to-Playbook lineage

Layer 1 (International) OECD AI Principles

Five principles for responsible AI agreed by member countries in 2019 and updated in 2024. Sets the international ethical baseline: what responsible AI requires as a matter of shared global values.

The foundation. Adopted by the UK when it signed the OECD AI Principles.

Layer 2 (UK domestic) DSIT AI Principles

The UK government's domestic expression of the OECD principles, published by the Department for Science, Innovation and Technology. Adapts the international baseline to UK regulatory context and public sector obligations.

Directly derived from the OECD framework. Not a separate set of values: an adaptation of the same values for UK context.

Layer 3 (Operational) UK Government AI Playbook

Ten operational principles for public servants deploying AI, derived from DSIT and OECD frameworks. Translates values into practical guidance: what to do, not just what to value.

The operational expression. When councils follow the Playbook, they are applying OECD values in practice.

This layered structure is why the four governance frameworks that Arto embeds are coherent rather than contradictory. UK GDPR provides the legal data protection framework. ISO 42001 provides the technical AI management framework. The OECD Principles and AI Playbook provide the ethical and operational framework. All four address different dimensions of responsible AI deployment and all four derive their legitimacy from a common set of internationally agreed values.

When a council follows the UK Government AI Playbook, it is not following an arbitrary set of government guidelines. It is expressing, in practical form, an internationally agreed commitment to responsible AI that the UK government made when it adopted the OECD AI Principles.

The five OECD AI Principles explained for public sector

Principle 1 Inclusive growth, sustainable development and well-being

AI should be designed and deployed in ways that benefit people broadly. For public sector organisations, this principle establishes that AI must serve the public interest: improving outcomes for residents, reducing inequality in service access, and contributing to the well-being of the communities the organisation serves.

What this means for your council:

For every AI deployment, a council should be able to answer the question: who benefits from this, and how? The answer should be centred on residents and service users, not just on operational efficiency. An AI workflow that saves officer time while also improving the consistency and speed of a service residents receive is aligned with Principle 1. One that saves officer time but degrades the resident experience is not.

How Arto aligns:

Arto's deployment model is outcomes-focused. Each Arto Supported Flow is built around a specific service improvement for residents alongside the operational efficiency benefit. The ROI dashboard tracks both staff time saved and service delivery improvements, keeping both dimensions visible throughout the deployment lifecycle.

Principle 2 Human-centred values and fairness

AI must respect human rights, democratic values and the rule of law. It must be designed to avoid discrimination and ensure fair treatment for all individuals. For public sector organisations, this principle connects directly to the legal equality obligations that councils already hold under the Equality Act 2010: AI deployments must not introduce or amplify discriminatory outcomes.

What this means for your council:

Before deploying any AI workflow, conduct an equality impact assessment to identify whether the AI could produce differential outcomes for groups with protected characteristics. Where the AI uses data that reflects historical patterns of discrimination, the model must be assessed and, where necessary, adjusted. Document the equality assessment and include it in the submission to your Data Protection Officer (DPO). Principle 2 is not satisfied by asserting that the AI is neutral; it requires demonstrated fairness.
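
One way to make "differential outcomes" concrete is to compare how often each group receives a favourable outcome during a test run. The following is a minimal sketch only. The group labels, the sample data and the 0.8 review threshold are illustrative assumptions, not a legal standard and not part of any Arto feature; a real assessment would use your council's own data and judgement.

```python
from collections import defaultdict


def outcome_rates(records):
    """Return the share of favourable outcomes for each group.

    `records` is an iterable of (group, favourable) pairs, where
    `favourable` is True when the AI-assisted outcome was positive.
    """
    totals = defaultdict(int)
    favourable = defaultdict(int)
    for group, is_favourable in records:
        totals[group] += 1
        if is_favourable:
            favourable[group] += 1
    return {group: favourable[group] / totals[group] for group in totals}


def disparity_ratio(rates):
    """Ratio of the lowest group rate to the highest (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a favourable outcome
    return min(rates.values()) / highest


# Hypothetical test-run data: (group label, favourable outcome?)
sample = [
    ("group_a", True), ("group_a", True), ("group_a", True), ("group_a", False),
    ("group_b", True), ("group_b", False), ("group_b", False), ("group_b", False),
]

rates = outcome_rates(sample)
ratio = disparity_ratio(rates)

REVIEW_THRESHOLD = 0.8  # illustrative assumption, not a legal standard
needs_review = ratio < REVIEW_THRESHOLD
print(rates, round(ratio, 2), needs_review)
```

A low ratio does not prove discrimination, and a high one does not rule it out. It is a prompt for the human review that the equality impact assessment should document.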

How Arto aligns:

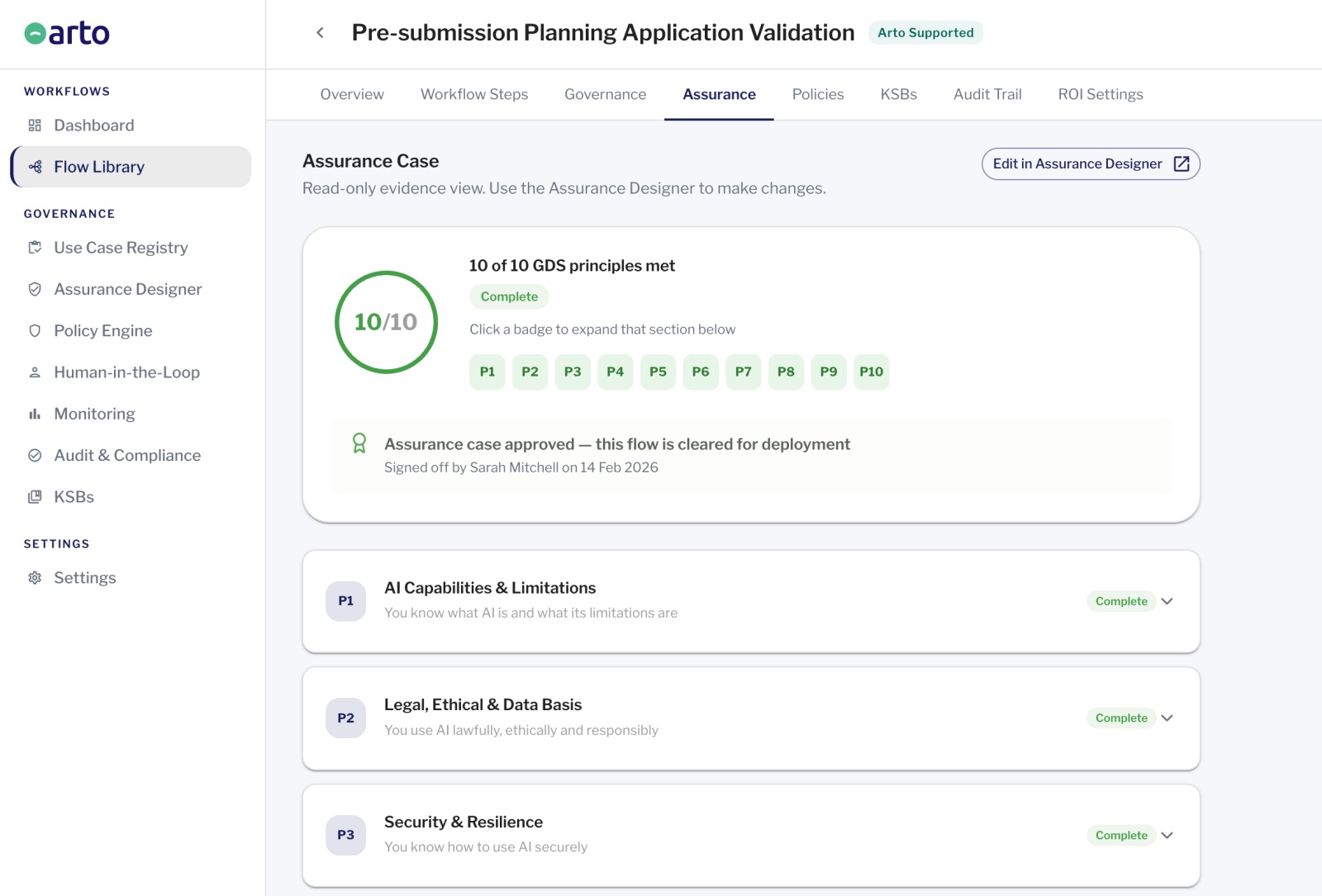

Arto's Assurance Designer includes an equality considerations section as part of the pre-deployment governance record. The Assurance Designer record confirms that the workflow is operating within its defined scope: a scope that has been assessed for equality implications before deployment.

Principle 3 Transparency and explainability

Individuals should be informed when AI is involved in decisions that affect them, and organisations should be able to explain AI behaviour in terms meaningful to affected parties. This connects directly to UK GDPR transparency obligations and to its provisions on automated decision-making, which are often described as a right to explanation. It also connects to the accountability obligations of public sector organisations, which are expected to be open about how they make decisions.

What this means for your council:

Be able to explain in plain language what any AI system your council deploys does, what data it uses, what decisions it informs and what the AI's role was in any specific case. This is not a requirement for technical transparency of model internals. It is a requirement for institutional transparency: the council should be able to give an honest account of how AI is used in its services to residents, elected members and oversight bodies.

How Arto aligns:

Arto's audit trail captures the AI reasoning and output for every execution. The audit trail confirms AI involvement in each run. This record underpins the transparency communications that Principle 3 requires, providing the evidence base for explaining AI involvement to any individual or oversight body that asks.

Principle 4 Robustness, security and safety

AI systems must be safe, secure and reliable. They should perform consistently as intended, be protected against adversarial interference, and have safeguards in place to identify and address failures. For public sector AI, where decisions can directly affect the welfare of residents, an AI that produces incorrect outputs in safety-critical contexts is not acceptable.

What this means for your council:

Test AI workflows thoroughly before live deployment. Monitor their outputs in operation and have a documented process for what happens when the AI performs incorrectly or unexpectedly. Ensure the AI system is protected against data tampering or model manipulation. Review performance regularly and have a clear escalation path when issues are identified. Principle 4 is an ongoing obligation, not a one-time check at deployment.
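
As an illustration of ongoing monitoring with a clear escalation trigger, the sketch below tracks the most recent reviewed outputs and flags when the share of incorrect ones exceeds a limit. It is a simplified, hypothetical example: the class name, the window size and the 10% limit are assumptions chosen for illustration and do not describe how any particular platform implements monitoring.

```python
from collections import deque


class OutputMonitor:
    """Track recent officer-reviewed outputs and flag a high error rate."""

    def __init__(self, window=50, max_error_rate=0.1):
        # Only the most recent `window` results are kept.
        self.results = deque(maxlen=window)
        self.max_error_rate = max_error_rate

    def record(self, was_correct):
        """Record whether a reviewed AI output was judged correct."""
        self.results.append(bool(was_correct))

    def error_rate(self):
        """Share of incorrect outputs in the current window."""
        if not self.results:
            return 0.0
        return self.results.count(False) / len(self.results)

    def needs_escalation(self):
        """True when the error rate exceeds the agreed limit."""
        return self.error_rate() > self.max_error_rate


# Hypothetical run: eight correct outputs followed by two incorrect ones.
monitor = OutputMonitor(window=10, max_error_rate=0.1)
for was_correct in [True] * 8 + [False] * 2:
    monitor.record(was_correct)

print(monitor.error_rate(), monitor.needs_escalation())
```

The limit itself is a governance decision: it should be agreed and documented before go-live, together with who is notified when it is breached.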

How Arto aligns:

Arto's Monitoring dashboard surfaces runtime anomalies, performance deviations and active governance alerts in real time. The human-in-the-loop (HITL) Control Centre prevents unsafe AI outputs from being acted upon without officer review. The test run before live activation confirms that the workflow performs as specified before any resident data is processed.

Principle 5 Accountability

There must be clear accountability for AI systems and the decisions they inform or make. Organisations that deploy AI are responsible for how it behaves, and individuals must be able to seek redress if AI causes them harm. For public sector organisations, accountability is not just an ethical obligation: it is a legal and democratic one. Elected councils are accountable to their residents for every decision made in their name, including those informed by AI.

What this means for your council:

Establish and document a clear accountability structure for every AI deployment before it goes live: who is the senior responsible officer, who owns the governance of this specific workflow, and which officer is accountable for each AI-assisted decision. Ensure that accountability cannot be diffused. 'The AI decided' is not an acceptable response to a challenge or complaint. The officer who reviewed the AI output and made the decision is accountable for that decision.
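
The rule that accountability cannot be diffused can be expressed as a simple constraint on the decision record: no decision is stored without a named reviewing officer and a timestamp. The sketch below is a hypothetical illustration of that constraint. The field names and example values are assumptions and do not represent Arto's data model.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class DecisionRecord:
    """An immutable record tying an AI-assisted decision to a named officer."""

    workflow: str
    case_reference: str
    ai_output_summary: str
    reviewing_officer: str
    decision: str
    # Timestamp is set at creation, in UTC, if not supplied.
    decided_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )

    def __post_init__(self):
        # "The AI decided" is not acceptable: a named officer is mandatory.
        if not self.reviewing_officer.strip():
            raise ValueError("A named reviewing officer is required.")


# Hypothetical example values for illustration only.
record = DecisionRecord(
    workflow="example-workflow",
    case_reference="CASE-0001",
    ai_output_summary="Suggested priority: urgent",
    reviewing_officer="J. Smith",
    decision="Accepted AI suggestion after review",
)
print(record.reviewing_officer, record.decided_at.isoformat())
```

Making the record immutable means a later correction has to be logged as a new entry rather than an overwrite, which keeps the history of who decided what intact.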

How Arto aligns:

Arto's HITL Control Centre attributes every AI-assisted decision to a named officer with a timestamp. The accountability structure is documented in the Assurance Designer before deployment begins. The audit trail provides the permanent, exportable record of accountability that a public sector organisation needs to demonstrate to residents, elected members and oversight bodies.

The DSIT AI Principles and their relationship to the OECD framework

The Department for Science, Innovation and Technology has published AI principles for the UK that are often referenced in public sector AI guidance. These DSIT principles are sometimes treated as a separate framework alongside the OECD principles, which creates unnecessary confusion.

The DSIT AI Principles are not separate from the OECD AI Principles. They are a UK domestic adaptation of the same framework, expressing the same values in language and context appropriate for UK public sector organisations. The DSIT principles cover safety, security and robustness, transparency and explainability, fairness, accountability and governance, and contestability and redress. These are the OECD principles expressed in UK policy language.

Understanding this relationship removes a layer of complexity from AI governance conversations. Organisations do not need to satisfy the OECD principles and the DSIT principles as separate compliance exercises. They are one set of values expressed at two levels. Satisfying the AI Playbook's operational requirements is the practical expression of satisfying both.

IN SHORT: The DSIT AI Principles are a UK adaptation of the OECD framework, not a separate compliance requirement. The AI Playbook is their operational expression. The three represent one coherent set of values at three levels of abstraction.

How Arto's governance framework aligns with the OECD AI Principles

Arto's governance architecture operationalises all five principles. The outcomes-first workflow design and ROI tracking support Principle 1. The Assurance Designer's equality considerations section supports Principle 2. The audit trail and Assurance Designer record meet Principle 3's transparency requirement. The Monitoring dashboard, anomaly detection and HITL gate address Principle 4's robustness requirement. Named officer attribution on every AI-assisted decision structurally enforces Principle 5.

The OECD principles ultimately describe commitments that an organisation must hold, not just technical features a platform can switch on. The council's commitment to serving residents equitably, its equality impact assessment process and its accountability culture are organisational responsibilities that Arto's infrastructure supports but cannot replace.

Where to go from here

The UK Government AI Playbook

The operational expression of the OECD principles: 10 principles explained in plain language for councils.

AI Playbook principles

The AI Governance Foundation

How UK GDPR, ISO 42001, the OECD Principles and the AI Playbook work together as Arto's governance foundation.

The AI Governance Foundation

Discuss your governance requirements

Speak with the Arto team to understand how the OECD principles apply to your specific service area deployment.

Book a governance review