The three categories of AI reputational risk councils face

Reputational risk from AI in the public sector does not usually come from the AI making a dramatic error. It comes from an organisation being unable to account for what the AI did, or from the process surrounding the AI failing to meet the standards the public and regulators expect of public decision-making. Three categories cover most cases.

Category 1 - Operational failure

Scenario: An AI system produces a wrong output (an incorrect benefits calculation, a flawed validation decision, a triage summary that missed a significant risk indicator) and a resident is affected. The story reaches the press or a councillor.

Reputational exposure

If the organisation cannot produce a record of what the AI did, what it recommended, and what the officer decided, it cannot demonstrate that the process was appropriate. The absence of a clear record turns an operational error into an accountability failure. Ombudsman investigations routinely find that inadequate record-keeping, not the original error, is what made the situation indefensible.

Governance remedy

An immutable audit trail that records every workflow execution: what data was processed, what the AI output was, who reviewed it, what they decided, and when. If an error occurred, the record shows where. If the officer exercised appropriate judgement, the record shows that too. A documented error handled correctly is defensible. An undocumented error is not.
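The record described above can be sketched as a hash-chained, append-only log. This is a minimal illustration under stated assumptions, not a description of any particular product: the field names, the `AuditTrail` class and the use of SHA-256 chaining are all hypothetical. A hash chain does not by itself make a log immutable. It makes tampering detectable, so a real deployment would also need write-once storage and access controls.

```python
import hashlib
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone
from typing import Optional

GENESIS_HASH = "0" * 64  # hypothetical "previous hash" used for the first entry


@dataclass(frozen=True)
class AuditEntry:
    workflow_run_id: str
    data_reference: str   # pointer to the data processed, e.g. a case reference
    ai_output: str        # what the AI produced
    reviewed_by: str      # named officer who reviewed the output
    decision: str         # what the officer decided
    timestamp: str        # when, as an ISO 8601 UTC string
    prev_hash: str        # hash of the previous entry (links the chain)
    entry_hash: str = ""  # hash of this entry's fields, including prev_hash


def _hash_fields(fields: dict) -> str:
    # Canonical JSON (sorted keys) so identical fields always hash identically.
    payload = json.dumps(fields, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


class AuditTrail:
    """Append-only, hash-chained log: editing any past entry breaks verify()."""

    def __init__(self) -> None:
        self._entries: list = []

    def append(self, workflow_run_id: str, data_reference: str, ai_output: str,
               reviewed_by: str, decision: str,
               timestamp: Optional[str] = None) -> AuditEntry:
        fields = {
            "workflow_run_id": workflow_run_id,
            "data_reference": data_reference,
            "ai_output": ai_output,
            "reviewed_by": reviewed_by,
            "decision": decision,
            "timestamp": timestamp or datetime.now(timezone.utc).isoformat(),
            "prev_hash": self._entries[-1].entry_hash if self._entries else GENESIS_HASH,
        }
        entry = AuditEntry(**fields, entry_hash=_hash_fields(fields))
        self._entries.append(entry)
        return entry

    def verify(self) -> bool:
        # Recompute every hash and check each entry points at its predecessor.
        expected_prev = GENESIS_HASH
        for entry in self._entries:
            fields = asdict(entry)
            stored_hash = fields.pop("entry_hash")
            if entry.prev_hash != expected_prev or _hash_fields(fields) != stored_hash:
                return False
            expected_prev = stored_hash
        return True

    def entries(self) -> tuple:
        # Read-only copy for searching or export.
        return tuple(self._entries)
```

Storing a reference to the case rather than the personal data itself is an assumed design choice here; it keeps the log focused on what happened and who decided it.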

Category 2 - Process failure

Scenario: A resident, a journalist, or a councillor asks how an AI-influenced decision was reached. The organisation cannot explain what role the AI played, cannot produce the record of human oversight, or cannot demonstrate that the resident's data was handled appropriately.

Reputational exposure

Opacity about AI use in public services is increasingly unacceptable to the public, regulators, and elected members. Councils that cannot explain their AI processes (which decisions AI influenced, what safeguards were in place, how residents can challenge an AI-assisted outcome) face reputational exposure not because something went wrong but because they cannot demonstrate that it went right. The Local Government and Social Care Ombudsman has increasingly expected councils to be able to provide records of how decisions affecting residents were reached.

Governance remedy

Documented governance for every AI deployment: a record of what the AI does in each workflow, what oversight is applied, and how residents can access information about decisions that affected them. Subject access requests can be satisfied from the audit trail. A resident asking how their benefits decision was reached can be shown the full record. Scrutiny committee questions can be answered with evidence, not assurance.

Category 3 - Governance failure

Scenario: An AI deployment was made without a completed DPIA, without DPO sign-off, without an equality impact assessment, or without senior responsible officer accountability. An ICO investigation, an Ombudsman complaint, or a press enquiry reveals the gap.

Reputational exposure

Governance failures discovered after deployment are among the most damaging for councils because they suggest not only that something went wrong, but that the organisation did not follow its own rules before it deployed. The ICO has taken enforcement action against public sector organisations for AI-related data protection failures. The Ombudsman has upheld complaints where AI use was found to be inadequately governed. Elected members asked to account for these failures face the most difficult public position: the organisation deployed AI without proper approval.

Governance remedy

Pre-deployment governance: a completed DPIA for high-risk processing, DPO sign-off, an equality impact assessment, and documented senior responsible officer accountability, all in place before the first live workflow run. This work cannot be done retrospectively in a way that satisfies a regulatory investigation.
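One way to make "before the first live workflow run" enforceable rather than aspirational is a gate that refuses to start live processing until every governance item is recorded. The sketch below is hypothetical throughout (`DeploymentGovernance`, `start_live_run` and the field names are illustrative, not any platform's API) and follows the rule stated above that a DPIA is required for high-risk processing.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class DeploymentGovernance:
    name: str
    high_risk_processing: bool            # does the processing require a DPIA?
    dpia_completed: bool = False
    dpo_signed_off: bool = False
    equality_impact_assessed: bool = False
    sro_name: Optional[str] = None        # a named person, not a team or role title


class GovernanceIncomplete(Exception):
    """Raised when a workflow is started before its governance is in place."""


def missing_governance(g: DeploymentGovernance) -> List[str]:
    # List every outstanding item so the gap is explicit, not just "not ready".
    missing = []
    if g.high_risk_processing and not g.dpia_completed:
        missing.append("DPIA")
    if not g.dpo_signed_off:
        missing.append("DPO sign-off")
    if not g.equality_impact_assessed:
        missing.append("equality impact assessment")
    if not (g.sro_name and g.sro_name.strip()):
        missing.append("named SRO")
    return missing


def start_live_run(g: DeploymentGovernance) -> str:
    # Refuse the first live run rather than let governance be added afterwards.
    missing = missing_governance(g)
    if missing:
        raise GovernanceIncomplete(f"{g.name}: missing {', '.join(missing)}")
    return f"{g.name}: live run permitted"
```

The design choice is to fail closed: a deployment with any missing item cannot run, which is the only ordering that leaves a record showing governance came first.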

In short: Reputational risk from AI comes from operational failure you cannot account for, process opacity you cannot explain, and governance gaps discovered after the fact. All three are addressed by the same governance architecture: documented pre-deployment approval, enforced human oversight, and an immutable audit trail from day one.

Why AI incidents become reputational crises, and why most do not have to

When AI-related incidents in public services reach the press, a scrutiny committee, or a regulatory body, the story is almost never simply 'AI made an error.' AI makes errors; so do humans. What turns an error into a crisis is the organisation's inability to give a coherent account of what happened.

The questions that regulators, journalists, and elected members ask after an AI incident follow a consistent pattern: What exactly did the AI do? Who was responsible for the decision? Did an officer review the AI output before it was acted on? Was the resident's data handled appropriately? Was the deployment approved through the right channels? If the organisation cannot answer these questions from a documented record, the vacuum fills with the worst available assumption.

Conversely, organisations that have maintained a complete governance record from deployment are in a fundamentally different position when an incident occurs. They can produce the audit trail. They can show that an officer reviewed the output. They can demonstrate that the DPIA was completed and the DPO signed off. They can confirm that the equality impact assessment was done. An incident investigated with a complete record is handled as an operational matter. Without a record, it becomes a governance failure that is both more serious and harder to resolve.

The councils that face the most difficult reputational positions over AI are almost always those that deployed AI quickly, using readily available tools and skipping the pre-deployment governance work, and only confronted the governance question when a complaint or investigation required them to produce documentation that did not exist.

Who is responsible if AI gets something wrong in a council?

When AI is used to support a public sector decision and something goes wrong, accountability rests with the named officer who made the final decision, not with the AI system and not with the platform provider. This is both the legal position and the governance design principle.

The officer reviews the AI output, applies professional judgement, and records their decision. That decision is attributed to them by name and timestamp, in an audit record that is permanent. If the decision is challenged in an Ombudsman investigation, a judicial review, or a scrutiny committee hearing, the record shows what the officer decided and when. The AI's output also appears in the record, as the input to the officer's decision, not as the decision itself.
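The separation between the AI's output and the officer's decision can be sketched as a simple human-in-the-loop gate. Everything here (`CaseRecord`, `record_decision`, `act_on`, the required rationale field) is a hypothetical illustration, not any platform's API. What it shows is structural: the AI recommendation is stored as an input, and the case cannot be acted on until a named officer records a decision and their reasoning.

```python
from dataclasses import dataclass, replace
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class CaseRecord:
    case_id: str
    ai_recommendation: str                 # the AI output: an input, not the decision
    officer_name: Optional[str] = None     # who made the final decision
    officer_decision: Optional[str] = None
    rationale: Optional[str] = None        # the officer's recorded reasoning
    decided_at: Optional[str] = None       # ISO 8601 UTC timestamp


def record_decision(case: CaseRecord, officer_name: str,
                    decision: str, rationale: str) -> CaseRecord:
    if not officer_name.strip():
        raise ValueError("a decision must be attributed to a named officer")
    if not rationale.strip():
        raise ValueError("record the officer's reasoning, not just an approval")
    # Return a new record; the AI recommendation is kept alongside the decision.
    return replace(case, officer_name=officer_name, officer_decision=decision,
                   rationale=rationale,
                   decided_at=datetime.now(timezone.utc).isoformat())


def act_on(case: CaseRecord) -> str:
    # The gate: nothing is acted on until a named officer has decided.
    if case.officer_decision is None:
        raise PermissionError(f"case {case.case_id}: no officer decision recorded")
    return case.officer_decision
```

Requiring a rationale is one assumed way to make the difference between genuine review and a bare approval visible in the record; whether a council mandates it is a policy choice.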

This is why well-designed AI governance is protective of officers, not threatening to them. An officer who reviews an AI output and makes a documented professional decision is in a strong position if that decision is later challenged. An officer who approves an AI output without genuine review, or without a record of that review, is in a weak position: not because the AI made a mistake, but because the accountability record does not show that the officer exercised proper judgement.

The senior responsible officer's role

Above the individual officer making case-level decisions, every AI deployment requires a named senior responsible officer. The SRO does not review individual cases. They hold accountability for the governance of the deployment overall: that the DPIA was completed, that the DPO signed off, that the equality impact assessment was done, that the deployment was properly approved, and that the ongoing governance of the workflow meets the required standard.

If a governance failure is found (the DPIA was not completed, the DPO was not consulted, the deployment was not properly approved), accountability for that failure sits with the SRO. This is why the SRO role must be filled by someone with the authority and the capacity to ensure the governance work is done, not assigned as a formality.

For a service lead considering an AI deployment, the question 'who is responsible if something goes wrong?' has a clear answer that is also a preparation checklist: make sure the DPIA is complete, make sure the DPO has signed off, make sure the SRO is properly designated, make sure the audit trail exists from day one. With these in place, accountability is clear and defensible. Without them, the question of who is responsible becomes much harder to answer.

Explaining AI use to residents, elected members, and scrutiny

Reputational risk is not only about what happens when something goes wrong. It also comes from the perception that AI is being used in ways that are opaque, unaccountable, or disrespectful of residents' rights. Proactive transparency, meaning clear communication about what AI does, what it does not do, and how decisions can be challenged, is itself a risk mitigation strategy.

| Audience | What they need to know | How to provide it |
|---|---|---|
| Residents | What role AI played in a decision that affected them. How to request that information. How to challenge the decision. Whether their data was used appropriately. | Privacy notice updated to describe AI use. Subject access requests can be answered using the audit trail. Appeals and complaint processes are unaffected: AI assistance does not remove the right to challenge. Decision letters explain, where relevant, that AI assisted the officer's review. |
| Elected members | What AI the council is using and where. What governance is in place. What happens if something goes wrong. Whether the council is legally exposed. | A summary of active AI deployments from the Use Case Registry. The governance framework applied to each deployment. The DPO sign-off and DPIA status for high-risk processing. A clear accountability structure naming the SRO for each deployment. |
| Scrutiny committee | What outcomes AI is producing. Whether there is evidence of differential impact across resident groups. What officer oversight is applied. How the council would identify and correct an error. | The Monitoring dashboard's outcome distribution data, showing what proportion of cases are handled automatically vs referred for officer review. Equality impact assessment findings. The audit trail as evidence of consistent process. An incident response procedure showing how errors are identified and corrected. |
| Press / media | Whether residents are being treated fairly. Whether AI is making decisions without oversight. Whether the organisation can account for specific cases. | The governance record: the officer named in the audit trail made the decision, not the AI. The DPIA was completed and the DPO signed off. If asked about a specific case, the audit trail provides the record of what happened. A pre-prepared holding statement acknowledging AI use, describing the governance framework, and confirming oversight. |
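The scrutiny row above raises two quantitative questions: what share of cases are handled automatically versus referred for officer review, and whether that share differs across resident groups. A minimal sketch of the calculation follows, assuming each case can be labelled with a group and a route. The group labels, the route names `"automatic"` and `"referred"`, and the function itself are hypothetical and not taken from the Monitoring dashboard.

```python
from collections import Counter, defaultdict
from typing import Dict, Iterable, Tuple


def outcome_distribution(
    cases: Iterable[Tuple[str, str]],
) -> Tuple[Dict[str, float], Dict[str, float]]:
    """cases: (group, route) pairs, where route is 'automatic' or 'referred'.

    Returns the overall share of each route and the referral rate per group.
    """
    overall_counts: Counter = Counter()
    by_group: Dict[str, Counter] = defaultdict(Counter)
    for group, route in cases:
        overall_counts[route] += 1
        by_group[group][route] += 1

    total = sum(overall_counts.values())
    if total == 0:
        return {}, {}
    overall = {route: n / total for route, n in overall_counts.items()}
    # Counter returns 0 for a missing key, so groups with no referrals get 0.0.
    referral_rate = {
        group: counts["referred"] / sum(counts.values())
        for group, counts in by_group.items()
    }
    return overall, referral_rate
```

A difference in referral rates between groups is a prompt to investigate alongside the equality impact assessment, not evidence of unfair treatment on its own; small groups in particular can show large swings by chance.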

Transparency is not primarily about drafting communications. It is about having the record that supports them. An organisation that has maintained complete governance documentation from the first workflow run is in a position to answer any of these audiences accurately and promptly. An organisation that has not maintained the documentation faces the same transparency questions without the evidence to answer them.

What AI governance specifically does to reduce reputational risk

The governance practices that address reputational risk are not complex, but they must be in place before deployment, not assembled retrospectively when a complaint arrives.

- A completed DPIA before any live processing of resident data, demonstrating that the risks were assessed, that the legal basis for processing was established, and that the safeguards were documented. This is the document that answers 'did you think about this before you did it?'

- DPO sign-off confirming that the data protection position is sound. A DPO who has reviewed and approved the deployment is both a governance assurance and a visible accountability structure that regulatory bodies recognise.

- An equality impact assessment demonstrating that the differential impact of the AI system across protected characteristics was assessed and mitigated. This answers the fairness question before it is asked.

- A named senior responsible officer who holds accountability for the governance of the deployment: a named person, not a team or a role title, whose responsibility is documented.

- Human oversight enforced at decision points, with officer decisions attributed by name in the audit trail. This answers 'did a human decide this?' and 'who decided it?' simultaneously.

- An immutable audit trail from the first day of deployment: timestamped, searchable, exportable. This answers almost every accountability question after the fact.

These six practices together produce an organisation that can answer any reasonable question about its AI deployments: what it approved, what it assessed, who is responsible, what the AI did in any specific case, and what the officer decided. The reputational risk of AI in local government is almost entirely concentrated in organisations that are missing one or more of these practices.

How Arto addresses the governance practices that protect against reputational risk

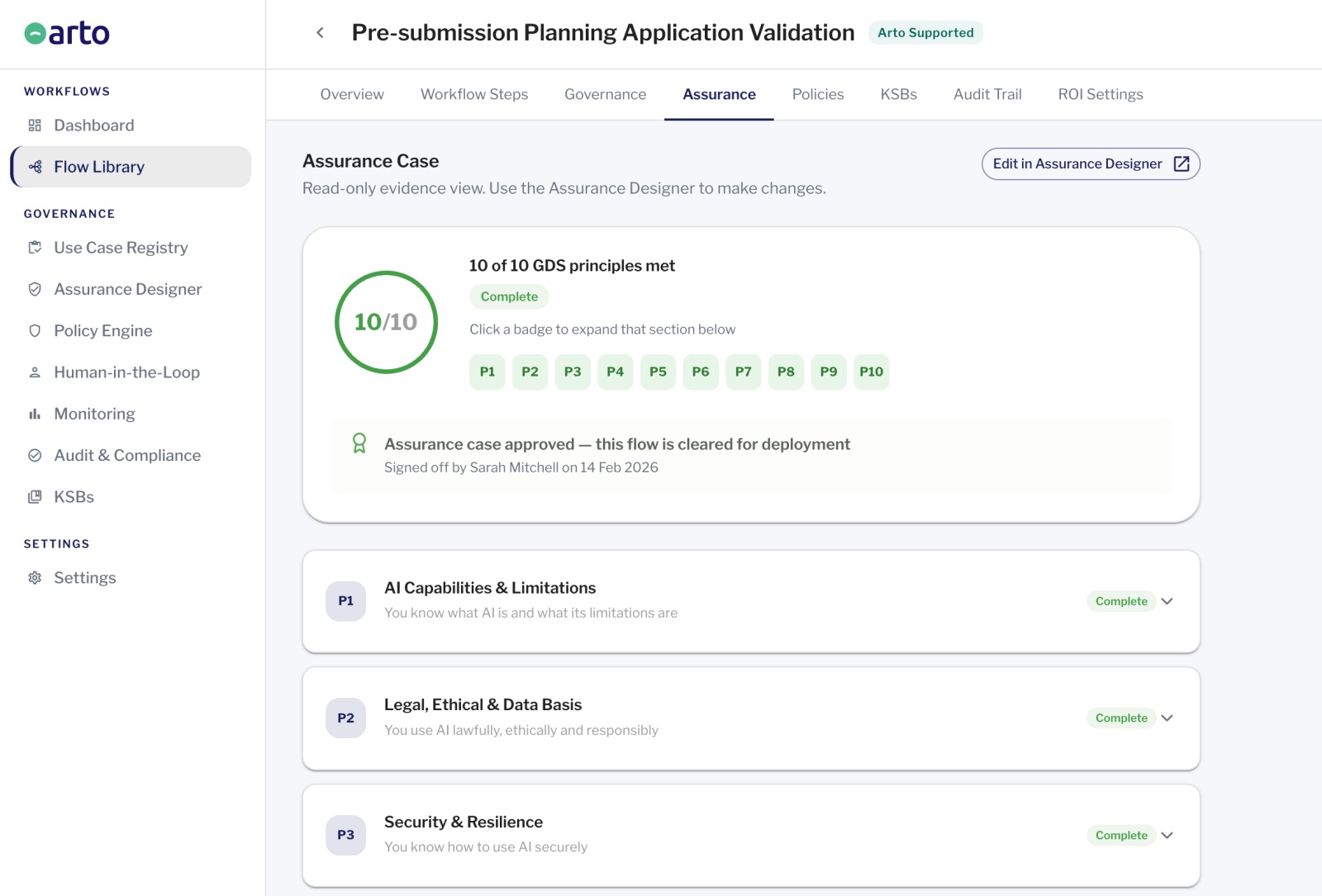

The six governance practices described above are the ones that protect councils from AI reputational risk. Arto operationalises each of them for Arto Supported Flows, not as optional configurations but as structural features of how the platform works.

- DPIA support: pre-populates the technical DPIA sections. The organisation completes the risk narrative.

- DPO sign-off process: produces the documentation package a DPO needs, with the test-run audit trail as evidence.

- Equality impact: includes sections for equality impact assessment alongside the technical governance record.

- Named SRO: records the designated senior responsible officer by name in the permanent governance record.

- Human oversight enforced: HITL gates pre-configured at decision points. Officer decisions attributed by name and timestamp.

- Immutable audit trail: generated automatically from day one. Searchable, exportable, available immediately.