What this question is really asking

The question 'can councils legally use AI for decision making?' is rarely a simple legal query. It is usually one of three things.

Is AI going to be blocked by our legal or governance team?

The answer depends on how the AI is deployed. AI that replaces officer judgement, processes data without a lawful basis, or produces decisions that cannot be explained or challenged is likely to be blocked, and rightly so. AI that supports officer judgement, operates within a documented governance framework, and comes with pre-assembled compliance evidence can be approved.

What is the legal framework I need to understand?

Legal advisors, monitoring officers and governance leads asking this question need a clear account of the applicable legal framework: which areas of law apply, what they require, and where the exposure is.

Is the organisation already exposed?

Chief executives and directors who have seen headlines about AI-related legal challenges sometimes ask this as a risk assessment question: are we doing something that makes us legally vulnerable? Organisations that have deployed AI without appropriate governance are potentially exposed. Organisations that have deployed AI with the governance conditions described below are in a much stronger position.

IN SHORT: The legal answer to the primary question is yes. The governance conditions that make a yes answer correct in practice are the same governance requirements that well-designed AI platforms are built around.

The four areas of law that apply to AI decision making in councils

Four distinct areas of law are relevant to AI use in council decision making. They do not all apply in the same way to every deployment, but any council using AI to inform or support decisions affecting residents should understand all four.

1. Public law and the rationality obligation · Wednesbury unreasonableness · judicial review

Councils exercise statutory functions and make decisions that affect residents' legal rights and entitlements. Those decisions must be lawful, rational and procedurally fair. A decision that was reached by mechanically following an AI output, without an officer applying genuine professional judgement to the recommendation, could be challenged as irrational or procedurally unfair in a judicial review. The AI does not make the decision. The officer makes the decision. If that distinction is not maintained in practice, the decision may not be lawful.

Legal exposure without governance

An officer who simply approves whatever the AI recommends without genuine independent review has arguably not made a decision at all. If that results in a decision a reasonable authority would not have reached, it is potentially vulnerable to judicial review on Wednesbury grounds.

What good governance provides

Human-in-the-loop gates that require genuine officer review before any decision proceeds, not a rubber stamp but recorded professional judgement. An audit trail that shows what the AI recommended and what the officer decided, attributed by name and timestamped. Where the officer's decision differs from the AI recommendation, the reason should be recorded.

2. UK GDPR Article 22 · Solely automated decisions · significant legal effects

Article 22 of UK GDPR restricts solely automated processing that produces decisions with legal or similarly significant effects on individuals. A council tax reduction decision, a housing allocation decision, or a social care assessment outcome all fall within this category. The restriction is on 'solely automated' decisions: decisions where no human being has meaningfully reviewed and determined the outcome. Most well-designed AI workflows in the public sector explicitly avoid this by enforcing human oversight at the decision point, which means Article 22's stricter requirements do not apply in the same way.

Legal exposure without governance

An AI system that processes resident data and produces a decision affecting their legal rights or entitlements, without human review, triggers Article 22 restrictions. Unless one of the exceptions in Article 22(2) applies, that decision is unlawful. ICO enforcement action and individual compensation claims are possible consequences.

What good governance provides

Human-in-the-loop oversight enforced at the decision point, not optional, not bypassable. The AI output is an input to the officer's decision, not the decision itself. The DPIA should assess whether the processing amounts to solely automated decision making and document the safeguards in place. See also the UK GDPR and AI page.

3. The Equality Act 2010 and the Public Sector Equality Duty · Indirect discrimination · s.149 PSED

AI systems trained on historical data can embed historical discrimination. They can also use features that are proxies for protected characteristics. Postcode, for instance, can be a proxy for ethnicity; benefit status can be a proxy for disability. If an AI system produces outcomes that differ systematically across protected groups, and if the council deploys that system without assessing or mitigating the differential impact, the council may be in breach of the Equality Act 2010. The Public Sector Equality Duty under s.149 requires public bodies to have due regard to equality considerations in their functions. For AI this means: understanding what the system does, assessing its differential impact, and documenting that assessment.

Legal exposure without governance

Deploying an AI system that produces systematically different outcomes for residents with protected characteristics, without an equality impact assessment and documented mitigation, could constitute indirect discrimination and breach the Public Sector Equality Duty. Ombudsman complaints, judicial review and reputational exposure are all possible consequences.

What good governance provides

An equality impact assessment of the AI system's outputs before deployment. Documentation of the assessment, the outcomes found, and the mitigations applied. The DPIA should include equality considerations. Where differential impact is found, either the system must be adjusted, human oversight must correct for it, or the council must be able to justify the deployment on legitimate grounds.

4. Common law procedural fairness · Right to reasons · right of challenge

Public law procedural fairness requires that decisions affecting individuals significantly are made through a fair process. This includes, in appropriate cases, a right to reasons — to understand how the decision that affects you was reached. AI-assisted decisions that are opaque, where neither the resident nor the officer can explain the basis of the decision, are exposed to procedural fairness challenges. The AI does not need to be explainable at a technical model level (the resident does not need to understand the neural network). The decision does need to be explainable at the level of what information was considered and what outcome was produced, and why.

Legal exposure without governance

A decision influenced by an AI system that cannot explain its output, including where the AI's reasoning was not recorded and the officer cannot recall or reconstruct what the AI recommended, may be procedurally unfair. Ombudsman investigations routinely consider whether appropriate records were kept to enable review of how a decision was reached.

What good governance provides

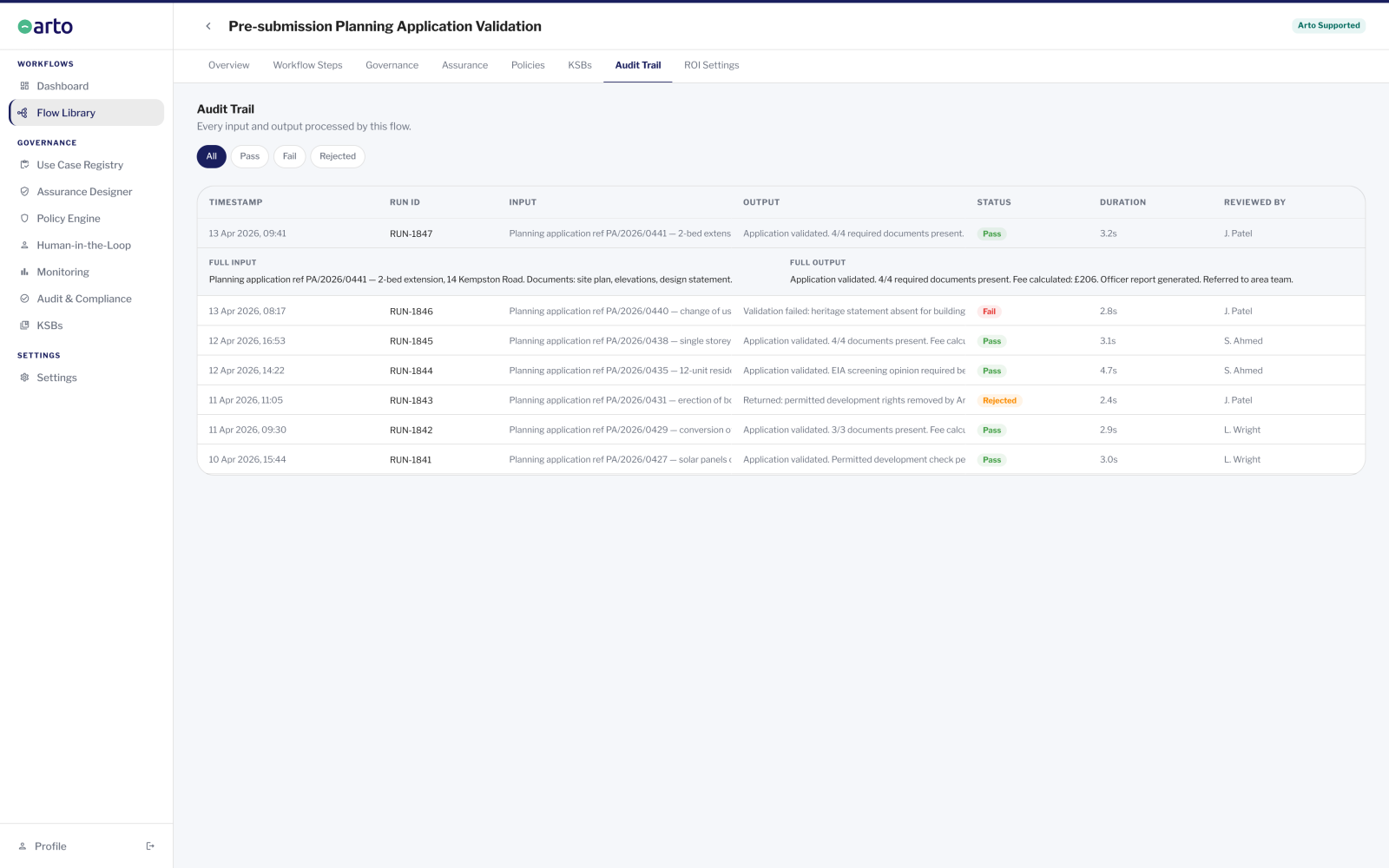

An immutable, searchable audit trail that records what the AI output was, what information it processed, and what the officer decided. Residents making subject access requests or complaints can be shown the full record of the AI-assisted process. Where the AI output was a factor in the decision, it can be explained at the level of what information the AI considered and what it recommended.

What legally sound AI deployment requires in practice

A legally sound council AI deployment satisfies the following requirements. These are not aspirational standards; they are the minimum governance conditions that address the legal exposures described above.

1. Human oversight is enforced, not optional

At every point in the AI workflow where the output will inform or lead to a decision affecting a resident, a named officer must review the AI output and record their decision before the decision proceeds. This must be enforced by the system architecture, not left to individual officer discipline. The officer's review must be genuine, not a click-through approval but a substantive review in which the officer applies professional judgement. Both the AI recommendation and the officer decision should be recorded. If they differ, the reason should be noted.
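As an illustration only, the gate described above might be sketched as follows. The names `record_decision`, `OfficerDecision` and `ReviewRequired` are hypothetical and do not describe any particular platform. The sketch assumes a decision can only be finalised through this one function, so a missing officer name, a missing decision, or an unexplained departure from the AI recommendation stops the workflow.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class OfficerDecision:
    """A recorded decision: who decided, what, when, and why (hypothetical shape)."""
    case_id: str
    ai_recommendation: str
    officer_name: str
    officer_decision: str
    reason: Optional[str]
    decided_at: datetime


class ReviewRequired(Exception):
    """Raised when a decision is attempted without a valid officer review."""


def record_decision(case_id, ai_recommendation, officer_name,
                    officer_decision, reason=None):
    # No named officer, no decision: the gate is enforced in code,
    # not left to individual discipline.
    if not officer_name or not officer_name.strip():
        raise ReviewRequired("A named officer must review the AI output.")
    if not officer_decision or not officer_decision.strip():
        raise ReviewRequired("The officer must record their own decision.")

    # A departure from the AI recommendation must carry a recorded reason.
    differs = (officer_decision.strip().lower()
               != ai_recommendation.strip().lower())
    if differs and not (reason and reason.strip()):
        raise ReviewRequired(
            "Record the reason for departing from the AI recommendation.")

    return OfficerDecision(
        case_id=case_id,
        ai_recommendation=ai_recommendation,
        officer_name=officer_name.strip(),
        officer_decision=officer_decision.strip(),
        reason=reason,
        decided_at=datetime.now(timezone.utc),
    )
```

A gate like this can enforce that a review step happened and was recorded. It cannot prove the review was substantive, which still depends on training, workload and supervision.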

2. The process is auditable and the record is complete

An immutable, timestamped audit trail must record every stage of the AI-assisted process: what triggered the workflow, what data the AI processed, what the AI output was, who reviewed it, what they decided, and when. This record must be searchable by case or by individual resident, to enable response to subject access requests, Ombudsman investigations, legal proceedings, and internal review. The record must be permanent: it should not be possible to alter or delete it after the fact.
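One possible way to make such a record tamper-evident is to chain entries together by hash, so that altering any earlier entry invalidates everything after it. The sketch below is a minimal in-memory illustration under that assumption. The `AuditTrail` class and its field names are hypothetical, and hash chaining is a technique chosen for this example rather than one the requirements above prescribe.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditTrail:
    """Append-only log; each entry's hash covers the previous entry's hash."""

    def __init__(self):
        self._entries = []

    @staticmethod
    def _digest(entry):
        # Hash every field except the stored hash itself.
        payload = {k: v for k, v in entry.items() if k != "hash"}
        encoded = json.dumps(payload, sort_keys=True).encode("utf-8")
        return hashlib.sha256(encoded).hexdigest()

    def append(self, case_id, stage, actor, detail):
        """Record one stage (trigger, AI output, officer decision, ...)."""
        prev_hash = self._entries[-1]["hash"] if self._entries else ""
        entry = {
            "case_id": case_id,
            "stage": stage,
            "actor": actor,
            "detail": detail,
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "prev_hash": prev_hash,
        }
        entry["hash"] = self._digest(entry)
        self._entries.append(entry)
        return entry["hash"]

    def for_case(self, case_id):
        """Return copies of all entries for one case, in order."""
        return [dict(e) for e in self._entries if e["case_id"] == case_id]

    def verify(self):
        """Return True only if no entry has been altered or removed mid-chain."""
        prev_hash = ""
        for entry in self._entries:
            if entry["prev_hash"] != prev_hash:
                return False
            if entry["hash"] != self._digest(entry):
                return False
            prev_hash = entry["hash"]
        return True
```

A hash chain detects alteration; it does not prevent it. A production record would also need storage-level controls such as write-once storage and restricted access, which this sketch does not attempt.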

3. The AI system has been assessed for equality impact

Before deploying any AI system in a function affecting residents, the organisation must assess whether the system's outputs differ systematically across protected groups. This means: obtaining information from the AI provider about what data the system was trained on and what validation has been done; testing the system's outputs across representative groups where possible; documenting the assessment; and either mitigating differential impact or documenting the justification for deployment notwithstanding it. The equality impact assessment should be part of, or appended to, the DPIA.
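The output-testing step might start with something as simple as comparing favourable-outcome rates across groups. The sketch below is illustrative only: the function names, the sample data and the `REVIEW_GAP` threshold are all hypothetical, and the threshold is not a legal test. A gap found this way is a prompt for further assessment and documentation, not a finding of discrimination.

```python
from collections import defaultdict


def outcome_rates(records):
    """Return the share of favourable outcomes per group.

    records: iterable of (group_label, favourable) pairs, where
    favourable is True when the outcome benefited the resident.
    """
    totals = defaultdict(int)
    favourable = defaultdict(int)
    for group, is_favourable in records:
        totals[group] += 1
        if is_favourable:
            favourable[group] += 1
    return {group: favourable[group] / totals[group] for group in totals}


def rate_gap(rates):
    """Largest difference in favourable-outcome rate between any two groups."""
    return max(rates.values()) - min(rates.values())


# Hypothetical trigger for a closer look; an assumption, not a legal standard.
REVIEW_GAP = 0.10

# Made-up test outputs for two illustrative groups.
sample = [
    ("A", True), ("A", True), ("A", False), ("A", True),
    ("B", True), ("B", False), ("B", False), ("B", False),
]

rates = outcome_rates(sample)
needs_review = rate_gap(rates) > REVIEW_GAP
```

With real data, small group sizes make rates unstable, so any comparison of this kind should be read alongside the sample sizes and recorded in the equality impact assessment.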

4. A DPIA has been completed and is current

A Data Protection Impact Assessment (DPIA) must be completed before deploying any AI system that processes personal data in a high-risk way. Most AI systems that inform decisions affecting residents meet the threshold for a DPIA. The DPIA must cover: the purpose and legal basis for processing, the data flows and data minimisation measures, the risks and mitigations, human oversight safeguards, the equality assessment, and the residual risk sign-off. The DPIA must be kept current: if the system or its use changes significantly, the DPIA should be reviewed.

5. Decisions can be explained to residents

Where a resident requests an explanation of a decision that was AI-assisted, the organisation must be able to explain what information was considered, what the AI output was, and what the officer decided and why. The explanation does not need to explain the AI model's internal workings. It needs to explain the decision at the level of factors and reasoning. The audit trail and case record are the primary source for this explanation.

IN SHORT: Legally sound AI deployment in councils is not a theoretical aspiration. It is a governance architecture: enforced human oversight, a complete audit record, an equality assessment, a current DPIA, and the ability to explain decisions. Every one of these requirements is achievable with the right platform and process.

Questions monitoring officers and legal advisors commonly ask

Does AI use in council decisions trigger Article 22 of UK GDPR?

Not automatically. Article 22 applies to 'solely automated' processing that produces decisions with legal or similarly significant effects. If a human officer genuinely reviews the AI output and makes the final determination, and that review is recorded and enforced rather than a formality, the processing is not solely automated and Article 22's stricter requirements do not apply in the same way. The key test is whether the human involvement is genuine or nominal. A well-designed AI workflow with enforced human-in-the-loop (HITL) gates and a complete audit trail is not solely automated.

However: if the AI produces a recommendation and the officer approves it without substantive review, without reading the case, without applying professional judgement, without being capable of taking a different decision, then the human involvement may be nominal rather than genuine, and Article 22 may apply. The distinction is in the architecture and the practice, not the label.


Who is legally responsible if an AI-assisted decision is wrong?

The organisation is responsible. The legal responsibility for decisions made by or on behalf of a public authority rests with that authority, not with the technology provider. This is not changed by the involvement of AI. If a decision made with AI assistance is unlawful, irrational, discriminatory or procedurally unfair, the legal responsibility lies with the council and with the officer who made the decision.

The officer who reviews the AI output and records their decision is accountable for that decision. The AI is a tool that informed the decision. The organisation is responsible for choosing to use that tool, for the governance under which it operates, and for the outcomes it produces. The audit trail that records who decided what and when is the accountability record that evidences the chain of responsibility.

Can a resident challenge a decision that was AI-assisted?

Yes. The normal routes for challenging council decisions remain available: complaint to the council, Ombudsman complaint, judicial review where the decision is amenable to public law challenge. The involvement of AI does not remove or restrict these challenge routes. It does, however, change what an effective challenge looks like: a resident challenging an AI-assisted decision will want to know what information the AI processed, what it recommended, and whether the officer's decision differed from the recommendation and why.

This is why the audit trail and the right to explanation are not just good governance practice: they are legal requirements for a decision-making process that is both challengeable and defensible. An organisation that cannot produce the AI process record in response to a challenge is in a weaker position than one that can.

Does the AI provider's liability cover us if something goes wrong?

Not in the ordinary sense. The council's own legal liability for its decisions is not transferred to the AI provider by virtue of using their platform. The AI provider may have contractual obligations (accuracy representations, data processing agreements, liability caps), but those are separate from the council's public law and data protection obligations to residents.

A data processing agreement (DPA) with the AI provider is a UK GDPR requirement where the provider processes personal data on the council's behalf. The DPA allocates processor obligations to the provider. But the council remains the data controller and retains responsibility for the lawfulness of the processing it chooses to carry out.

Who is accountable for AI-assisted decisions and how accountability is demonstrated

The accountability question, who is responsible when an AI-assisted decision goes wrong, has a clear answer in UK public law: the organisation is responsible, and the officer who made the decision is responsible. AI does not change the accountability structure for public sector decision making. It changes the process through which decisions are reached, not the responsibility for their outcomes.

This is sometimes experienced as a source of anxiety: 'if I approve an AI recommendation and it turns out to be wrong, am I responsible?' The answer is yes, and that is appropriate, because the accountability structure of public sector decision making is designed to ensure that someone is always responsible for decisions affecting residents. What AI governance does is give that responsible person the evidence and the process they need to make a defensible decision and to demonstrate that they did so.

Accountability for AI-assisted decisions works the same way as accountability for any other public sector decision. The officer who makes the decision is accountable. AI governance provides the evidence trail that shows the decision was made properly.

Demonstrating accountability for an AI-assisted decision involves three things.

- The audit record: a complete, immutable record of what the AI processed, what it recommended, and what the officer decided. This is the primary accountability document: it shows that the process was followed correctly and that a named person made the final determination.

- The decision record: the officer's recorded reasoning. Not necessarily a lengthy narrative, but a recorded professional judgement that confirms the decision was theirs and that they considered the AI output in the context of the case. Where the officer's decision differs from the AI recommendation, the reason should be noted.

- The Assurance Designer record: the pre-populated governance documentation confirming that the appropriate standards are applied and the human oversight structure is in place.

An organisation that can produce these three documents for any AI-assisted decision is in a defensible position. An organisation that cannot produce them is not, regardless of what the AI provider's terms of service say.

How Arto provides the governance architecture that legal AI use requires

Meeting the legal conditions for AI use in council decision making requires a specific governance architecture: enforced human oversight, a complete audit trail, documented compliance, and the ability to explain decisions. These are governance requirements, not technology requirements, but the right platform operationalises them at scale.

Arto is built around these requirements. Not because they were added as a compliance layer after the platform was designed, but because the platform was designed for the UK public sector context where these requirements are non-negotiable.

Where to go from here

Getting AI approved internally

How to navigate the full internal approval process with DPO, IT security, legal and senior leadership.

UK GDPR and AI in detail

Article 22, lawful basis, DPIAs, data minimisation — the full UK GDPR analysis for AI in local government.

Audit trail and accountability records

How the Arto audit trail works and what it provides for legal challenge, Ombudsman investigations and judicial review.
