Rather than introducing AI tools and attempting to manage risk afterwards, public sector organisations should adopt a governance-first approach where every AI deployment is auditable, controlled and aligned to the applicable UK standards. This approach allows organisations to start small, prove measurable value quickly and expand safely.

The steps below set out exactly how to do this. They apply whether your organisation is considering AI for the first time or has previously seen a deployment stall.

Why starting with AI feels risky in local government

Starting with AI feels risky in local government because the consequences of getting it wrong are public, not private.

In a commercial organisation, a failed AI project costs money and time. In a public sector organisation, it can also cost public trust, generate scrutiny from the Information Commissioner's Office, produce headlines, and — in the most serious cases — affect the safety or welfare of the residents the service exists to support.

Those stakes are not imaginary. They are the reason that AI moves more slowly in the public sector than in commercial markets, and they are legitimate. A service lead who is cautious about deploying AI is not being obstructive. They are being responsible.

Each of their concerns is answerable. But they are only answerable if governance is part of the design of the deployment, not something added afterwards when the concerns are raised.

The specific concerns that slow most AI projects in local government are consistent and recognisable.

- Who is accountable if the AI produces a wrong or harmful output? The organisation, not the platform.

- How can we demonstrate that every AI-assisted decision was lawful, fair and compliant with UK GDPR?

- Will this be approved by our data protection officer, IT security team and information governance lead?

- What happens if something goes wrong publicly and we cannot explain how the AI made the decision?

- We have seen pilots fail before. What makes this different?

Why most AI projects in local government stall

Most AI projects in local government do not fail because of the technology. They stall because governance is treated as a step that comes after the technology decision, not before it.

The sequence is familiar. A service lead identifies a problem. An AI tool is selected or piloted. Work begins. At some point, often weeks or months in, the governance assessment starts. The data protection officer identifies processing concerns that were not considered at the design stage. The IT security team raises questions about the AI model that the platform provider cannot fully answer. The information governance lead requests documentation that does not exist yet. The project pauses.

At that point, fixing the governance gap requires either significant rework or abandonment. The organisation concludes that AI is too complicated for its context. The service lead moves on to other priorities. The problem the project was meant to solve remains unsolved.

The mistake is not using AI. The mistake is treating governance as something to deal with later.

What safe AI deployment looks like in practice

A safe AI deployment in local government satisfies six criteria. These criteria do not guarantee that AI will produce the right outcome in every case; no system can. What they do guarantee is that the organisation can account for what the AI did, why it did it and who approved it, in a way that will withstand scrutiny from any governance body.

1. Clear accountability

Every AI-assisted decision has a named officer who is responsible for it. The accountability structure is documented before deployment begins, not after something goes wrong.

2. Full audit trail

Every execution generates an immutable record of what triggered the workflow, what data was accessed, what the AI agent did and what output was produced. That record is available immediately for review.
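One way to make an audit record tamper-evident is to chain each entry to the hash of the entry before it. The sketch below is a minimal illustration under stated assumptions and does not show how any particular platform implements it. The `AuditLog` class and its field names (`trigger`, `data_accessed`, `agent_action`, `output`) are hypothetical. Hash chaining only makes changes detectable. Genuine immutability also depends on write-once storage and access controls.

```python
import hashlib
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(record: dict) -> str:
    # Canonical JSON (sorted keys) so identical content always produces the same hash
    return hashlib.sha256(json.dumps(record, sort_keys=True).encode("utf-8")).hexdigest()


@dataclass
class AuditLog:
    """Hypothetical append-only log: each entry stores the hash of the previous one."""
    entries: list = field(default_factory=list)

    def append(self, trigger: str, data_accessed: list, agent_action: str, output: str) -> dict:
        record = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "trigger": trigger,              # what started this run
            "data_accessed": data_accessed,  # which fields or sources were read
            "agent_action": agent_action,    # what the AI agent did
            "output": output,                # what was produced
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS_HASH,
        }
        record["hash"] = _digest(record)     # hash covers every field above
        self.entries.append(record)
        return record

    def verify(self) -> bool:
        """Recompute the chain: editing or reordering entries, or deleting any but the latest, breaks it."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True
```

Deleting only the most recent entry would not break the chain. To detect that, the latest hash would need to be stored somewhere outside the log.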

3. Human oversight at decision points

At every point where an AI output will inform or lead to a decision that affects a resident or service user, a qualified officer reviews the output and provides a recorded sign-off before the decision proceeds.

4. UK data residency

Resident data processed in AI workflows does not leave the United Kingdom and is not used to train AI models. The legal basis for processing is established before deployment begins.

5. Compliance with applicable standards

The deployment is aligned to UK GDPR, WCAG 2.2, ISO 42001, the OECD AI Principles and the UK Government AI Playbook. This alignment is documented and evidenced, not asserted.

6. Governance visibility for IT and compliance teams

The governance and compliance leads in the organisation have a clear, current view of every AI deployment in use: what it does, what data it accesses, who has approved it and what its governance status is.
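A governance register can be as simple as one structured record per deployment. The sketch below uses a hypothetical `Deployment` record and status values chosen only for illustration. It holds the four items listed above (what a deployment does, the data it accesses, who approved it and its governance status) in a form compliance teams can query.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional, Tuple


class GovernanceStatus(Enum):
    DRAFT = "draft"
    IN_REVIEW = "in review"
    APPROVED = "approved"
    SUSPENDED = "suspended"


@dataclass(frozen=True)
class Deployment:
    name: str
    purpose: str                    # what it does
    data_accessed: Tuple[str, ...]  # what data it touches
    approved_by: Optional[str]      # named approver, None until sign-off
    status: GovernanceStatus


def needs_attention(register: list) -> list:
    """Names of deployments that are not approved or have no recorded approver."""
    return [
        d.name
        for d in register
        if d.status is not GovernanceStatus.APPROVED or d.approved_by is None
    ]
```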

How to start using AI safely in practice: six steps

STEP 1 Define the problem, not the technology

Begin with a specific, observable problem in a specific service area: demand that cannot be met, a backlog that is growing, or a statutory obligation that is not being met consistently. The problem should be concrete enough that you can describe the current state and the desired state in two sentences each.

- Not: 'we want to use AI in our service'

- Yes: 'we receive 400 change of circumstances notifications per week and each takes 45 to 90 minutes to process manually'

- The problem definition becomes the basis for the governance assessment, the ROI baseline and the approval case
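The example problem statement already contains the figures for a baseline. As a worked illustration using only those numbers, the weekly manual workload is:

```latex
400 \times 45\ \text{min} = 18\,000\ \text{min} = 300\ \text{h}
\qquad\text{to}\qquad
400 \times 90\ \text{min} = 36\,000\ \text{min} = 600\ \text{h}
```

That is between 300 and 600 officer-hours per week. If we assume a 37-hour working week (this figure does not come from the example), that is roughly 8 to 16 full-time equivalents.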

STEP 2 Identify a single, contained workflow

Match the defined problem to a single AI workflow with a clear input, a defined process and a specific output. The workflow should be narrow enough that its data requirements, decision points and outputs can all be documented before deployment begins. Avoid starting with a broad 'AI strategy' — start with one workflow in one service area.

- The workflow should have a measurable output: a validation report, a triage summary, a draft document

- It should involve a limited, defined dataset — not open-ended access to council systems

- It should have natural human review points where an officer confirms the AI output before it is acted upon
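Writing the workflow down as a structured specification makes the three criteria above checkable before deployment. In this sketch, `WorkflowSpec` and its fields are hypothetical names. Filtering each record to a declared field list is one simple way to hold the workflow to a limited dataset. It does not replace access controls on the source systems.

```python
from dataclasses import dataclass
from typing import FrozenSet, Tuple


@dataclass(frozen=True)
class WorkflowSpec:
    """Hypothetical description of one contained workflow, documented before go-live."""
    name: str
    input_source: str               # the clear input
    allowed_fields: FrozenSet[str]  # the limited, defined dataset
    output: str                     # the measurable output, e.g. a validation report
    review_points: Tuple[str, ...]  # where an officer confirms the AI output

    def is_documented(self) -> bool:
        """True only if input, dataset, output and review points are all defined."""
        return all([self.input_source, self.allowed_fields, self.output, self.review_points])

    def minimise(self, record: dict) -> dict:
        """Drop every field the spec does not declare before the AI step sees the record."""
        return {k: v for k, v in record.items() if k in self.allowed_fields}
```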

STEP 3 Build governance in before the workflow runs

Before any live data is processed, establish the governance framework for this specific workflow. This means: identifying the lawful basis for data processing, completing a data protection impact assessment, defining the accountability structure, documenting the human oversight gates and testing the workflow against non-live data to confirm it behaves as specified.

- This step is what most organisations do last. Do it first.

- The DPO, IT security team and information governance lead should be involved at this stage, not after pilot results are in

- A completed governance package at this stage makes every subsequent approval faster
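The ordering in this step can be enforced in the workflow itself rather than left as a recommendation. The minimal sketch below uses a hypothetical `GovernanceRecord` with one field per item in the paragraph above, and the workflow refuses live data until every item is complete. A real DPIA or lawful-basis decision is a documented judgement. Here, the strings and booleans stand in for references to those documents.

```python
from dataclasses import dataclass


@dataclass
class GovernanceRecord:
    """Hypothetical pre-live checklist for one workflow."""
    lawful_basis: str = ""
    dpia_complete: bool = False
    accountable_officer: str = ""
    oversight_gates_documented: bool = False
    passed_non_live_test: bool = False

    def outstanding(self) -> list:
        checks = [
            ("lawful basis", bool(self.lawful_basis)),
            ("DPIA", self.dpia_complete),
            ("accountable officer", bool(self.accountable_officer)),
            ("human oversight gates", self.oversight_gates_documented),
            ("test against non-live data", self.passed_non_live_test),
        ]
        return [item for item, done in checks if not done]


def assert_ready_for_live_data(record: GovernanceRecord) -> None:
    """Refuse to let live data into the workflow while any item is outstanding."""
    gaps = record.outstanding()
    if gaps:
        raise PermissionError("Governance incomplete: " + ", ".join(gaps))
```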

STEP 4 Enforce human oversight at every significant decision point

Map every point in the workflow where the AI output will inform or lead to a decision that affects a resident or service user. At each of those points, a qualified officer must review the AI output, apply professional judgement and record their decision before the workflow proceeds. That record must be timestamped, attributed to a named officer and permanently preserved.

- AI supports the officer's decision — it does not replace it

- The officer's sign-off is the accountability record. It must exist for every significant decision.

- Human oversight gates are not optional — they are the mechanism by which the organisation retains accountability
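In code, an oversight gate is a point the workflow cannot pass without a recorded decision. The sketch below is illustrative only: `OversightGate`, `SignOff` and the accept/amend/reject vocabulary are hypothetical. It keeps the sign-off in memory. In practice, the sign-off would also be written to the permanent audit trail.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional

DECISIONS = {"accept", "amend", "reject"}  # hypothetical decision vocabulary


@dataclass(frozen=True)
class SignOff:
    officer: str    # named, accountable officer
    decision: str
    rationale: str  # the officer's professional judgement, in their own words
    timestamp: str  # UTC, recorded when the decision is made


class OversightGate:
    """Blocks the workflow at one decision point until an officer records a decision."""

    def __init__(self, gate_id: str, ai_output: str):
        self.gate_id = gate_id
        self.ai_output = ai_output
        self._signoff: Optional[SignOff] = None

    def sign(self, officer: str, decision: str, rationale: str) -> SignOff:
        if self._signoff is not None:
            raise RuntimeError(f"{self.gate_id}: decision already recorded and cannot be overwritten")
        if not officer.strip():
            raise ValueError("sign-off must be attributed to a named officer")
        if decision not in DECISIONS:
            raise ValueError(f"unknown decision {decision!r}")
        self._signoff = SignOff(officer, decision, rationale, datetime.now(timezone.utc).isoformat())
        return self._signoff

    def proceed(self) -> SignOff:
        """Return the sign-off so the caller can route on it; raise if none exists yet."""
        if self._signoff is None:
            raise PermissionError(f"{self.gate_id}: awaiting officer review")
        return self._signoff
```

The gate returns the decision rather than acting on it. Routing an "amend" or "reject" is left to the surrounding workflow, and the officer's decision is never hidden inside the AI step.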

STEP 5 Measure and report the outcome from day one

Before the workflow goes live, agree the baseline metrics: how long does the current manual process take? How many cases per week? What is the error rate or backlog size? From the first run, track time saved, cases processed and outcomes produced against that baseline. Report this data to senior leadership and governance teams within the first month.

- Early measurement does two things: it builds the evidence base for expanding the deployment and it demonstrates responsible governance to any scrutiny body

- The ROI case for expanding to other service areas is built from this data, not from promises

- Redcar and Cleveland Council reduced contact centre demand by 28% within three months of deployment
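The comparison against baseline is simple arithmetic, which is part of why it can be reported from the first week. Below is a minimal sketch with hypothetical names. Any figures passed to it here are illustrative and do not come from any real deployment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Baseline:
    """Agreed before go-live from the current manual process (hypothetical fields)."""
    cases_per_week: int
    minutes_per_case: float


def weekly_report(baseline: Baseline, cases_processed: int, minutes_per_case_now: float) -> dict:
    """Compare one week of the governed workflow against the manual baseline.

    minutes_per_case_now should include the officer's review and sign-off time,
    otherwise the saving is overstated.
    """
    manual_minutes = cases_processed * baseline.minutes_per_case
    current_minutes = cases_processed * minutes_per_case_now
    saved = manual_minutes - current_minutes
    return {
        "cases_processed": cases_processed,
        "share_of_weekly_demand_pct": round(100 * cases_processed / baseline.cases_per_week, 1),
        "hours_saved": round(saved / 60, 1),
        "time_reduction_pct": round(100 * saved / manual_minutes, 1) if manual_minutes else 0.0,
    }
```

The key design choice is to count review time in `minutes_per_case_now`. The officer sign-off from Step 4 is part of the process, so leaving it out would overstate the saving.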

STEP 6 Expand methodically, not opportunistically

Once the first workflow is live, governed and producing measurable results, the case for expanding to a second workflow is straightforward. Use the same process: define the problem, identify the workflow, build the governance in, enforce oversight, measure. Each successful deployment makes the next one easier because the internal evidence base grows and the approval process accelerates.

- Land in one service area. Prove value. Expand across service areas.

- Every subsequent approval is easier because the proof already exists

- The governance framework established for the first workflow applies to every subsequent one

IN SHORT: Start with one workflow, in one service area, with governance built in from day one. Prove the value. Then expand.

How to get a governed AI deployment approved internally

The single most effective thing a service lead can do to accelerate internal approval is to arrive at the governance conversation with the governance questions already answered.

Governance teams do not block AI projects because they are opposed to AI. They block projects because the evidence they need to assess the risk is not available. A data protection officer presented with a completed DPIA, a documented accountability structure, a data flow map and evidence that the AI system was tested against the applicable compliance standards before deployment has very little left to assess.

Governance that is built in before the workflow runs, rather than added afterwards, produces exactly that evidence package as a natural output of the deployment process.

Presenting this evidence in a single structured governance package, assembled before the approval conversation rather than in response to questions during it, reduces the number of approval cycles required. Each governance body receives what it needs. Approval proceeds.

The governance teams most likely to be involved in approving an AI deployment are:

- The Data Protection Officer needs a completed DPIA, lawful basis documentation, data minimisation evidence and the audit trail architecture.

- The IT security lead needs the hosting environment, data handling approach, access control model and incident response process.

- The information governance lead needs the data flow map, retention schedule and evidence that third-party processors meet UK GDPR requirements.

- Senior leadership or the scrutiny committee needs the accountability structure, measurable outcome evidence and, where available, comparable deployment results from other councils.
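Assembling the package before the approval conversation is easier with a checklist that shows which reviewer still needs what. In this sketch, the body names and evidence labels paraphrase the list above and are otherwise hypothetical. Comparable results from other councils are left out because the list marks them as "where available".

```python
from typing import Dict, Set

# Hypothetical mapping of each approving body to the evidence it needs
REQUIRED_EVIDENCE: Dict[str, Set[str]] = {
    "Data Protection Officer": {"DPIA", "lawful basis", "data minimisation evidence", "audit trail architecture"},
    "IT security lead": {"hosting environment", "data handling approach", "access control model", "incident response process"},
    "Information governance lead": {"data flow map", "retention schedule", "third-party processor evidence"},
    "Senior leadership or scrutiny committee": {"accountability structure", "outcome evidence"},
}


def package_gaps(package: Set[str]) -> Dict[str, Set[str]]:
    """Return, per approving body, the evidence still missing from the package."""
    gaps = {}
    for body, needed in REQUIRED_EVIDENCE.items():
        missing = needed - package
        if missing:
            gaps[body] = missing
    return gaps
```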

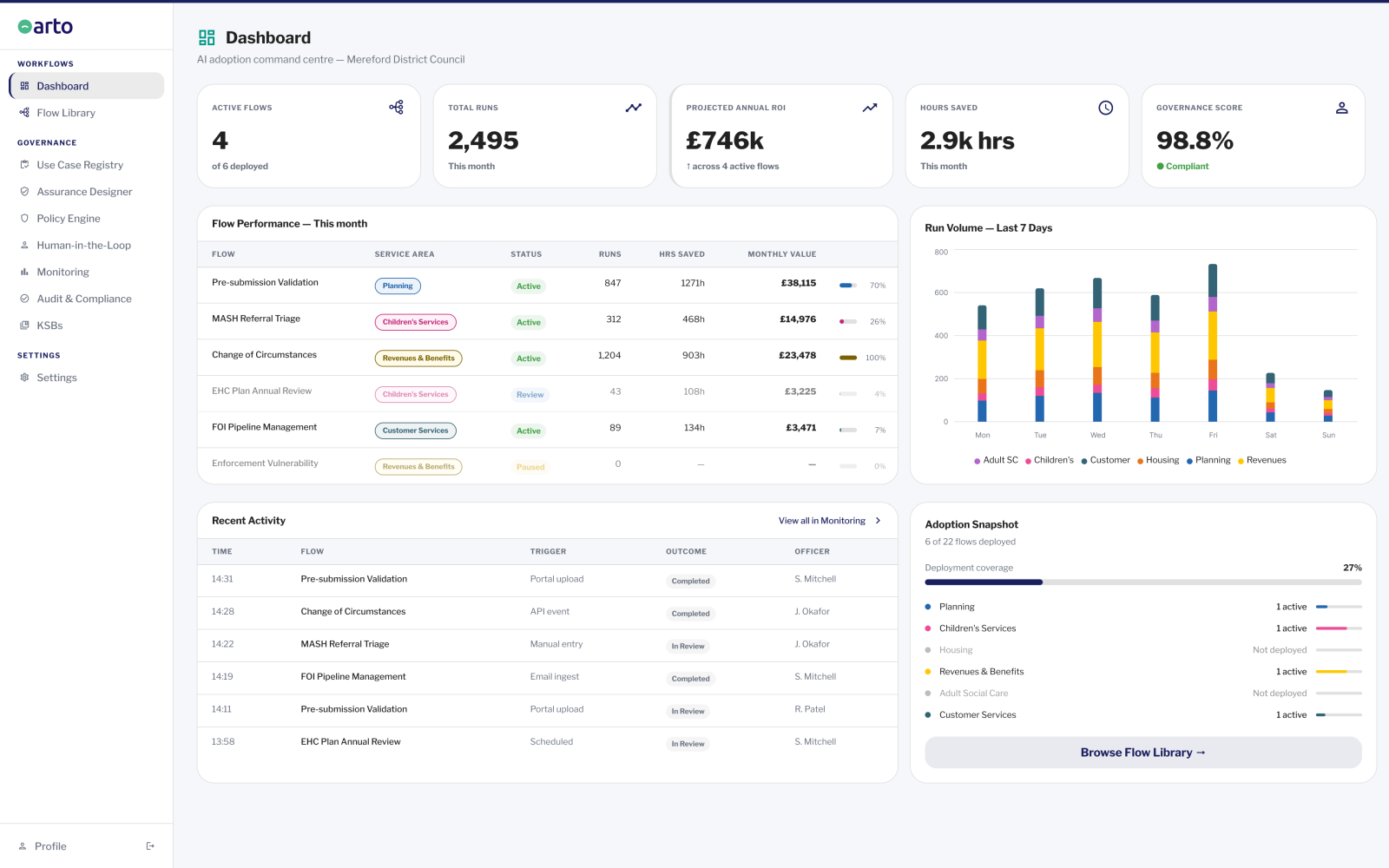

How Arto makes this approach achievable in practice

The governance-first approach described on this page is correct. For most public sector organisations, the challenge is not knowing what good looks like. The challenge is the time, expertise and coordination required to build the governance infrastructure from scratch for every new AI deployment.

Arto carries the majority of that burden at the platform level. Every Arto Supported Flow is designed and built to align with key standards including ISO 27001, ISO 42001 and UK GDPR. An assurance case aligned to the 10 GDS AI Playbook principles is pre-populated and ready to deploy. The Assurance Designer pre-populates the evidence base for DPIA supplementary evidence, IT security assessment and information governance sign-off. The audit trail is generated automatically on every run: timestamped, immutable and exportable for any governance body. Human oversight gates are enforced by the platform, so the workflow does not proceed past a decision point until the responsible officer has reviewed the AI output and recorded their decision.

The decisions about which problem to solve in which service area, and how quickly to expand, remain the organisation's. Arto provides the governance infrastructure. Everything else is the service lead's.

Arto acts as the governance layer that allows councils to deploy AI safely, without the uncertainty, risk and internal friction that typically slows adoption.

Where to go from here

Find a workflow for your service area

If you are ready to identify a specific workflow for your first deployment, browse the pre-built Arto Supported Flows by service area.

Explore workflows

Move through internal approval

If you have a workflow in mind and need to get it through governance, IT and DPO review, the 'Getting AI approved' guide covers every step.

Get AI approved

See Arto in your service area

If you want to see how Arto handles the governance requirements for your specific service area, speak with the team.

Book a governance review